1. Overview of Strimzi

Strimzi is based on Apache Kafka, a popular platform for streaming data delivery and processing. Strimzi makes it easy to run Apache Kafka on OpenShift or Kubernetes.

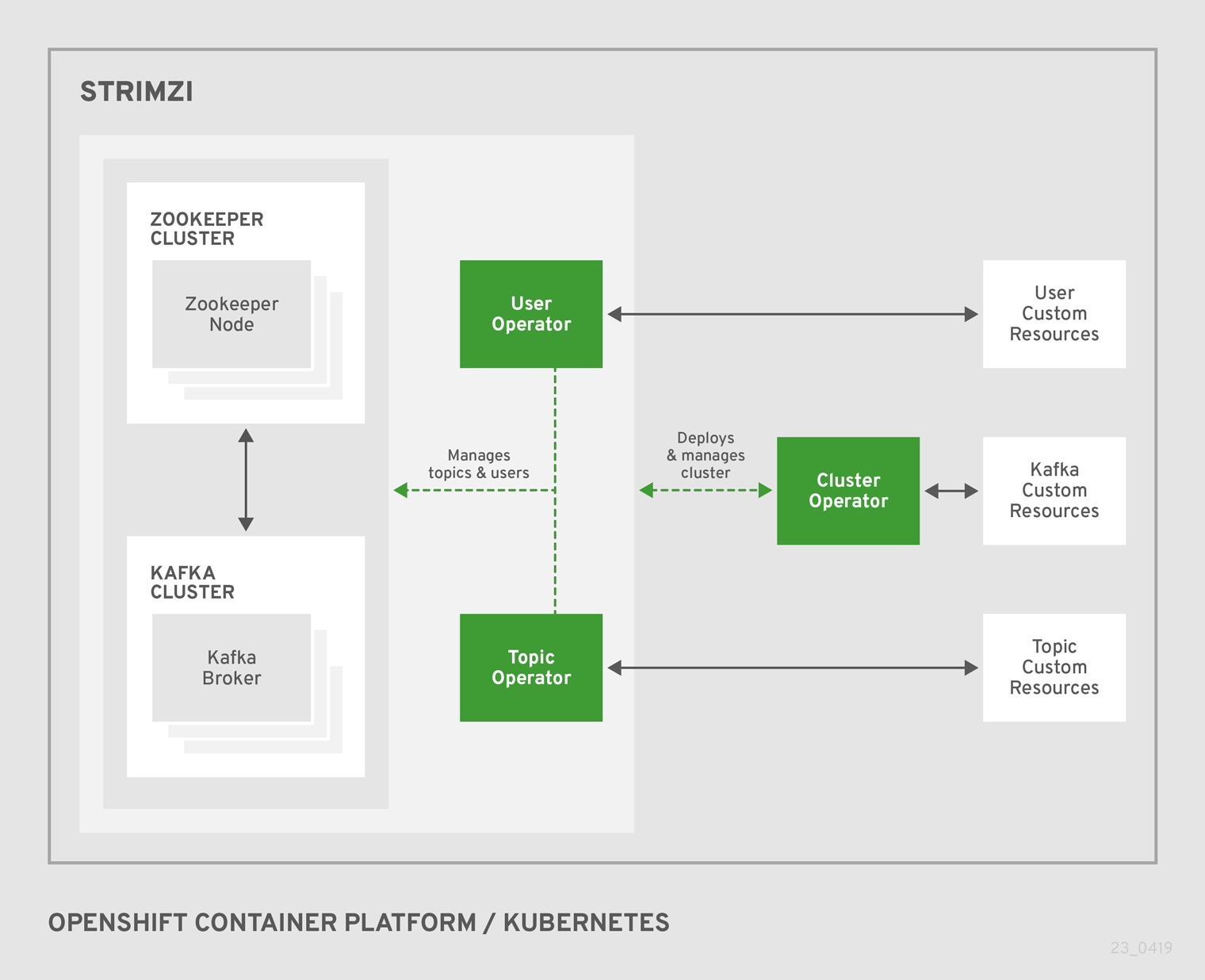

Strimzi provides three operators:

- Cluster Operator: Responsible for deploying and managing Apache Kafka clusters within an OpenShift or Kubernetes cluster.

- Topic Operator: Responsible for managing Kafka topics within a Kafka cluster running within an OpenShift or Kubernetes cluster.

- User Operator: Responsible for managing Kafka users within a Kafka cluster running within an OpenShift or Kubernetes cluster.

This guide describes how to install and use Strimzi.

1.1. Kafka Key Features

- Designed for horizontal scalability

- Message ordering guarantee at the partition level

- Message rewind/replay

- "Long term" storage allows the reconstruction of an application state by replaying the messages

- Combines with compacted topics to use Kafka as a key-value store

For more information about Apache Kafka, see the Apache Kafka website.

1.2. Document Conventions

In this document, replaceable text is styled in monospace and italics.

For example, in the following code, you will want to replace my-namespace with the name of your namespace:

    sed -i 's/namespace: .*/namespace: my-namespace/' install/cluster-operator/*RoleBinding*.yaml

2. Getting started with Strimzi

Strimzi works on all types of clusters, from public and private clouds to local deployments intended for development.

This guide expects that an OpenShift or Kubernetes cluster is available and that the `kubectl` and `oc` command-line tools are installed and configured to connect to the running cluster.

When no existing OpenShift or Kubernetes cluster is available, Minikube or Minishift can be used to create a local cluster. More details can be found in Installing Kubernetes and OpenShift clusters.

Note: To run the commands in this guide, your Kubernetes or OpenShift Origin user must have the rights to manage role-based access control (RBAC).

For more information about OpenShift and setting up an OpenShift cluster, see the OpenShift documentation.

2.1. Installing Strimzi and deploying components

To install Strimzi, download the release artefacts from GitHub.

The folder contains several YAML files to help you deploy the components of Strimzi to OpenShift or Kubernetes, perform common operations, and configure your Kafka cluster. The YAML files are referenced throughout this documentation.

Additionally, a Helm Chart is provided for deploying the Cluster Operator using Helm. The container images are available through the Docker Hub.

The remainder of this chapter provides an overview of each component and instructions for deploying the components to OpenShift or Kubernetes using the YAML files provided.

Note: Although container images for Strimzi are available in the Docker Hub, we recommend that you use the YAML files provided instead.

2.2. Custom resources

Custom resource definitions (CRDs) extend the Kubernetes API, providing definitions to create and modify custom resources to an OpenShift or Kubernetes cluster. Custom resources are created as instances of CRDs.

In Strimzi, CRDs introduce custom resources specific to Strimzi to an OpenShift or Kubernetes cluster, such as Kafka, Kafka Connect, Kafka Mirror Maker, and users and topics custom resources. CRDs provide configuration instructions, defining the schemas used to instantiate and manage the Strimzi-specific resources. CRDs also allow Strimzi resources to benefit from native OpenShift or Kubernetes features like CLI accessibility and configuration validation.

CRDs require a one-time installation in a cluster. Depending on the cluster setup, installation typically requires cluster admin privileges.

Note: Access to manage custom resources is limited to Strimzi administrators.

CRDs and custom resources are defined as YAML files.

A CRD defines a new kind of resource, such as kind:Kafka, within an OpenShift or Kubernetes cluster.

The OpenShift or Kubernetes API server allows custom resources to be created based on the kind and understands from the CRD how to validate and store the custom resource when it is added to the OpenShift or Kubernetes cluster.

Warning: When CRDs are deleted, custom resources of that type are also deleted. Additionally, the resources created by the custom resource, such as pods and statefulsets, are also deleted.

2.2.1. Strimzi custom resource example

Each Strimzi-specific custom resource conforms to the schema defined by the CRD for the resource’s kind.

To understand the relationship between a CRD and a custom resource, let’s look at a sample of the CRD for a Kafka topic.

    apiVersion: apiextensions.k8s.io/v1beta1
    kind: CustomResourceDefinition
    metadata: # (1)
      name: kafkatopics.kafka.strimzi.io
      labels:
        app: strimzi
    spec: # (2)
      group: kafka.strimzi.io
      versions:
      - name: v1beta1
        # ...
      scope: Namespaced
      names:
        # ...
        singular: kafkatopic
        plural: kafkatopics
        shortNames:
        - kt # (3)
      additionalPrinterColumns: # (4)
        # ...
      validation: # (5)
        openAPIV3Schema:
          properties:
            spec:
              type: object
              properties:
                partitions:
                  type: integer
                  minimum: 1
                replicas:
                  type: integer
                  minimum: 1
                  maximum: 32767
          # ...

(1) The metadata for the topic CRD, its name and a label to identify the CRD.

(2) The specification for this CRD, including the group (domain) name, the plural name and the supported schema version, which are used in the URL to access the API of the topic. The other names are used to identify instance resources in the CLI. For example, `kubectl get kafkatopic my-topic` or `kubectl get kafkatopics`.

(3) The shortname can be used in CLI commands. For example, `kubectl get kt` can be used as an abbreviation instead of `kubectl get kafkatopic`.

(4) The information presented when using a `get` command on the custom resource.

(5) openAPIV3Schema validation provides validation for the creation of topic custom resources. For example, a topic requires at least one partition and one replica.

Note: You can identify the CRD YAML files supplied with the Strimzi installation files, because the file names contain an index number followed by ‘Crd’.

Here is a corresponding example of a KafkaTopic custom resource.

    apiVersion: kafka.strimzi.io/v1beta1
    kind: KafkaTopic # (1)
    metadata:
      name: my-topic
      labels:
        strimzi.io/cluster: my-cluster
    spec: # (2)
      partitions: 1
      replicas: 1
      config:
        retention.ms: 7200000
        segment.bytes: 1073741824

(1) The `kind` and `apiVersion` identify the CRD of which the custom resource is an instance.

(2) The spec shows the number of partitions and replicas for the topic as well as configuration for the retention period for a message to remain in the topic and the segment file size for the log.

Custom resources can be applied to a cluster through the platform CLI. When the custom resource is created, it uses the same validation as the built-in resources of the Kubernetes API.

After a KafkaTopic custom resource is created, the Topic Operator is notified and corresponding Kafka topics are created in Strimzi.
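As a minimal sketch of that workflow, the shell function below pipes a `KafkaTopic` manifest to `kubectl apply` and then lists the topic resources using the `kt` short name from the CRD. The function name `create_example_topic` and the default namespace `my-namespace` are placeholders chosen for illustration; they are not part of Strimzi.

```shell
# Sketch: create the my-topic KafkaTopic and list topic resources.
# create_example_topic and the namespace argument are illustrative names.
create_example_topic() {
  local namespace="${1:-my-namespace}"

  # Apply the custom resource; the API server validates it against the CRD schema.
  kubectl apply -n "$namespace" -f - <<'EOF'
apiVersion: kafka.strimzi.io/v1beta1
kind: KafkaTopic
metadata:
  name: my-topic
  labels:
    strimzi.io/cluster: my-cluster
spec:
  partitions: 1
  replicas: 1
EOF

  # List KafkaTopic resources using the short name defined in the CRD.
  kubectl get kt -n "$namespace"
}
```

Calling `create_example_topic my-namespace` assumes that the Cluster Operator, a Kafka cluster named `my-cluster`, and the Topic Operator are already running in that namespace. On OpenShift, `oc` can be used in place of `kubectl`.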

2.2.2. Strimzi custom resource status

The status property of a Strimzi-specific custom resource publishes the current state of the resource to users and tools that need the information.

Status information is useful for tracking progress related to a resource achieving its desired state, as defined by the spec property. The status provides the time and reason the state of the resource changed and details of events preventing or delaying the Operator from realizing the desired state.

Strimzi creates and maintains the status of custom resources, periodically evaluating the current state of the custom resource and updating its status accordingly.

When performing an update on a custom resource using kubectl edit, for example, its status is not editable. Moreover, changing the status would not affect the configuration of the Kafka cluster.

Important: The status property feature for Strimzi-specific custom resources is still under development and only available for Kafka resources.

Here we see the status property specified for a Kafka custom resource.

    apiVersion: kafka.strimzi.io/v1beta1
    kind: Kafka
    metadata:
    spec:
      # ...
    status:
      conditions: # (1)
      - lastTransitionTime: 2019-06-02T23:46:57+0000
        status: "True"
        type: Ready # (2)
      listeners: # (3)
      - addresses:
        - host: my-cluster-kafka-bootstrap.myproject.svc
          port: 9092
        type: plain
      - addresses:
        - host: my-cluster-kafka-bootstrap.myproject.svc
          port: 9093
        type: tls
      - addresses:
        - host: 172.29.49.180
          port: 9094
        type: external
        # ...

(1) Status `conditions` describe criteria related to the status that cannot be deduced from the existing resource information, or are specific to the instance of a resource.

(2) The `Ready` condition indicates whether the Cluster Operator currently considers the Kafka cluster able to handle traffic.

(3) The `listeners` describe the current Kafka bootstrap addresses by type.

Important: The status for `external` listeners is still under development and does not provide a specific IP address for external listeners of type `nodeport`.

Note: The Kafka bootstrap addresses listed in the status do not signify that those endpoints or the Kafka cluster is in a ready state.

You can access status information for a resource from the command line. For more information, see Checking the status of a custom resource.
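One way to read the status from the command line is to ask `kubectl` for only the `status` subtree of the resource. The helpers below are a sketch under that assumption; `kafka_status` and `kafka_ready` are illustrative names, and the default namespace `my-namespace` is a placeholder.

```shell
# Sketch: print the status block of a Kafka custom resource.
# kafka_status and kafka_ready are illustrative helper names, not Strimzi commands.
kafka_status() {
  local name="$1"
  local namespace="${2:-my-namespace}"
  # -o jsonpath selects only the .status subtree of the resource.
  kubectl get kafka "$name" -n "$namespace" -o jsonpath='{.status}'
}

# Sketch: print only the status value of the Ready condition.
kafka_ready() {
  local name="$1"
  local namespace="${2:-my-namespace}"
  # The filter expression picks the condition whose type is "Ready".
  kubectl get kafka "$name" -n "$namespace" \
    -o jsonpath='{.status.conditions[?(@.type=="Ready")].status}'
}
```

For example, `kafka_status my-cluster myproject` would print the whole status, and `kafka_ready my-cluster myproject` would print the value of the `Ready` condition if the Cluster Operator has set one.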

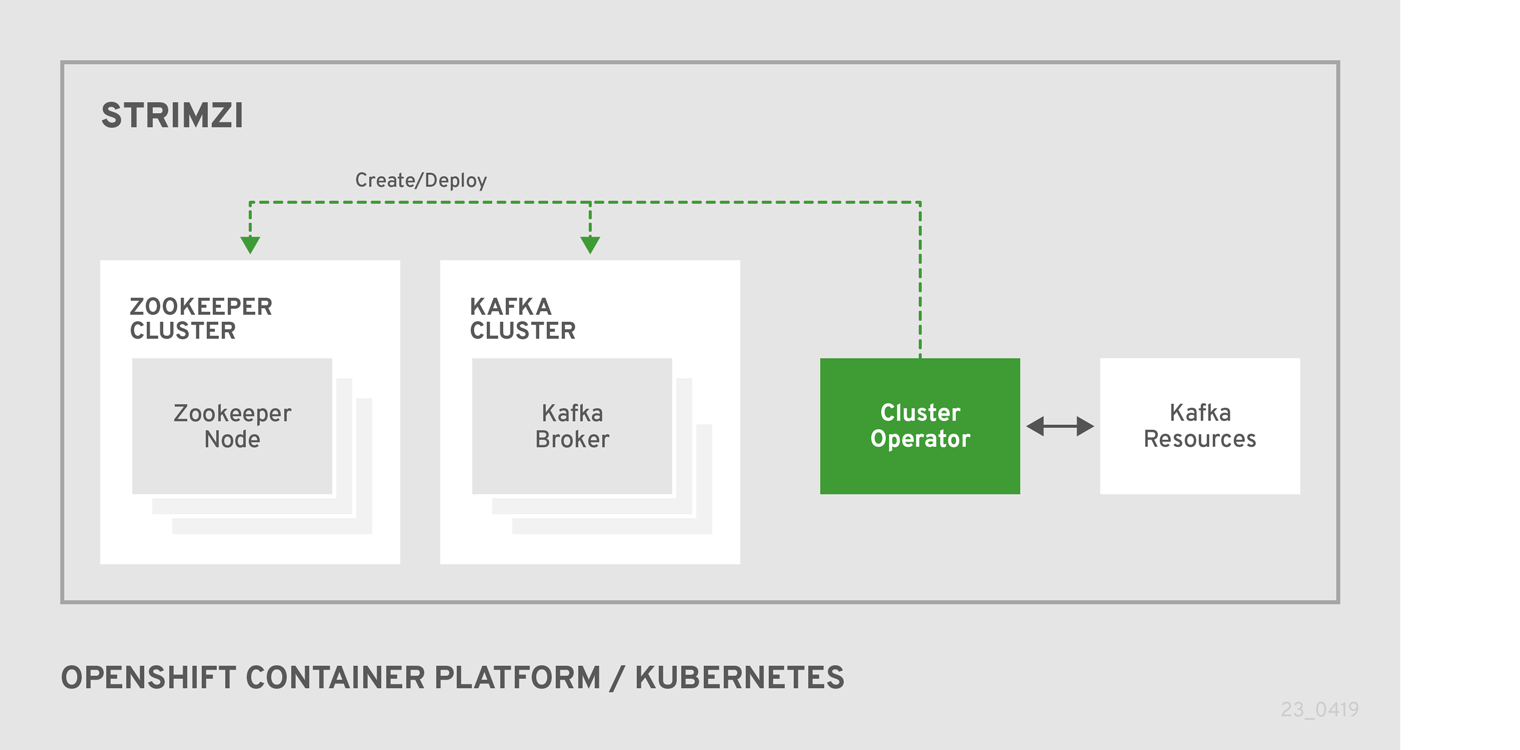

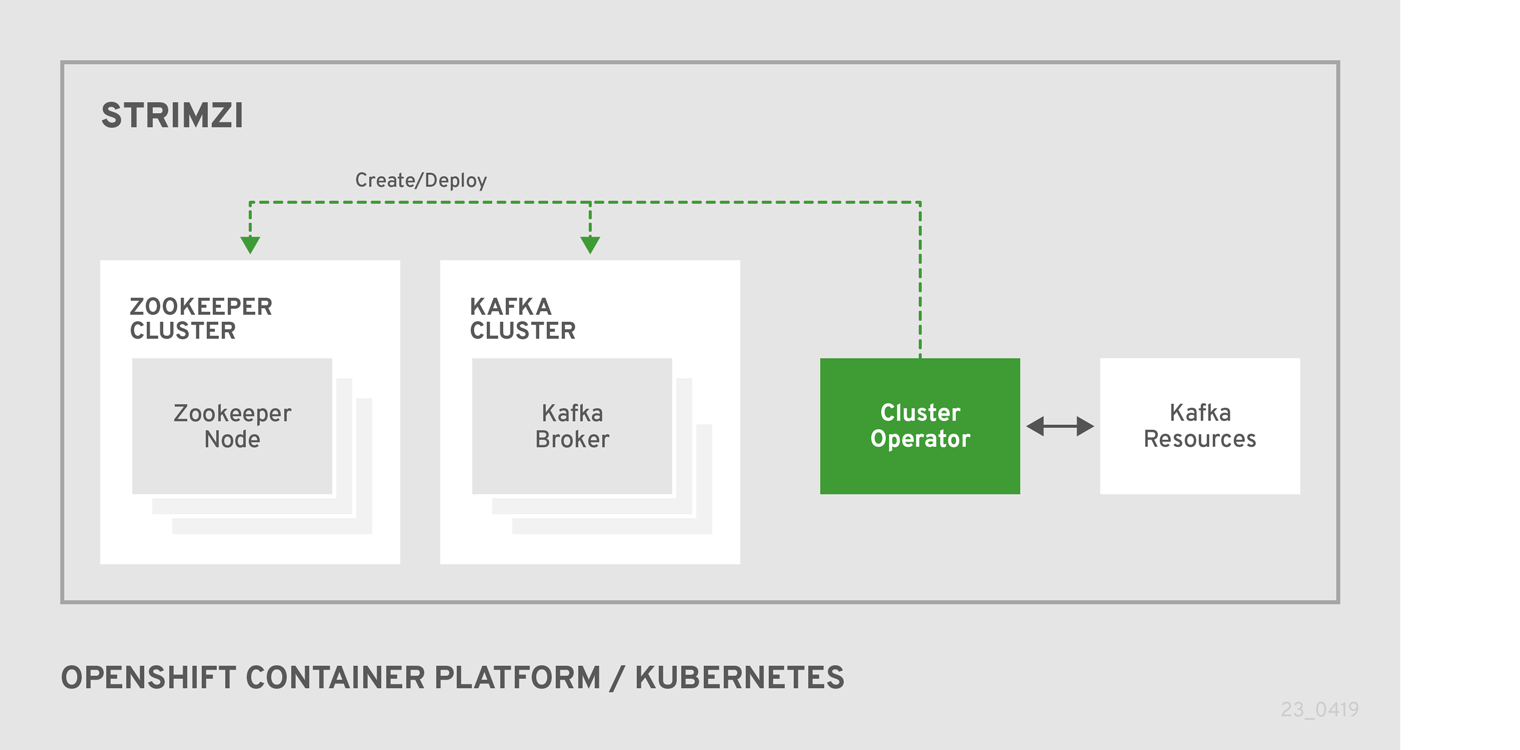

2.3. Cluster Operator

Strimzi uses the Cluster Operator to deploy and manage Kafka (including Zookeeper) and Kafka Connect clusters.

The Cluster Operator is deployed inside of the Kubernetes or OpenShift cluster.

To deploy a Kafka cluster, a Kafka resource with the cluster configuration has to be created within the Kubernetes or OpenShift cluster.

Based on what is declared inside of the Kafka resource, the Cluster Operator deploys a corresponding Kafka cluster.

For more information about the different configuration options supported by the Kafka resource, see Kafka cluster configuration.

Note: Strimzi contains example YAML files, which make deploying a Cluster Operator easier.

2.3.1. Overview of the Cluster Operator component

The Cluster Operator is in charge of deploying a Kafka cluster alongside a Zookeeper ensemble.

As part of the Kafka cluster, it can also deploy the Topic Operator, which provides operator-style topic management via KafkaTopic custom resources.

The Cluster Operator is also able to deploy a Kafka Connect cluster which connects to an existing Kafka cluster.

On OpenShift such a cluster can be deployed using the Source2Image feature, providing an easy way of including more connectors.

When the Cluster Operator is up, it starts to watch for certain OpenShift or Kubernetes resources containing the desired Kafka, Kafka Connect, or Kafka Mirror Maker cluster configuration. By default, it watches only in the same namespace or project where it is installed. The Cluster Operator can be configured to watch for more OpenShift projects or Kubernetes namespaces. Cluster Operator watches the following resources:

- A `Kafka` resource for the Kafka cluster.

- A `KafkaConnect` resource for the Kafka Connect cluster.

- A `KafkaConnectS2I` resource for the Kafka Connect cluster with Source2Image support.

- A `KafkaMirrorMaker` resource for the Kafka Mirror Maker instance.

When a new Kafka, KafkaConnect, KafkaConnectS2I, or KafkaMirrorMaker resource is created in the OpenShift or Kubernetes cluster, the operator gets the cluster description from the desired resource and starts creating a new Kafka, Kafka Connect, or Kafka Mirror Maker cluster by creating the other necessary OpenShift or Kubernetes resources, such as StatefulSets, Services, and ConfigMaps.

Every time the desired resource is updated by the user, the operator performs corresponding updates on the OpenShift or Kubernetes resources which make up the Kafka, Kafka Connect, or Kafka Mirror Maker cluster. Resources are either patched or deleted and then re-created in order to make the Kafka, Kafka Connect, or Kafka Mirror Maker cluster reflect the state of the desired cluster resource. This might cause a rolling update which might lead to service disruption.

Finally, when the desired resource is deleted, the operator starts to undeploy the cluster and delete all the related OpenShift or Kubernetes resources.

2.3.2. Deploying the Cluster Operator to Kubernetes

- Modify the installation files according to the namespace the Cluster Operator is going to be installed in.

  On Linux, use:

      sed -i 's/namespace: .*/namespace: my-namespace/' install/cluster-operator/*RoleBinding*.yaml

  On macOS, use:

      sed -i '' 's/namespace: .*/namespace: my-namespace/' install/cluster-operator/*RoleBinding*.yaml

- Deploy the Cluster Operator:

      kubectl apply -f install/cluster-operator -n my-namespace
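To see what the `sed` substitution in the first step does before running it against the real installation files, the sketch below applies the same expression to a throwaway file. The file name and its trimmed content are hypothetical stand-ins for a RoleBinding file, and `-i` is omitted so that the result is printed instead of written back.

```shell
# Sketch: show the effect of the namespace substitution on a throwaway file.
# The file content is a trimmed, hypothetical RoleBinding subject.
workdir="$(mktemp -d)"
cat > "$workdir/020-RoleBinding-example.yaml" <<'EOF'
subjects:
- kind: ServiceAccount
  name: strimzi-cluster-operator
  namespace: myproject
EOF

# Same expression as in the procedure, without -i so the result goes to stdout.
result="$(sed 's/namespace: .*/namespace: my-namespace/' "$workdir/020-RoleBinding-example.yaml")"
printf '%s\n' "$result"

# Clean up the temporary directory.
rm -rf "$workdir"
```

Every line that contains `namespace: ` followed by any value is rewritten to `namespace: my-namespace`, which is why the expression should only be run on the RoleBinding files.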

2.3.3. Deploying the Cluster Operator to OpenShift

Prerequisites

- A user with the `cluster-admin` role needs to be used, for example, `system:admin`.

Procedure

- Modify the installation files according to the namespace the Cluster Operator is going to be installed in.

  On Linux, use:

      sed -i 's/namespace: .*/namespace: my-project/' install/cluster-operator/*RoleBinding*.yaml

  On macOS, use:

      sed -i '' 's/namespace: .*/namespace: my-project/' install/cluster-operator/*RoleBinding*.yaml

- Deploy the Cluster Operator:

      oc apply -f install/cluster-operator -n my-project
      oc apply -f examples/templates/cluster-operator -n my-project

2.3.4. Deploying the Cluster Operator to watch multiple namespaces

- Edit the installation files according to the OpenShift project or Kubernetes namespace the Cluster Operator is going to be installed in.

  On Linux, use:

      sed -i 's/namespace: .*/namespace: my-namespace/' install/cluster-operator/*RoleBinding*.yaml

  On macOS, use:

      sed -i '' 's/namespace: .*/namespace: my-namespace/' install/cluster-operator/*RoleBinding*.yaml

- Edit the file `install/cluster-operator/050-Deployment-strimzi-cluster-operator.yaml` and in the environment variable `STRIMZI_NAMESPACE` list all the OpenShift projects or Kubernetes namespaces where the Cluster Operator should watch for resources. For example:

      apiVersion: extensions/v1beta1
      kind: Deployment
      spec:
        template:
          spec:
            serviceAccountName: strimzi-cluster-operator
            containers:
            - name: strimzi-cluster-operator
              image: strimzi/operator:0.12.0
              imagePullPolicy: IfNotPresent
              env:
              - name: STRIMZI_NAMESPACE
                value: myproject,myproject2,myproject3

- For all namespaces or projects which should be watched by the Cluster Operator, install the `RoleBindings`. Replace `my-namespace` or `my-project` with the OpenShift project or Kubernetes namespace used in the previous step.

  On Kubernetes this can be done using `kubectl apply`:

      kubectl apply -f install/cluster-operator/020-RoleBinding-strimzi-cluster-operator.yaml -n my-namespace
      kubectl apply -f install/cluster-operator/031-RoleBinding-strimzi-cluster-operator-entity-operator-delegation.yaml -n my-namespace
      kubectl apply -f install/cluster-operator/032-RoleBinding-strimzi-cluster-operator-topic-operator-delegation.yaml -n my-namespace

  On OpenShift this can be done using `oc apply`:

      oc apply -f install/cluster-operator/020-RoleBinding-strimzi-cluster-operator.yaml -n my-project
      oc apply -f install/cluster-operator/031-RoleBinding-strimzi-cluster-operator-entity-operator-delegation.yaml -n my-project
      oc apply -f install/cluster-operator/032-RoleBinding-strimzi-cluster-operator-topic-operator-delegation.yaml -n my-project

- Deploy the Cluster Operator.

  On Kubernetes this can be done using `kubectl apply`:

      kubectl apply -f install/cluster-operator -n my-namespace

  On OpenShift this can be done using `oc apply`:

      oc apply -f install/cluster-operator -n my-project
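Because the RoleBindings have to be installed once per watched namespace, the step can be scripted as a loop. The following is a sketch only; `install_rolebindings` is an illustrative helper name, and it assumes it is run from the directory that contains the `install` folder.

```shell
# Sketch: install the three RoleBindings into every watched namespace.
# install_rolebindings is an illustrative helper; pass the same names that
# are listed in the STRIMZI_NAMESPACE environment variable.
install_rolebindings() {
  local namespace file
  for namespace in "$@"; do
    for file in \
      020-RoleBinding-strimzi-cluster-operator.yaml \
      031-RoleBinding-strimzi-cluster-operator-entity-operator-delegation.yaml \
      032-RoleBinding-strimzi-cluster-operator-topic-operator-delegation.yaml
    do
      # One apply per file and namespace, as in the manual procedure.
      kubectl apply -f "install/cluster-operator/$file" -n "$namespace"
    done
  done
}
```

For the example deployment above, the call would be `install_rolebindings myproject myproject2 myproject3`. On OpenShift, replace `kubectl` with `oc` inside the function.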

2.3.5. Deploying the Cluster Operator to watch all namespaces

You can configure the Cluster Operator to watch Strimzi resources across all OpenShift projects or Kubernetes namespaces in your OpenShift or Kubernetes cluster. When running in this mode, the Cluster Operator automatically manages clusters in any new projects or namespaces that are created.

Prerequisites

- Your OpenShift or Kubernetes cluster is running.

Procedure

- Configure the Cluster Operator to watch all namespaces:

  - Edit the `050-Deployment-strimzi-cluster-operator.yaml` file.

  - Set the value of the `STRIMZI_NAMESPACE` environment variable to `*`.

        apiVersion: extensions/v1beta1
        kind: Deployment
        spec:
          template:
            spec:
              # ...
              serviceAccountName: strimzi-cluster-operator
              containers:
              - name: strimzi-cluster-operator
                image: strimzi/operator:0.12.0
                imagePullPolicy: IfNotPresent
                env:
                - name: STRIMZI_NAMESPACE
                  value: "*"
                # ...

- Create `ClusterRoleBindings` that grant cluster-wide access to all OpenShift projects or Kubernetes namespaces to the Cluster Operator.

  On OpenShift, use the `oc adm policy` command:

      oc adm policy add-cluster-role-to-user strimzi-cluster-operator-namespaced --serviceaccount strimzi-cluster-operator -n my-project
      oc adm policy add-cluster-role-to-user strimzi-entity-operator --serviceaccount strimzi-cluster-operator -n my-project
      oc adm policy add-cluster-role-to-user strimzi-topic-operator --serviceaccount strimzi-cluster-operator -n my-project

  Replace `my-project` with the project in which you want to install the Cluster Operator.

  On Kubernetes, use the `kubectl create` command:

      kubectl create clusterrolebinding strimzi-cluster-operator-namespaced --clusterrole=strimzi-cluster-operator-namespaced --serviceaccount my-namespace:strimzi-cluster-operator
      kubectl create clusterrolebinding strimzi-cluster-operator-entity-operator-delegation --clusterrole=strimzi-entity-operator --serviceaccount my-namespace:strimzi-cluster-operator
      kubectl create clusterrolebinding strimzi-cluster-operator-topic-operator-delegation --clusterrole=strimzi-topic-operator --serviceaccount my-namespace:strimzi-cluster-operator

  Replace `my-namespace` with the namespace in which you want to install the Cluster Operator.

- Deploy the Cluster Operator to your OpenShift or Kubernetes cluster.

  On OpenShift, use the `oc apply` command:

      oc apply -f install/cluster-operator -n my-project

  On Kubernetes, use the `kubectl apply` command:

      kubectl apply -f install/cluster-operator -n my-namespace

2.3.6. Deploying the Cluster Operator using Helm Chart

Prerequisites

- Helm client has to be installed on the local machine.

- Helm has to be installed in the OpenShift or Kubernetes cluster.

Procedure

- Add the Strimzi Helm Chart repository:

      helm repo add strimzi https://strimzi.io/charts/

- Deploy the Cluster Operator using the Helm command line tool:

      helm install strimzi/strimzi-kafka-operator

- Verify whether the Cluster Operator has been deployed successfully using the Helm command line tool:

      helm ls

Additional resources

- For more information about Helm, see the Helm website.

2.3.7. Deploying the Cluster Operator from OperatorHub.io

OperatorHub.io is a catalog of Kubernetes Operators sourced from multiple providers. It offers you an alternative way to install stable versions of Strimzi using the Strimzi Kafka Operator.

The Operator Lifecycle Manager is used for the installation and management of all Operators published on OperatorHub.io.

To install Strimzi from OperatorHub.io, locate the Strimzi Kafka Operator and follow the instructions provided.

2.4. Kafka cluster

You can use Strimzi to deploy an ephemeral or persistent Kafka cluster to OpenShift or Kubernetes. When installing Kafka, Strimzi also installs a Zookeeper cluster and adds the necessary configuration to connect Kafka with Zookeeper.

- Ephemeral cluster: In general, an ephemeral (that is, temporary) Kafka cluster is suitable for development and testing purposes, not for production. This deployment uses `emptyDir` volumes for storing broker information (for Zookeeper) and topics or partitions (for Kafka). Using an `emptyDir` volume means that its content is strictly related to the pod life cycle and is deleted when the pod goes down.

- Persistent cluster: A persistent Kafka cluster uses `PersistentVolumes` to store Zookeeper and Kafka data. The `PersistentVolume` is acquired using a `PersistentVolumeClaim` to make it independent of the actual type of the `PersistentVolume`. For example, it can use HostPath volumes on Minikube or Amazon EBS volumes in Amazon AWS deployments without any changes in the YAML files. The `PersistentVolumeClaim` can use a `StorageClass` to trigger automatic volume provisioning.

Strimzi includes two templates for deploying a Kafka cluster:

- `kafka-ephemeral.yaml` deploys an ephemeral cluster, named `my-cluster` by default.

- `kafka-persistent.yaml` deploys a persistent cluster, named `my-cluster` by default.

The cluster name is defined by the name of the resource and cannot be changed after the cluster has been deployed. To change the cluster name before you deploy the cluster, edit the Kafka.metadata.name property of the resource in the relevant YAML file.

    apiVersion: kafka.strimzi.io/v1beta1
    kind: Kafka
    metadata:
      name: my-cluster
    # ...

2.4.1. Deploying the Kafka cluster to Kubernetes

You can deploy an ephemeral or persistent Kafka cluster to Kubernetes on the command line.

Prerequisites

- The Cluster Operator is deployed.

Procedure

- If you plan to use the cluster for development or testing purposes, you can create and deploy an ephemeral cluster using `kubectl apply`.

      kubectl apply -f examples/kafka/kafka-ephemeral.yaml

- If you plan to use the cluster in production, create and deploy a persistent cluster using `kubectl apply`.

      kubectl apply -f examples/kafka/kafka-persistent.yaml

Additional resources

- For more information on deploying the Cluster Operator, see Cluster Operator.

- For more information on the different configuration options supported by the `Kafka` resource, see Kafka cluster configuration.

2.4.2. Deploying the Kafka cluster to OpenShift

The following procedure describes how to deploy an ephemeral or persistent Kafka cluster to OpenShift on the command line. You can also deploy clusters in the OpenShift console.

Prerequisites

- The Cluster Operator is deployed.

Procedure

- If you plan to use the cluster for development or testing purposes, create and deploy an ephemeral cluster using `oc apply`.

      oc apply -f examples/kafka/kafka-ephemeral.yaml

- If you plan to use the cluster in production, create and deploy a persistent cluster using `oc apply`.

      oc apply -f examples/kafka/kafka-persistent.yaml

Additional resources

- For more information on deploying the Cluster Operator, see Cluster Operator.

- For more information on the different configuration options supported by the `Kafka` resource, see Kafka cluster configuration.

2.5. Kafka Connect

Kafka Connect is a tool for streaming data between Apache Kafka and external systems. It provides a framework for moving large amounts of data into and out of your Kafka cluster while maintaining scalability and reliability. Kafka Connect is typically used to integrate Kafka with external databases and storage and messaging systems.

You can use Kafka Connect to:

- Build connector plug-ins (as JAR files) for your Kafka cluster

- Run connectors

Kafka Connect includes the following built-in connectors for moving file-based data into and out of your Kafka cluster.

| File Connector | Description |
|---|---|
| FileStreamSourceConnector | Transfers data to your Kafka cluster from a file (the source). |
| FileStreamSinkConnector | Transfers data from your Kafka cluster to a file (the sink). |

In Strimzi, you can use the Cluster Operator to deploy a Kafka Connect or Kafka Connect Source-2-Image (S2I) cluster to your OpenShift or Kubernetes cluster.

A Kafka Connect cluster is implemented as a Deployment with a configurable number of workers. The Kafka Connect REST API is available on port 8083, as the <connect-cluster-name>-connect-api service.
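As a sketch of how that service name is used, the helpers below query two standard Kafka Connect REST endpoints, `/connectors` and `/connector-plugins`, with `curl`. The function names are illustrative, the default cluster name `my-connect-cluster` is an assumption, and the commands are expected to be run from a pod in the same namespace, because the service name is only resolvable inside the cluster.

```shell
# Sketch: query the Kafka Connect REST API through the connect-api service.
# list_connectors and list_connector_plugins are illustrative helper names.
list_connectors() {
  local cluster="${1:-my-connect-cluster}"
  # GET /connectors returns the names of the connectors that are currently defined.
  curl -s "http://${cluster}-connect-api:8083/connectors"
}

list_connector_plugins() {
  local cluster="${1:-my-connect-cluster}"
  # GET /connector-plugins returns the connector classes available to the workers.
  curl -s "http://${cluster}-connect-api:8083/connector-plugins"
}
```

Listing the plug-ins is a quick way to check whether additional connectors added to the image have been picked up by Kafka Connect.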

For more information on deploying a Kafka Connect S2I cluster, see Creating a container image using OpenShift builds and Source-to-Image.

2.5.1. Deploying Kafka Connect to your Kubernetes cluster

You can deploy a Kafka Connect cluster to your Kubernetes cluster by using the Cluster Operator.

- Use the `kubectl apply` command to create a `KafkaConnect` resource based on the `kafka-connect.yaml` file:

      kubectl apply -f examples/kafka-connect/kafka-connect.yaml

2.5.2. Deploying Kafka Connect to your OpenShift cluster

You can deploy a Kafka Connect cluster to your OpenShift cluster by using the Cluster Operator. Kafka Connect is provided as an OpenShift template that you can deploy from the command line or the OpenShift console.

- Use the `oc apply` command to create a `KafkaConnect` resource based on the `kafka-connect.yaml` file:

      oc apply -f examples/kafka-connect/kafka-connect.yaml

2.5.3. Extending Kafka Connect with connector plug-ins

The Strimzi container images for Kafka Connect include the two built-in file connectors: FileStreamSourceConnector and FileStreamSinkConnector. You can add your own connectors by using one of the following methods:

- Create a Docker image from the Kafka Connect base image.

- Create a container image using OpenShift builds and Source-to-Image (S2I).

Creating a Docker image from the Kafka Connect base image

You can use the Kafka container image on Docker Hub as a base image for creating your own custom image with additional connector plug-ins.

The following procedure explains how to create your custom image and add it to the /opt/kafka/plugins directory. At startup, the Strimzi version of Kafka Connect loads any third-party connector plug-ins contained in the /opt/kafka/plugins directory.

- Create a new `Dockerfile` using `strimzi/kafka:0.12.0-kafka-2.2.1` as the base image:

      FROM strimzi/kafka:0.12.0-kafka-2.2.1
      USER root:root
      COPY ./my-plugins/ /opt/kafka/plugins/
      USER 1001

- Build the container image.

- Push your custom image to your container registry.

- Edit the `KafkaConnect.spec.image` property of the `KafkaConnect` custom resource to point to the new container image. If set, this property overrides the `STRIMZI_DEFAULT_KAFKA_CONNECT_IMAGE` variable referred to in the next step.

      apiVersion: kafka.strimzi.io/v1beta1
      kind: KafkaConnect
      metadata:
        name: my-connect-cluster
      spec:
        #...
        image: my-new-container-image

- In the `install/cluster-operator/050-Deployment-strimzi-cluster-operator.yaml` file, edit the `STRIMZI_DEFAULT_KAFKA_CONNECT_IMAGE` variable to point to the new container image.

Additional resources

- For more information on the `KafkaConnect.spec.image` property, see Container images.

- For more information on the `STRIMZI_DEFAULT_KAFKA_CONNECT_IMAGE` variable, see Cluster Operator Configuration.
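The build and push steps of the procedure above can be sketched with the Docker CLI as follows. The helper name `build_and_push_connect_image` and any image reference passed to it are hypothetical; the sketch assumes it is run from the directory that contains the `Dockerfile` and the `./my-plugins/` folder, and that you are already logged in to your registry.

```shell
# Sketch: build the custom Kafka Connect image and push it to a registry.
# build_and_push_connect_image is an illustrative helper name.
build_and_push_connect_image() {
  # Full image reference, for example my-registry.example.com/my-org/my-connect:latest
  # (a hypothetical name).
  local image="${1:?usage: build_and_push_connect_image IMAGE}"

  # Build from the Dockerfile in the current directory, then push only if the build succeeded.
  docker build -t "$image" . && docker push "$image"
}
```

The same image reference is then the value to use for `KafkaConnect.spec.image` or for the `STRIMZI_DEFAULT_KAFKA_CONNECT_IMAGE` variable.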

Creating a container image using OpenShift builds and Source-to-Image

You can use OpenShift builds and the Source-to-Image (S2I) framework to create new container images. An OpenShift build takes a builder image with S2I support, together with source code and binaries provided by the user, and uses them to build a new container image. Once built, container images are stored in OpenShift’s local container image repository and are available for use in deployments.

A Kafka Connect builder image with S2I support is provided on the Docker Hub as part of the strimzi/kafka:0.12.0-kafka-2.2.1 image. This S2I image takes your binaries (with plug-ins and connectors) and stores them in the /tmp/kafka-plugins/s2i directory. It creates a new Kafka Connect image from this directory, which can then be used with the Kafka Connect deployment. When started using the enhanced image, Kafka Connect loads any third-party plug-ins from the /tmp/kafka-plugins/s2i directory.

- On the command line, use the `oc apply` command to create and deploy a Kafka Connect S2I cluster:

      oc apply -f examples/kafka-connect/kafka-connect-s2i.yaml

- Create a directory with Kafka Connect plug-ins:

      $ tree ./my-plugins/
      ./my-plugins/
      ├── debezium-connector-mongodb
      │   ├── bson-3.4.2.jar
      │   ├── CHANGELOG.md
      │   ├── CONTRIBUTE.md
      │   ├── COPYRIGHT.txt
      │   ├── debezium-connector-mongodb-0.7.1.jar
      │   ├── debezium-core-0.7.1.jar
      │   ├── LICENSE.txt
      │   ├── mongodb-driver-3.4.2.jar
      │   ├── mongodb-driver-core-3.4.2.jar
      │   └── README.md
      ├── debezium-connector-mysql
      │   ├── CHANGELOG.md
      │   ├── CONTRIBUTE.md
      │   ├── COPYRIGHT.txt
      │   ├── debezium-connector-mysql-0.7.1.jar
      │   ├── debezium-core-0.7.1.jar
      │   ├── LICENSE.txt
      │   ├── mysql-binlog-connector-java-0.13.0.jar
      │   ├── mysql-connector-java-5.1.40.jar
      │   ├── README.md
      │   └── wkb-1.0.2.jar
      └── debezium-connector-postgres
          ├── CHANGELOG.md
          ├── CONTRIBUTE.md
          ├── COPYRIGHT.txt
          ├── debezium-connector-postgres-0.7.1.jar
          ├── debezium-core-0.7.1.jar
          ├── LICENSE.txt
          ├── postgresql-42.0.0.jar
          ├── protobuf-java-2.6.1.jar
          └── README.md

- Use the `oc start-build` command to start a new build of the image using the prepared directory:

      oc start-build my-connect-cluster-connect --from-dir ./my-plugins/

  Note: The name of the build is the same as the name of the deployed Kafka Connect cluster.

- Once the build has finished, the new image is used automatically by the Kafka Connect deployment.

2.6. Kafka Mirror Maker

The Cluster Operator deploys one or more Kafka Mirror Maker replicas to replicate data between Kafka clusters. This process is called mirroring to avoid confusion with the Kafka partitions replication concept. The Mirror Maker consumes messages from the source cluster and republishes those messages to the target cluster.

For information about example resources and the format for deploying Kafka Mirror Maker, see Kafka Mirror Maker configuration.

2.6.1. Deploying Kafka Mirror Maker to Kubernetes

Prerequisites

- Before deploying Kafka Mirror Maker, the Cluster Operator must be deployed.

Procedure

- Deploy Kafka Mirror Maker on Kubernetes by creating the corresponding `KafkaMirrorMaker` resource.

      kubectl apply -f examples/kafka-mirror-maker/kafka-mirror-maker.yaml

Additional resources

- For more information about deploying the Cluster Operator, see Cluster Operator.

2.6.2. Deploying Kafka Mirror Maker to OpenShift

On OpenShift, Kafka Mirror Maker is provided in the form of a template. It can be deployed from the template using the command-line or through the OpenShift console.

Prerequisites

- Before deploying Kafka Mirror Maker, the Cluster Operator must be deployed.

Procedure

- Create a Kafka Mirror Maker cluster from the command line:

      oc apply -f examples/kafka-mirror-maker/kafka-mirror-maker.yaml

Additional resources

- For more information about deploying the Cluster Operator, see Cluster Operator.

2.7. Kafka Bridge

The Cluster Operator deploys one or more Kafka bridge replicas to send data between Kafka clusters and clients via HTTP API.

For information about example resources and the format for deploying Kafka Bridge, see Kafka Bridge configuration.

2.7.1. Deploying Kafka Bridge to your Kubernetes cluster

You can deploy a Kafka Bridge cluster to your Kubernetes cluster by using the Cluster Operator.

- Use the `kubectl apply` command to create a `KafkaBridge` resource based on the `kafka-bridge.yaml` file:

      kubectl apply -f examples/kafka-bridge/kafka-bridge.yaml

2.7.2. Deploying Kafka Bridge to your OpenShift cluster

You can deploy a Kafka Bridge cluster to your OpenShift cluster by using the Cluster Operator. Kafka Bridge is provided as an OpenShift template that you can deploy from the command line or the OpenShift console.

- Use the `oc apply` command to create a `KafkaBridge` resource based on the `kafka-bridge.yaml` file:

      oc apply -f examples/kafka-bridge/kafka-bridge.yaml

2.8. Deploying example clients

Prerequisites

- An existing Kafka cluster for the client to connect to.

Procedure

- Deploy the producer.

  On Kubernetes, use `kubectl run`:

      kubectl run kafka-producer -ti --image=strimzi/kafka:0.12.0-kafka-2.2.1 --rm=true --restart=Never -- bin/kafka-console-producer.sh --broker-list cluster-name-kafka-bootstrap:9092 --topic my-topic

  On OpenShift, use `oc run`:

      oc run kafka-producer -ti --image=strimzi/kafka:0.12.0-kafka-2.2.1 --rm=true --restart=Never -- bin/kafka-console-producer.sh --broker-list cluster-name-kafka-bootstrap:9092 --topic my-topic

- Type your message into the console where the producer is running.

- Press Enter to send the message.

- Deploy the consumer.

  On Kubernetes, use `kubectl run`:

      kubectl run kafka-consumer -ti --image=strimzi/kafka:0.12.0-kafka-2.2.1 --rm=true --restart=Never -- bin/kafka-console-consumer.sh --bootstrap-server cluster-name-kafka-bootstrap:9092 --topic my-topic --from-beginning

  On OpenShift, use `oc run`:

      oc run kafka-consumer -ti --image=strimzi/kafka:0.12.0-kafka-2.2.1 --rm=true --restart=Never -- bin/kafka-console-consumer.sh --bootstrap-server cluster-name-kafka-bootstrap:9092 --topic my-topic --from-beginning

- Confirm that you see the incoming messages in the consumer console.

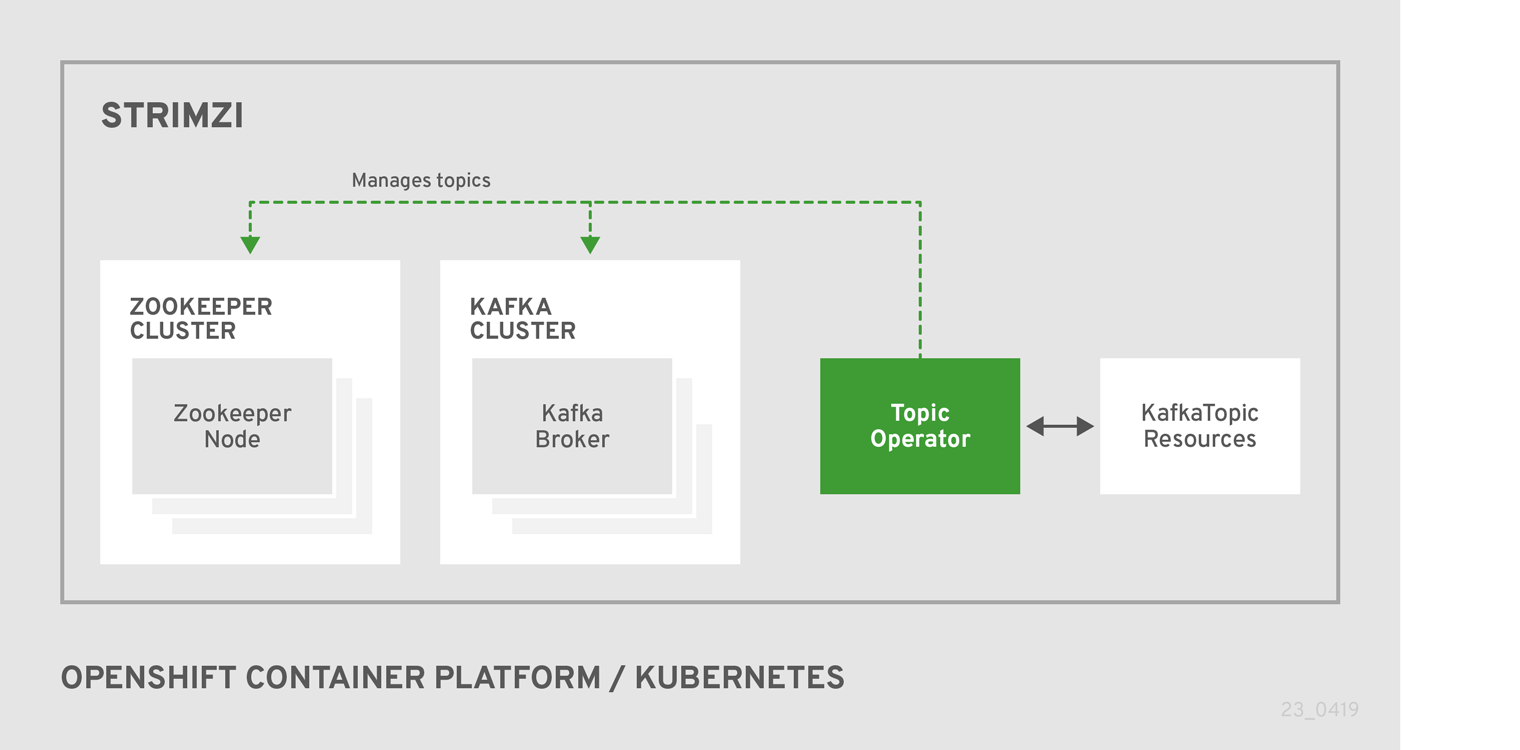

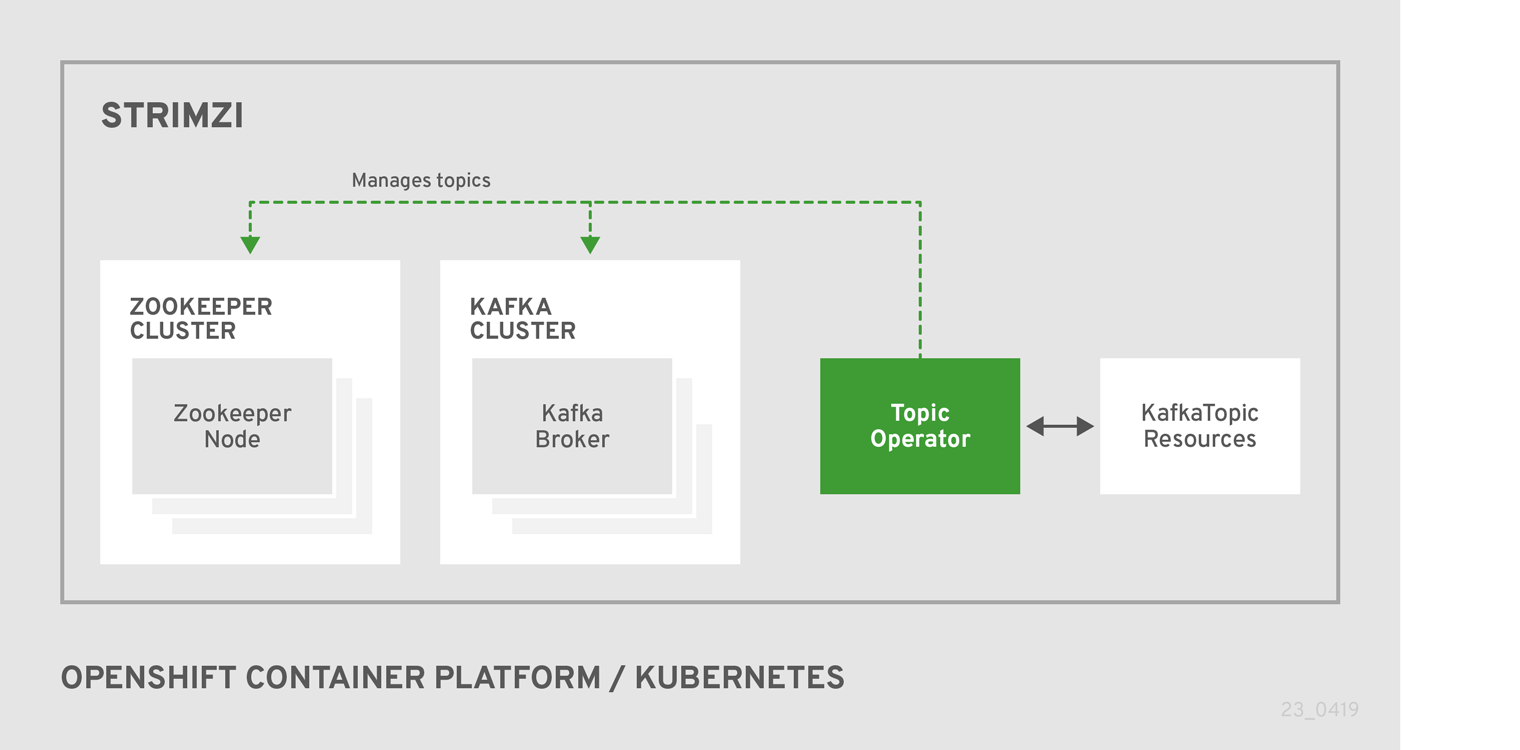

2.9. Topic Operator

2.9.1. Overview of the Topic Operator component

The Topic Operator provides a way of managing topics in a Kafka cluster via OpenShift or Kubernetes resources.

The role of the Topic Operator is to keep a set of KafkaTopic OpenShift or Kubernetes resources describing Kafka topics in sync with corresponding Kafka topics.

Specifically, if a KafkaTopic is:

-

Created, the operator will create the topic it describes

-

Deleted, the operator will delete the topic it describes

-

Changed, the operator will update the topic it describes

And also, in the other direction, if a topic is:

-

Created within the Kafka cluster, the operator will create a KafkaTopic describing it

-

Deleted from the Kafka cluster, the operator will delete the KafkaTopic describing it

-

Changed in the Kafka cluster, the operator will update the KafkaTopic describing it

This allows you to declare a KafkaTopic as part of your application’s deployment and the Topic Operator will take care of creating the topic for you.

Your application just needs to deal with producing or consuming from the necessary topics.

If the topic is reconfigured or reassigned to different Kafka nodes, the KafkaTopic will always be up to date.

For more details about creating, modifying and deleting topics, see Using the Topic Operator.
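As a minimal sketch of the kind of declaration described above, a KafkaTopic resource might look like the following. The topic name, the cluster name in the strimzi.io/cluster label, and the partition, replica, and retention values are assumptions chosen for illustration.

```yaml
# Illustrative sketch only; names and values below are assumptions.
apiVersion: kafka.strimzi.io/v1beta1
kind: KafkaTopic
metadata:
  name: my-topic                        # hypothetical topic name
  labels:
    strimzi.io/cluster: my-cluster      # hypothetical name of the Kafka cluster that owns the topic
spec:
  partitions: 3                         # number of partitions for the topic
  replicas: 1                           # replication factor for the topic
  config:
    retention.ms: 7200000               # example topic-level configuration option
```

Once such a resource is created, the Topic Operator is expected to create the matching topic in Kafka, and later changes on either side are synchronized as described above.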

2.9.2. Deploying the Topic Operator using the Cluster Operator

This procedure describes how to deploy the Topic Operator using the Cluster Operator. If you want to use the Topic Operator with a Kafka cluster that is not managed by Strimzi, you must deploy the Topic Operator as a standalone component. For more information, see Deploying the standalone Topic Operator.

-

A running Cluster Operator

-

A Kafka resource to be created or updated

-

Ensure that the Kafka.spec.entityOperator object exists in the Kafka resource. This configures the Entity Operator.

apiVersion: kafka.strimzi.io/v1beta1
kind: Kafka
metadata:
  name: my-cluster
spec:
  #...
  entityOperator:
    topicOperator: {}
    userOperator: {}

-

Configure the Topic Operator using the fields described in the EntityTopicOperatorSpec schema reference.

-

Create or update the Kafka resource in OpenShift or Kubernetes.

On Kubernetes, use kubectl apply:

kubectl apply -f your-file

On OpenShift, use oc apply:

oc apply -f your-file

-

For more information about deploying the Cluster Operator, see Cluster Operator.

-

For more information about deploying the Entity Operator, see Entity Operator.

-

For more information about the Kafka.spec.entityOperator object used to configure the Topic Operator when deployed by the Cluster Operator, see EntityOperatorSpec schema reference.

2.10. User Operator

The User Operator provides a way of managing Kafka users via OpenShift or Kubernetes resources.

2.10.1. Overview of the User Operator component

The User Operator manages Kafka users for a Kafka cluster by watching for KafkaUser OpenShift or Kubernetes resources that describe Kafka users and ensuring that they are configured properly in the Kafka cluster.

For example:

-

If a KafkaUser is created, the User Operator will create the user it describes

-

If a KafkaUser is deleted, the User Operator will delete the user it describes

-

If a KafkaUser is changed, the User Operator will update the user it describes

Unlike the Topic Operator, the User Operator does not sync any changes from the Kafka cluster with the OpenShift or Kubernetes resources. Kafka topics might be created by applications directly in Kafka, but users are not expected to be managed directly in the Kafka cluster in parallel with the User Operator, so this synchronization should not be needed.

The User Operator allows you to declare a KafkaUser as part of your application’s deployment.

When the user is created, the credentials will be created in a Secret.

Your application needs to use the user and its credentials for authentication and to produce or consume messages.

In addition to managing credentials for authentication, the User Operator also manages authorization rules by including a description of the user’s rights in the KafkaUser declaration.
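A KafkaUser declaration that combines authentication and authorization might look roughly like the following sketch. The user name, cluster label, topic name, and the specific ACL are assumptions for illustration, not a recommended policy.

```yaml
# Illustrative sketch only; names and the ACL below are assumptions.
apiVersion: kafka.strimzi.io/v1beta1
kind: KafkaUser
metadata:
  name: my-user                         # hypothetical user name
  labels:
    strimzi.io/cluster: my-cluster      # hypothetical name of the Kafka cluster
spec:
  authentication:
    type: tls                           # credentials are generated and stored in a Secret
  authorization:
    type: simple
    acls:
      - resource:
          type: topic
          name: my-topic                # hypothetical topic the user may access
          patternType: literal
        operation: Read                 # the right granted to the user on that topic
        host: "*"
```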

2.10.2. Deploying the User Operator using the Cluster Operator

-

A running Cluster Operator

-

A Kafka resource to be created or updated.

-

Edit the Kafka resource, ensuring it has a Kafka.spec.entityOperator.userOperator object that configures the User Operator how you want.

-

Create or update the Kafka resource in OpenShift or Kubernetes.

On Kubernetes this can be done using kubectl apply:

kubectl apply -f your-file

On OpenShift this can be done using oc apply:

oc apply -f your-file
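The Kafka resource edited in this procedure might look roughly like the following sketch. The reconciliationIntervalSeconds value is an assumption for illustration. An empty object (userOperator: {}) can be used to accept the defaults.

```yaml
# Illustrative sketch only; the interval value is an assumption.
apiVersion: kafka.strimzi.io/v1beta1
kind: Kafka
metadata:
  name: my-cluster
spec:
  # ...
  entityOperator:
    userOperator:
      reconciliationIntervalSeconds: 60   # example setting; omit to use the default
```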

-

For more information about deploying the Cluster Operator, see Cluster Operator.

-

For more information about the Kafka.spec.entityOperator object used to configure the User Operator when deployed by the Cluster Operator, see EntityOperatorSpec schema reference.

2.11. Strimzi Administrators

Strimzi includes several custom resources. By default, permission to create, edit, and delete these resources is limited to OpenShift or Kubernetes cluster administrators. If you want to allow non-cluster administrators to manage Strimzi resources, you must assign them the Strimzi Administrator role.

2.11.1. Designating Strimzi Administrators

-

Strimzi CustomResourceDefinitions are installed.

-

Create the strimzi-admin cluster role in OpenShift or Kubernetes.

On Kubernetes, use kubectl apply:

kubectl apply -f install/strimzi-admin

On OpenShift, use oc apply:

oc apply -f install/strimzi-admin

-

Assign the strimzi-admin ClusterRole to one or more existing users in the OpenShift or Kubernetes cluster.

On Kubernetes, use kubectl create:

kubectl create clusterrolebinding strimzi-admin --clusterrole=strimzi-admin --user=user1 --user=user2

On OpenShift, use oc adm:

oc adm policy add-cluster-role-to-user strimzi-admin user1 user2
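If you prefer to keep the role assignment in a file, the binding created by the kubectl create clusterrolebinding command above can be written declaratively. The following sketch uses the same example user names, user1 and user2, and could be applied with kubectl apply or oc apply.

```yaml
# Declarative equivalent of the clusterrolebinding command above.
# user1 and user2 are the example user names from the procedure.
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: strimzi-admin
subjects:
  - kind: User
    name: user1
    apiGroup: rbac.authorization.k8s.io
  - kind: User
    name: user2
    apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: ClusterRole
  name: strimzi-admin                   # the cluster role created in the previous step
  apiGroup: rbac.authorization.k8s.io
```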

2.12. Container images

Container images for Strimzi are available in the Docker Hub. The installation YAML files provided by Strimzi will pull the images directly from the Docker Hub.

If you do not have access to the Docker Hub or want to use your own container repository:

-

Pull all container images listed here

-

Push them into your own registry

-

Update the image names in the installation YAML files

|

Note

|

Each Kafka version supported for the release has a separate image. |

| Container image | Namespace/Repository | Description |
|---|---|---|
| Kafka | | Strimzi image for running Kafka |
| Operator | | Strimzi image for running the operators |
| Kafka Bridge | | Strimzi image for running the Strimzi Kafka Bridge |

3. Deployment configuration

This chapter describes how to configure different aspects of the supported deployments:

-

Kafka clusters

-

Kafka Connect clusters

-

Kafka Connect clusters with Source2Image support

-

Kafka Mirror Maker

3.1. Kafka cluster configuration

The full schema of the Kafka resource is described in the Kafka schema reference.

All labels that are applied to the desired Kafka resource will also be applied to the OpenShift or Kubernetes resources making up the Kafka cluster.

This provides a convenient mechanism for resources to be labeled as required.

3.1.1. Data storage considerations

An efficient data storage infrastructure is essential to the optimal performance of Strimzi.

Strimzi requires block storage and is designed to work optimally with cloud-based block storage solutions, including Amazon Elastic Block Store (EBS). The use of file storage (for example, NFS) is not recommended.

Choose local storage (local persistent volumes) when possible. If local storage is not available, you can use a Storage Area Network (SAN) accessed by a protocol such as Fibre Channel or iSCSI.

Apache Kafka and Zookeeper storage

Use separate disks for Apache Kafka and Zookeeper.

Three types of data storage are supported:

-

Ephemeral (Recommended for development only)

-

Persistent

-

JBOD (Just a Bunch of Disks, suitable for Kafka only)

For more information, see Kafka and Zookeeper storage.

Solid-state drives (SSDs), though not essential, can improve the performance of Kafka in large clusters where data is sent to and received from multiple topics asynchronously. SSDs are particularly effective with Zookeeper, which requires fast, low latency data access.

|

Note

|

You do not need to provision replicated storage because Kafka and Zookeeper both have built-in data replication. |

File systems

It is recommended that you configure your storage system to use the XFS file system. Strimzi is also compatible with the ext4 file system, but this might require additional configuration for best results.

3.1.2. Kafka and Zookeeper storage types

As stateful applications, Kafka and Zookeeper need to store data on disk. Strimzi supports three storage types for this data:

-

Ephemeral

-

Persistent

-

JBOD storage

|

Note

|

JBOD storage is supported only for Kafka, not for Zookeeper. |

When configuring a Kafka resource, you can specify the type of storage used by the Kafka broker and its corresponding Zookeeper node. You configure the storage type using the storage property in the following resources:

-

Kafka.spec.kafka

-

Kafka.spec.zookeeper

The storage type is configured in the type field.

|

Warning

|

The storage type cannot be changed after a Kafka cluster is deployed. |

Ephemeral storage

Ephemeral storage uses `emptyDir` volumes to store data.

To use ephemeral storage, the type field should be set to ephemeral.

|

Important

|

EmptyDir volumes are not persistent and the data stored in them will be lost when the Pod is restarted.

After the new pod is started, it has to recover all data from other nodes of the cluster.

Ephemeral storage is not suitable for use with single node Zookeeper clusters or for Kafka topics with a replication factor of 1, because it will lead to data loss.

|

apiVersion: kafka.strimzi.io/v1beta1
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
    storage:
      type: ephemeral
    # ...
  zookeeper:
    # ...
    storage:
      type: ephemeral
    # ...

Log directories

The ephemeral volume will be used by the Kafka brokers as log directories mounted into the following path:

/var/lib/kafka/data/kafka-log_idx_

Where idx is the Kafka broker pod index. For example, /var/lib/kafka/data/kafka-log0.

Persistent storage

Persistent storage uses Persistent Volume Claims to provision persistent volumes for storing data. Persistent Volume Claims can be used to provision volumes of many different types, depending on the Storage Class which will provision the volume. The storage types which can be used with persistent volume claims include many types of SAN storage as well as local persistent volumes.

To use persistent storage, the type has to be set to persistent-claim.

Persistent storage supports additional configuration options:

id (optional)

Storage identification number. This option is mandatory for storage volumes defined in a JBOD storage declaration. Default is 0.

size (required)

Defines the size of the persistent volume claim, for example, "1000Gi".

class (optional)

The OpenShift or Kubernetes Storage Class to use for dynamic volume provisioning.

selector (optional)

Allows selecting a specific persistent volume to use. It contains key:value pairs representing labels for selecting such a volume.

deleteClaim (optional)

Boolean value which specifies if the Persistent Volume Claim has to be deleted when the cluster is undeployed. Default is false.

|

Warning

|

Increasing the size of persistent volumes in an existing Strimzi cluster is only supported in OpenShift or Kubernetes versions that support persistent volume resizing. The persistent volume to be resized must use a storage class that supports volume expansion. For other versions of OpenShift or Kubernetes and storage classes which do not support volume expansion, you must decide the necessary storage size before deploying the cluster. Decreasing the size of existing persistent volumes is not possible. |

The following fragment shows a persistent storage configuration with a size of 1000Gi.

# ...
storage:
  type: persistent-claim
  size: 1000Gi
# ...

The following example demonstrates the use of a storage class.

# ...
storage:
  type: persistent-claim
  size: 1Gi
  class: my-storage-class
# ...

Finally, a selector can be used to select a specific labeled persistent volume to provide needed features such as an SSD.

# ...
storage:
  type: persistent-claim
  size: 1Gi
  selector:
    hdd-type: ssd
  deleteClaim: true
# ...

Storage class overrides

You can specify a different storage class for one or more Kafka brokers, instead of using the default storage class.

This is useful if, for example, storage classes are restricted to different availability zones or data centers.

You can use the overrides field for this purpose.

In this example, the default storage class is named my-storage-class:

apiVersion: kafka.strimzi.io/v1beta1
kind: Kafka
metadata:
  labels:
    app: my-cluster
  name: my-cluster
  namespace: myproject
spec:
  # ...
  kafka:
    replicas: 3
    storage:
      deleteClaim: true
      size: 100Gi
      type: persistent-claim
      class: my-storage-class
      overrides:
        - broker: 0
          class: my-storage-class-zone-1a
        - broker: 1
          class: my-storage-class-zone-1b
        - broker: 2
          class: my-storage-class-zone-1c
  # ...

As a result of the configured overrides property, the broker volumes use the following storage classes:

-

The persistent volumes of broker 0 will use my-storage-class-zone-1a.

-

The persistent volumes of broker 1 will use my-storage-class-zone-1b.

-

The persistent volumes of broker 2 will use my-storage-class-zone-1c.

The overrides property is currently used only to override storage class configurations. Overriding other storage configuration fields is not currently supported.

Persistent Volume Claim naming

When persistent storage is used, it creates Persistent Volume Claims with the following names:

data-cluster-name-kafka-idx

Persistent Volume Claim for the volume used for storing data for the Kafka broker pod idx.

data-cluster-name-zookeeper-idx

Persistent Volume Claim for the volume used for storing data for the Zookeeper node pod idx.

Log directories

The persistent volume will be used by the Kafka brokers as log directories mounted into the following path:

/var/lib/kafka/data/kafka-log_idx_

Where idx is the Kafka broker pod index. For example, /var/lib/kafka/data/kafka-log0.

Resizing persistent volumes

You can provision increased storage capacity by increasing the size of the persistent volumes used by an existing Strimzi cluster. Resizing persistent volumes is supported in clusters that use either a single persistent volume or multiple persistent volumes in a JBOD storage configuration.

|

Note

|

You can increase but not decrease the size of persistent volumes. Decreasing the size of persistent volumes is not currently supported in OpenShift or Kubernetes. |

-

An OpenShift or Kubernetes cluster with support for volume resizing.

-

The Cluster Operator is running.

-

A Kafka cluster using persistent volumes created using a storage class that supports volume expansion.

-

In a Kafka resource, increase the size of the persistent volume allocated to the Kafka cluster, the Zookeeper cluster, or both.

To increase the volume size allocated to the Kafka cluster, edit the spec.kafka.storage property.

To increase the volume size allocated to the Zookeeper cluster, edit the spec.zookeeper.storage property.

For example, to increase the volume size from 1000Gi to 2000Gi:

apiVersion: kafka.strimzi.io/v1beta1
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
    storage:
      type: persistent-claim
      size: 2000Gi
      class: my-storage-class
    # ...
  zookeeper:
    # ...

-

Create or update the resource.

On Kubernetes, use kubectl apply:

kubectl apply -f your-file

On OpenShift, use oc apply:

oc apply -f your-file

OpenShift or Kubernetes increases the capacity of the selected persistent volumes in response to a request from the Cluster Operator. When the resizing is complete, the Cluster Operator restarts all pods that use the resized persistent volumes. This happens automatically.

For more information about resizing persistent volumes in OpenShift or Kubernetes, see Resizing Persistent Volumes using Kubernetes.

JBOD storage overview

You can configure Strimzi to use JBOD, a data storage configuration of multiple disks or volumes. JBOD is one approach to providing increased data storage for Kafka brokers. It can also improve performance.

A JBOD configuration is described by one or more volumes, each of which can be either ephemeral or persistent. The rules and constraints for JBOD volume declarations are the same as those for ephemeral and persistent storage. For example, you cannot decrease the size of a persistent storage volume after it has been provisioned.

JBOD configuration

To use JBOD with Strimzi, the storage type must be set to jbod. The volumes property allows you to describe the disks that make up your JBOD storage array or configuration. The following fragment shows an example JBOD configuration:

# ...
storage:
  type: jbod
  volumes:
  - id: 0
    type: persistent-claim
    size: 100Gi
    deleteClaim: false
  - id: 1
    type: persistent-claim
    size: 100Gi
    deleteClaim: false
# ...

The ids cannot be changed once the JBOD volumes are created.

Users can add or remove volumes from the JBOD configuration.

JBOD and Persistent Volume Claims

When persistent storage is used to declare JBOD volumes, the naming scheme of the resulting Persistent Volume Claims is as follows:

data-id-cluster-name-kafka-idx

Where id is the ID of the volume used for storing data for Kafka broker pod idx.

Log directories

The JBOD volumes will be used by the Kafka brokers as log directories mounted into the following path:

/var/lib/kafka/data-id/kafka-log_idx_

Where id is the ID of the volume used for storing data for Kafka broker pod idx. For example, /var/lib/kafka/data-0/kafka-log0.

Adding volumes to JBOD storage

This procedure describes how to add volumes to a Kafka cluster configured to use JBOD storage. It cannot be applied to Kafka clusters configured to use any other storage type.

|

Note

|

When adding a new volume under an id which was already used in the past and removed, you have to make sure that the previously used PersistentVolumeClaims have been deleted.

|

-

An OpenShift or Kubernetes cluster

-

A running Cluster Operator

-

A Kafka cluster with JBOD storage

-

Edit the spec.kafka.storage.volumes property in the Kafka resource. Add the new volumes to the volumes array. For example, add the new volume with id 2:

apiVersion: kafka.strimzi.io/v1beta1
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
    storage:
      type: jbod
      volumes:
      - id: 0
        type: persistent-claim
        size: 100Gi
        deleteClaim: false
      - id: 1
        type: persistent-claim
        size: 100Gi
        deleteClaim: false
      - id: 2
        type: persistent-claim
        size: 100Gi
        deleteClaim: false
    # ...
  zookeeper:
    # ...

-

Create or update the resource.

On Kubernetes this can be done using kubectl apply:

kubectl apply -f your-file

On OpenShift this can be done using oc apply:

oc apply -f your-file

-

Create new topics or reassign existing partitions to the new disks.

For more information about reassigning topics, see Partition reassignment.

Removing volumes from JBOD storage

This procedure describes how to remove volumes from a Kafka cluster configured to use JBOD storage. It cannot be applied to Kafka clusters configured to use any other storage type. The JBOD storage always has to contain at least one volume.

|

Important

|

To avoid data loss, you have to move all partitions before removing the volumes. |

-

An OpenShift or Kubernetes cluster

-

A running Cluster Operator

-

A Kafka cluster with JBOD storage with two or more volumes

-

Reassign all partitions from the disks which you are going to remove. Any data in partitions still assigned to the disks which are going to be removed might be lost.

-

Edit the spec.kafka.storage.volumes property in the Kafka resource. Remove one or more volumes from the volumes array. For example, remove the volumes with ids 1 and 2:

apiVersion: kafka.strimzi.io/v1beta1
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
    storage:
      type: jbod
      volumes:
      - id: 0
        type: persistent-claim
        size: 100Gi
        deleteClaim: false
    # ...
  zookeeper:
    # ...

-

Create or update the resource.

On Kubernetes this can be done using kubectl apply:

kubectl apply -f your-file

On OpenShift this can be done using oc apply:

oc apply -f your-file

For more information about reassigning topics, see Partition reassignment.

-

For more information about ephemeral storage, see ephemeral storage schema reference.

-

For more information about persistent storage, see persistent storage schema reference.

-

For more information about JBOD storage, see JBOD schema reference.

-

For more information about the schema for Kafka, see Kafka schema reference.

3.1.3. Kafka broker replicas

A Kafka cluster can run with many brokers.

You can configure the number of brokers used for the Kafka cluster in Kafka.spec.kafka.replicas.

The best number of brokers for your cluster has to be determined based on your specific use case.

Configuring the number of broker nodes

This procedure describes how to configure the number of Kafka broker nodes in a new cluster. It only applies to new clusters with no partitions. If your cluster already has topics defined, see Scaling clusters.

-

An OpenShift or Kubernetes cluster

-

A running Cluster Operator

-

A Kafka cluster with no topics defined yet

-

Edit the replicas property in the Kafka resource. For example:

apiVersion: kafka.strimzi.io/v1beta1
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
    replicas: 3
    # ...
  zookeeper:
    # ...

-

Create or update the resource.

On Kubernetes this can be done using kubectl apply:

kubectl apply -f your-file

On OpenShift this can be done using oc apply:

oc apply -f your-file

If your cluster already has topics defined, see Scaling clusters.

3.1.4. Kafka broker configuration

Strimzi allows you to customize the configuration of the Kafka brokers in your Kafka cluster. You can specify and configure most of the options listed in the "Broker Configs" section of the Apache Kafka documentation. You cannot configure options that are related to the following areas:

-

Security (Encryption, Authentication, and Authorization)

-

Listener configuration

-

Broker ID configuration

-

Configuration of log data directories

-

Inter-broker communication

-

Zookeeper connectivity

These options are automatically configured by Strimzi.

Kafka broker configuration

A Kafka broker can be configured using the config property in Kafka.spec.kafka.

This property should contain the Kafka broker configuration options as keys with values in one of the following JSON types:

-

String

-

Number

-

Boolean

You can specify and configure all of the options in the "Broker Configs" section of the Apache Kafka documentation apart from those managed directly by Strimzi. Specifically, you are prevented from modifying all configuration options with keys equal to or starting with one of the following strings:

- listeners
- advertised.
- broker.
- listener.
- host.name
- port
- inter.broker.listener.name
- sasl.
- ssl.
- security.
- password.
- principal.builder.class
- log.dir
- zookeeper.connect
- zookeeper.set.acl
- authorizer.
- super.user

If the config property specifies a restricted option, it is ignored and a warning message is printed to the Cluster Operator log file.

All other supported options are passed to Kafka.
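To illustrate the filtering described above, the following sketch mixes one permitted option with two restricted ones. The specific values, including the other-zookeeper:2181 address, are assumptions for illustration. Under the rules above, only the first option would be passed to the brokers.

```yaml
# Illustrative sketch only; option values are assumptions.
apiVersion: kafka.strimzi.io/v1beta1
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
    config:
      # Not restricted: passed to the Kafka brokers.
      log.retention.hours: 168
      # Starts with the restricted prefix "ssl.": ignored, with a warning
      # printed to the Cluster Operator log.
      ssl.protocol: TLSv1.2
      # Equal to the restricted key "zookeeper.connect": ignored, with a warning.
      zookeeper.connect: other-zookeeper:2181
    # ...
```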

|

Important

|

The Cluster Operator does not validate keys or values in the provided config object.

If invalid configuration is provided, the Kafka cluster might not start or might become unstable.

In such cases, you must fix the configuration in the Kafka.spec.kafka.config object and the Cluster Operator will roll out the new configuration to all Kafka brokers.

|

apiVersion: kafka.strimzi.io/v1beta1
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
    config:
      num.partitions: 1
      num.recovery.threads.per.data.dir: 1
      default.replication.factor: 3
      offsets.topic.replication.factor: 3
      transaction.state.log.replication.factor: 3
      transaction.state.log.min.isr: 1
      log.retention.hours: 168
      log.segment.bytes: 1073741824
      log.retention.check.interval.ms: 300000
      num.network.threads: 3
      num.io.threads: 8
      socket.send.buffer.bytes: 102400
      socket.receive.buffer.bytes: 102400
      socket.request.max.bytes: 104857600
      group.initial.rebalance.delay.ms: 0
    # ...

Configuring Kafka brokers

You can configure an existing Kafka broker, or create a new Kafka broker with a specified configuration.

-

An OpenShift or Kubernetes cluster is available.

-

The Cluster Operator is running.

-

Open the YAML configuration file that contains the Kafka resource specifying the cluster deployment.

-

In the spec.kafka.config property in the Kafka resource, enter one or more Kafka configuration settings. For example:

apiVersion: kafka.strimzi.io/v1beta1
kind: Kafka
spec:
  kafka:
    # ...
    config:
      default.replication.factor: 3
      offsets.topic.replication.factor: 3
      transaction.state.log.replication.factor: 3
      transaction.state.log.min.isr: 1
    # ...
  zookeeper:
    # ...

-

Apply the new configuration to create or update the resource.

On Kubernetes, use kubectl apply:

kubectl apply -f kafka.yaml

On OpenShift, use oc apply:

oc apply -f kafka.yaml

where kafka.yaml is the YAML configuration file for the resource that you want to configure; for example, kafka-persistent.yaml.

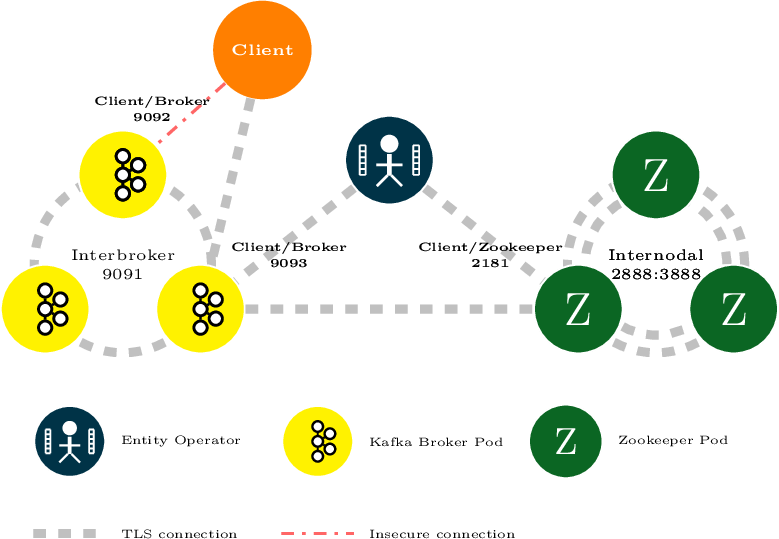

3.1.5. Kafka broker listeners

Strimzi allows users to configure the listeners which will be enabled in Kafka brokers. Three types of listener are supported:

-

Plain listener on port 9092 (without encryption)

-

TLS listener on port 9093 (with encryption)

-

External listener on port 9094 for access from outside of OpenShift or Kubernetes

Mutual TLS authentication for clients

Mutual TLS authentication

Mutual TLS authentication is always used for the communication between Kafka brokers and Zookeeper pods.

Mutual authentication or two-way authentication is when both the server and the client present certificates. Strimzi can configure Kafka to use TLS (Transport Layer Security) to provide encrypted communication between Kafka brokers and clients either with or without mutual authentication. When you configure mutual authentication, the broker authenticates the client and the client authenticates the broker.

|

Note

|

TLS authentication is more commonly one-way, with one party authenticating the identity of another. For example, when HTTPS is used between a web browser and a web server, the browser obtains proof of the identity of the web server. |

When to use mutual TLS authentication for clients

Mutual TLS authentication is recommended for authenticating Kafka clients when:

-

The client supports authentication using mutual TLS authentication

-

It is necessary to use the TLS certificates rather than passwords

-

You can reconfigure and restart client applications periodically so that they do not use expired certificates.
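As a minimal sketch, mutual TLS authentication for clients might be enabled on the TLS listener with a fragment like the following in Kafka.spec.kafka. The choice of the tls listener here is an assumption for illustration. The authentication property can be set in the same way on other listeners that use TLS encryption.

```yaml
# Illustrative fragment of Kafka.spec.kafka; the listener choice is an assumption.
# ...
listeners:
  tls:
    authentication:
      type: tls     # clients must present a certificate, typically one issued for a KafkaUser
# ...
```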

SCRAM-SHA authentication

SCRAM (Salted Challenge Response Authentication Mechanism) is an authentication protocol that can establish mutual authentication using passwords. Strimzi can configure Kafka to use SASL (Simple Authentication and Security Layer) SCRAM-SHA-512 to provide authentication on both unencrypted and TLS-encrypted client connections. TLS authentication is always used internally between Kafka brokers and Zookeeper nodes. When used with a TLS client connection, the TLS protocol provides encryption, but is not used for authentication.

The following properties of SCRAM make it safe to use SCRAM-SHA even on unencrypted connections:

-

The passwords are not sent in the clear over the communication channel. Instead the client and the server are each challenged by the other to offer proof that they know the password of the authenticating user.

-

The server and client each generate a new challenge for each authentication exchange. This means that the exchange is resilient against replay attacks.

Supported SCRAM credentials

Strimzi supports SCRAM-SHA-512 only.

When KafkaUser.spec.authentication.type is set to scram-sha-512, the User Operator generates a random 12-character password consisting of uppercase and lowercase ASCII letters and numbers.

When to use SCRAM-SHA authentication for clients

SCRAM-SHA is recommended for authenticating Kafka clients when:

-

The client supports authentication using SCRAM-SHA-512

-

It is necessary to use passwords rather than the TLS certificates

-

Authentication for unencrypted communication is required
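The following sketch shows how SCRAM-SHA-512 might be enabled on the unencrypted plain listener, together with a matching KafkaUser. The user name and cluster label are assumptions for illustration. The first document is a fragment of Kafka.spec.kafka, not a complete resource.

```yaml
# Illustrative sketch only; names below are assumptions.
# Fragment of Kafka.spec.kafka: require SCRAM-SHA-512 on the plain listener.
listeners:
  plain:
    authentication:
      type: scram-sha-512
---
# A user whose password is generated by the User Operator.
apiVersion: kafka.strimzi.io/v1beta1
kind: KafkaUser
metadata:
  name: my-user                         # hypothetical user name
  labels:
    strimzi.io/cluster: my-cluster      # hypothetical name of the Kafka cluster
spec:
  authentication:
    type: scram-sha-512
```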

Kafka listeners

You can configure Kafka broker listeners using the listeners property in the Kafka.spec.kafka resource.

The listeners property contains three sub-properties:

-

plain

-

tls

-

external

Each listener is enabled only when its property is defined. When a property is not defined, the corresponding listener is disabled.

Example of the listeners property with all listeners enabled:

# ...
listeners:
  plain: {}
  tls: {}
  external:
    type: loadbalancer
# ...

Example of the listeners property with only the plain listener enabled:

# ...
listeners:
  plain: {}
# ...

External listener

The external listener is used to connect to a Kafka cluster from outside of an OpenShift or Kubernetes environment. Strimzi supports four types of external listeners:

-

route

-

loadbalancer

-

nodeport

-

ingress

Routes

An external listener of type route exposes Kafka by using OpenShift Routes and the HAProxy router.

A dedicated Route is created for every Kafka broker pod.

An additional Route is created to serve as a Kafka bootstrap address.

Kafka clients can use these Routes to connect to Kafka on port 443.

|

Note

|

Routes are available only on OpenShift. External listeners of type route cannot be used on Kubernetes.

|

When exposing Kafka using OpenShift Routes, TLS encryption is always used.

By default, the route hosts are automatically assigned by OpenShift.

However, you can override the assigned route hosts by specifying the requested hosts in the overrides property.

Strimzi will not perform any validation that the requested hosts are available; you must ensure that they are free and can be used.

Example of an external listener of type route configured with overrides for OpenShift route hosts:

# ...
listeners:
  external:
    type: route
    authentication:
      type: tls
    overrides:
      bootstrap:
        host: bootstrap.myrouter.com
      brokers:
      - broker: 0
        host: broker-0.myrouter.com
      - broker: 1
        host: broker-1.myrouter.com
      - broker: 2
        host: broker-2.myrouter.com
# ...

For more information on using Routes to access Kafka, see Accessing Kafka using OpenShift routes.

Loadbalancers

External listeners of type loadbalancer expose Kafka by using Loadbalancer type Services.

A new loadbalancer service is created for every Kafka broker pod.

An additional loadbalancer is created to serve as a Kafka bootstrap address.

Loadbalancers listen to connections on port 9094.

By default, TLS encryption is enabled.

To disable it, set the tls field to false.

For more information on using loadbalancers to access Kafka, see Accessing Kafka using loadbalancers.
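As a minimal sketch, a loadbalancer listener with TLS encryption disabled, as described above, might be declared like this in Kafka.spec.kafka:

```yaml
# Illustrative fragment of Kafka.spec.kafka.
# ...
listeners:
  external:
    type: loadbalancer
    tls: false      # disables the default TLS encryption; clients connect on port 9094 unencrypted
# ...
```

Disabling encryption on an externally reachable listener exposes traffic in the clear, so this setting is usually appropriate only for development or for networks that are protected by other means.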

Node ports

External listeners of type nodeport expose Kafka by using NodePort type Services.

When exposing Kafka in this way, Kafka clients connect directly to the nodes of OpenShift or Kubernetes.

You must enable access to the ports on the OpenShift or Kubernetes nodes for each client (for example, in firewalls or security groups).

Each Kafka broker pod is then accessible on a separate port.

An additional NodePort type Service is created to serve as a Kafka bootstrap address.

When configuring the advertised addresses for the Kafka broker pods, Strimzi uses the address of the node on which the given pod is running. When selecting the node address, the different address types are used with the following priority:

-

ExternalDNS

-

ExternalIP

-

Hostname

-

InternalDNS

-

InternalIP
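To illustrate the priority order, consider the following excerpt from the status of a Kubernetes Node resource. The addresses are hypothetical values chosen for this sketch. Because the node reports no ExternalDNS address, the ExternalIP address (203.0.113.12) would be selected, since it ranks above Hostname and InternalIP.

```yaml
# Excerpt of a Node resource, for example from `kubectl get node <node-name> -o yaml`
# All addresses below are hypothetical.
status:
  addresses:
    - type: InternalIP
      address: 10.0.0.12
    - type: Hostname
      address: node-1.example.internal
    - type: ExternalIP      # highest-priority type present, so this one is used
      address: 203.0.113.12
```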

By default, TLS encryption is enabled.

To disable it, set the tls field to false.

|

Note

|

TLS hostname verification is not currently supported when exposing Kafka clusters using node ports. |

By default, the port numbers used for the bootstrap and broker services are automatically assigned by OpenShift or Kubernetes.

However, you can override the assigned node ports by specifying the requested port numbers in the overrides property.

Strimzi does not perform any validation on the requested ports; you must ensure that they are free and available for use.

# ...
listeners:
  external:
    type: nodeport
    tls: true
    authentication:
      type: tls
    overrides:
      bootstrap:
        nodePort: 32100
      brokers:
        - broker: 0
          nodePort: 32000
        - broker: 1
          nodePort: 32001
        - broker: 2
          nodePort: 32002
# ...

For more information on using node ports to access Kafka, see Accessing Kafka using node ports.

Ingress

An external listener of type ingress exposes Kafka by using Kubernetes Ingress and the NGINX Ingress Controller for Kubernetes.

A dedicated Ingress resource is created for every Kafka broker pod.

An additional Ingress resource is created to serve as a Kafka bootstrap address.

Kafka clients can use these Ingress resources to connect to Kafka on port 443.

|

Note

|

External listeners using Ingress have currently been tested only with the NGINX Ingress Controller for Kubernetes.

|

Strimzi uses the TLS passthrough feature of the NGINX Ingress Controller for Kubernetes.

Make sure TLS passthrough is enabled in your NGINX Ingress Controller for Kubernetes deployment.

For more information about enabling TLS passthrough, see the TLS passthrough documentation.
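As a rough sketch, TLS passthrough in the NGINX Ingress Controller for Kubernetes is enabled by starting the controller with the --enable-ssl-passthrough flag. The Deployment excerpt below shows where that argument would go. The container name and the surrounding structure are assumptions about a typical installation, so adapt them to how your controller is actually deployed.

```yaml
# Excerpt of an NGINX Ingress Controller Deployment (hypothetical names)
spec:
  template:
    spec:
      containers:
        - name: nginx-ingress-controller   # assumed container name
          args:
            - /nginx-ingress-controller
            # Enables TLS (SSL) passthrough
            - --enable-ssl-passthrough
            # ... other existing arguments stay unchanged
```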

Because Strimzi relies on TLS passthrough, TLS encryption cannot be disabled when exposing Kafka using Ingress.

The Ingress controller does not assign any hostnames automatically.

You have to specify the hostnames which should be used by the bootstrap and per-broker services in the spec.kafka.listeners.external.configuration section.

You also have to make sure that the hostnames resolve to the Ingress endpoints.

Strimzi does not validate that the requested hosts are available and properly routed to the Ingress endpoints.

Example of an external listener of type ingress:

# ...
listeners:
  external:
    type: ingress
    authentication:
      type: tls
    configuration:
      bootstrap:
        host: bootstrap.myingress.com
      brokers:
        - broker: 0
          host: broker-0.myingress.com
        - broker: 1
          host: broker-1.myingress.com
        - broker: 2
          host: broker-2.myingress.com
# ...

For more information on using Ingress to access Kafka, see Accessing Kafka using Kubernetes ingress.

By default, Strimzi tries to automatically determine the hostnames and ports that your Kafka cluster advertises to its clients.

This is not sufficient in all situations, because the infrastructure on which Strimzi is running might not provide the right hostname or port through which Kafka can be accessed.

You can customize the advertised hostname and port in the overrides property of the external listener.

Strimzi will then automatically configure the advertised address in the Kafka brokers and add it to the broker certificates so it can be used for TLS hostname verification.

Overriding the advertised host and ports is available for all types of external listeners.

# ...
listeners:
  external:
    type: route
    authentication:
      type: tls
    overrides:
      brokers:
        - broker: 0
          advertisedHost: example.hostname.0
          advertisedPort: 12340
        - broker: 1
          advertisedHost: example.hostname.1
          advertisedPort: 12341
        - broker: 2
          advertisedHost: example.hostname.2
          advertisedPort: 12342
# ...

Additionally, you can specify the name of the bootstrap service. This name will be added to the broker certificates and can be used for TLS hostname verification. Adding the additional bootstrap address is available for all types of external listeners.

# ...
listeners:
  external:
    type: route
    authentication:
      type: tls
    overrides:
      bootstrap:
        address: example.hostname
# ...

On loadbalancer and ingress listeners, you can use the dnsAnnotations property to add additional annotations to the ingress resources or load balancer services.

You can use these annotations to instrument DNS tooling such as External DNS, which automatically assigns DNS names to the ingress resources or services.

Example of an external listener of type loadbalancer using External DNS (https://github.com/kubernetes-incubator/external-dns) annotations:

# ...
listeners:
  external:
    type: loadbalancer
    authentication:
      type: tls
    overrides:
      bootstrap:
        dnsAnnotations:
          external-dns.alpha.kubernetes.io/hostname: kafka-bootstrap.mydomain.com.
          external-dns.alpha.kubernetes.io/ttl: "60"
      brokers:
        - broker: 0
          dnsAnnotations:
            external-dns.alpha.kubernetes.io/hostname: kafka-broker-0.mydomain.com.
            external-dns.alpha.kubernetes.io/ttl: "60"
        - broker: 1
          dnsAnnotations:
            external-dns.alpha.kubernetes.io/hostname: kafka-broker-1.mydomain.com.
            external-dns.alpha.kubernetes.io/ttl: "60"
        - broker: 2
          dnsAnnotations:
            external-dns.alpha.kubernetes.io/hostname: kafka-broker-2.mydomain.com.
            external-dns.alpha.kubernetes.io/ttl: "60"
# ...

Example of an external listener of type ingress using External DNS (https://github.com/kubernetes-incubator/external-dns) annotations:

# ...
listeners:
  external:
    type: ingress
    authentication:
      type: tls
    configuration:
      bootstrap:
        dnsAnnotations:
          external-dns.alpha.kubernetes.io/hostname: bootstrap.myingress.com.
          external-dns.alpha.kubernetes.io/ttl: "60"
        host: bootstrap.myingress.com
      brokers:
        - broker: 0
          dnsAnnotations:
            external-dns.alpha.kubernetes.io/hostname: broker-0.myingress.com.
            external-dns.alpha.kubernetes.io/ttl: "60"
          host: broker-0.myingress.com
        - broker: 1
          dnsAnnotations:
            external-dns.alpha.kubernetes.io/hostname: broker-1.myingress.com.
            external-dns.alpha.kubernetes.io/ttl: "60"
          host: broker-1.myingress.com
        - broker: 2
          dnsAnnotations:
            external-dns.alpha.kubernetes.io/hostname: broker-2.myingress.com.
            external-dns.alpha.kubernetes.io/ttl: "60"
          host: broker-2.myingress.com
# ...

Listener authentication

The listener sub-properties can also contain additional configuration.

Each listener supports the authentication property. This is used to specify an authentication mechanism specific to that listener:

-

mutual TLS authentication (only on the listeners with TLS encryption)

-

SCRAM-SHA authentication

If no authentication property is specified, the listener does not authenticate clients that connect through it.

Example of listeners configured with authentication, where the plain listener uses SCRAM-SHA-512 authentication and the tls and external listeners use mutual TLS authentication:

# ...
listeners:
  plain:
    authentication:
      type: scram-sha-512
  tls:
    authentication:
      type: tls
  external:
    type: loadbalancer
    tls: true
    authentication:
      type: tls
# ...

Authentication must be configured when using the User Operator to manage KafkaUsers.
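For illustration, a KafkaUser that authenticates with mutual TLS against a listener configured with authentication of type tls might look like the following sketch. The user name my-user and the cluster name my-cluster are hypothetical, and the apiVersion is assumed to match the one used for the Kafka resource in this guide. Refer to the User Operator documentation for the authoritative schema.

```yaml
apiVersion: kafka.strimzi.io/v1beta1   # assumed to match the Kafka resource version
kind: KafkaUser
metadata:
  name: my-user                        # hypothetical user name
  labels:
    strimzi.io/cluster: my-cluster     # hypothetical name of the Kafka cluster
spec:
  authentication:
    type: tls                          # matches a listener with authentication type tls
```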

Network policies

Strimzi automatically creates a NetworkPolicy resource for every listener that is enabled on a Kafka broker.

By default, a NetworkPolicy grants access to a listener to all applications and namespaces.

If you want to restrict access to a listener to only selected applications or namespaces, use the networkPolicyPeers field.

Each listener can have a different networkPolicyPeers configuration.

The following example shows a networkPolicyPeers configuration for a plain and a tls listener:

# ...
listeners:
  plain:
    authentication:
      type: scram-sha-512
    networkPolicyPeers:
      - podSelector:
          matchLabels:
            app: kafka-sasl-consumer
      - podSelector:
          matchLabels:
            app: kafka-sasl-producer
  tls:
    authentication:
      type: tls
    networkPolicyPeers:
      - namespaceSelector:
          matchLabels:
            project: myproject
      - namespaceSelector:
          matchLabels:
            project: myproject2
# ...

In the above example:

-

Only application pods matching the label app: kafka-sasl-consumer or app: kafka-sasl-producer can connect to the plain listener. The application pods must be running in the same namespace as the Kafka broker.

-

Only application pods running in namespaces matching the label project: myproject or project: myproject2 can connect to the tls listener.

The syntax of the networkPolicyPeers field is the same as the from field in the NetworkPolicy resource in Kubernetes.

For more information about the schema, see NetworkPolicyPeer API reference and the KafkaListeners schema reference.
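To make the correspondence concrete, the following sketch shows a plain Kubernetes NetworkPolicy whose from field uses the same peers as the plain listener in the example above. This is not the resource that Strimzi generates. The policy name and the app: my-kafka pod label are hypothetical and only illustrate where the peers appear.

```yaml
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: example-kafka-plain-clients    # hypothetical name
spec:
  podSelector:
    matchLabels:
      app: my-kafka                    # hypothetical label selecting the broker pods
  policyTypes:
    - Ingress
  ingress:
    - from:
        # Same syntax as the entries under networkPolicyPeers
        - podSelector:
            matchLabels:
              app: kafka-sasl-consumer
        - podSelector:
            matchLabels:
              app: kafka-sasl-producer
```

Entries in the from list are alternatives: traffic is allowed if it matches any one of them.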

|

Note

|

Your configuration of OpenShift or Kubernetes must support Ingress NetworkPolicies in order to use network policies in Strimzi. |

Configuring Kafka listeners

-

An OpenShift or Kubernetes cluster

-

A running Cluster Operator

-

Edit the listeners property in the Kafka.spec.kafka resource.

An example configuration of the plain (unencrypted) listener without authentication:

apiVersion: kafka.strimzi.io/v1beta1
kind: Kafka
spec:
  kafka:
    # ...
    listeners:
      plain: {}
    # ...
  zookeeper:
    # ...

-

Create or update the resource.

On Kubernetes this can be done using kubectl apply:

kubectl apply -f your-file

On OpenShift this can be done using oc apply:

oc apply -f your-file

-