1. Overview of Strimzi

Strimzi simplifies the process of running Apache Kafka in a Kubernetes cluster.

This guide provides instructions for configuring Kafka components and using Strimzi Operators. Procedures relate to how you might want to modify your deployment and introduce additional features, such as Cruise Control or distributed tracing.

You can configure your deployment using Strimzi custom resources. The Custom resource API reference describes the properties you can use in your configuration.

Note: Looking to get started with Strimzi? For step-by-step deployment instructions, see the Deploying and Upgrading Strimzi guide.

1.1. Kafka capabilities

The underlying data stream-processing capabilities and component architecture of Kafka can deliver:

- Microservices and other applications to share data with extremely high throughput and low latency
- Message ordering guarantees
- Message rewind/replay from data storage to reconstruct an application state
- Message compaction to remove old records when using a key-value log
- Horizontal scalability in a cluster configuration
- Replication of data to control fault tolerance
- Retention of high volumes of data for immediate access

1.2. Kafka use cases

Kafka’s capabilities make it suitable for:

- Event-driven architectures
- Event sourcing to capture changes to the state of an application as a log of events
- Message brokering
- Website activity tracking
- Operational monitoring through metrics
- Log collection and aggregation
- Commit logs for distributed systems
- Stream processing so that applications can respond to data in real time

1.3. How Strimzi supports Kafka

Strimzi provides container images and Operators for running Kafka on Kubernetes. Strimzi Operators are fundamental to the running of Strimzi. The Operators provided with Strimzi are purpose-built with specialist operational knowledge to effectively manage Kafka.

Operators simplify the process of:

- Deploying and running Kafka clusters
- Deploying and running Kafka components
- Configuring access to Kafka
- Securing access to Kafka
- Upgrading Kafka
- Managing brokers
- Creating and managing topics
- Creating and managing users

1.4. Strimzi Operators

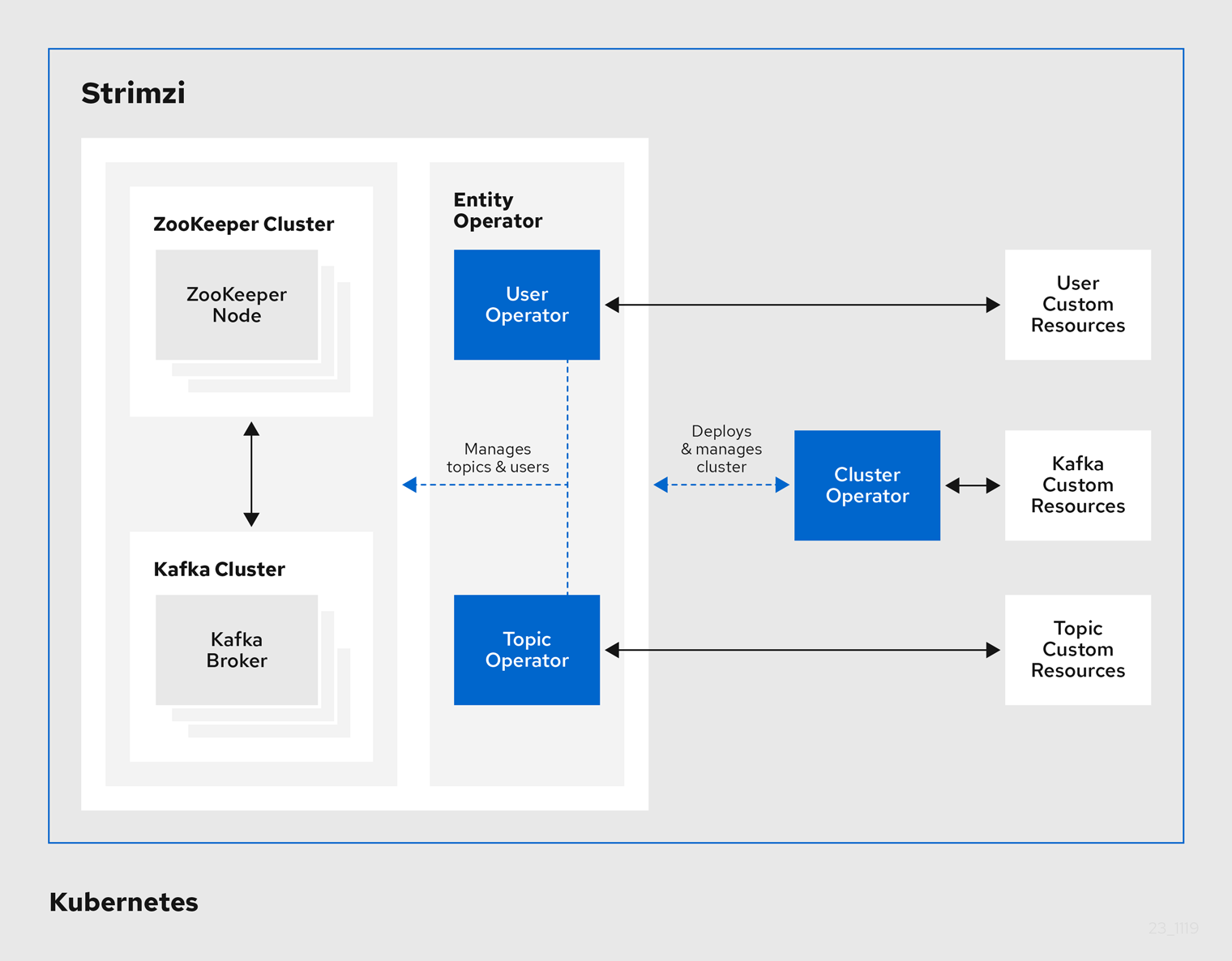

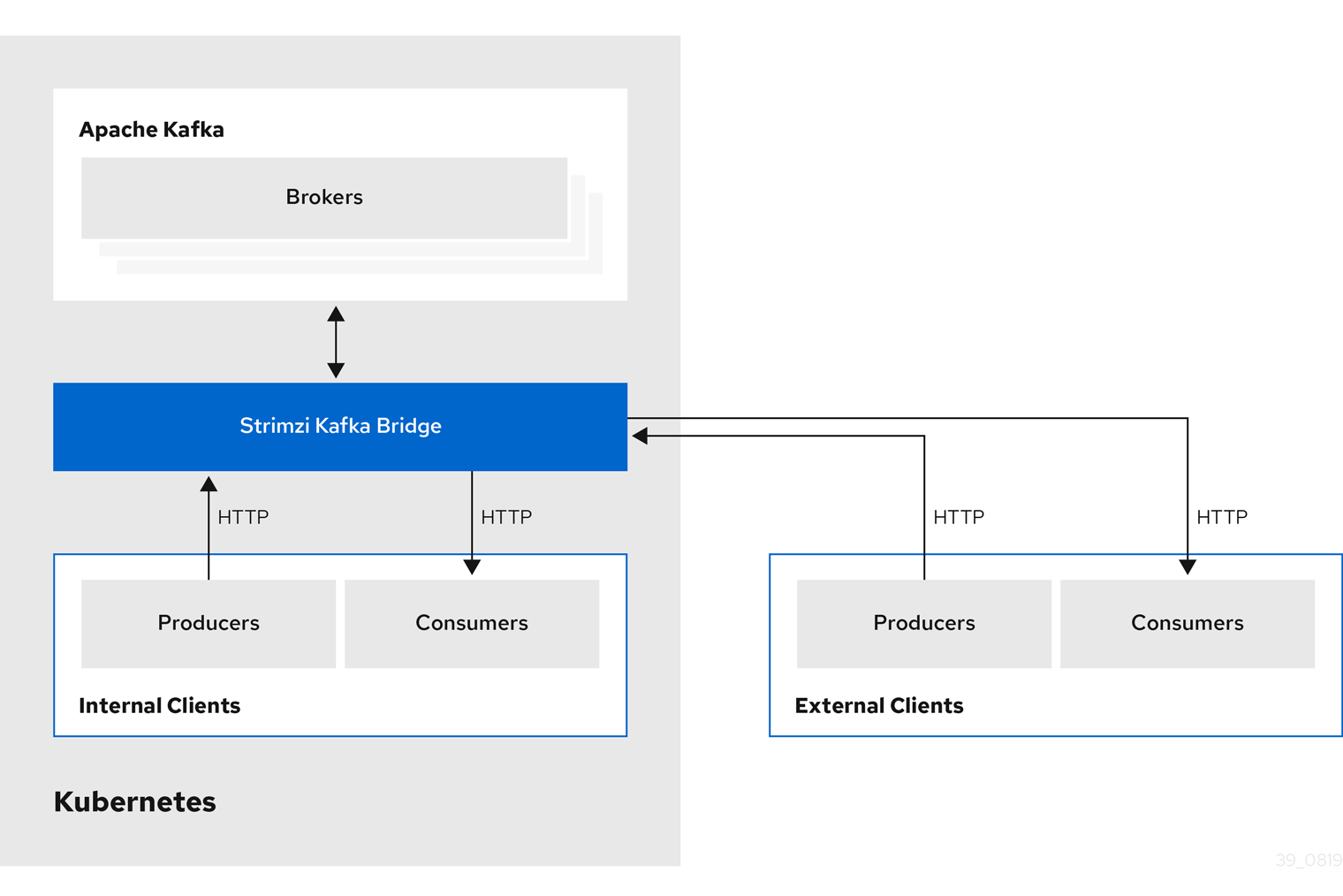

Strimzi supports Kafka using Operators to deploy and manage the components and dependencies of Kafka to Kubernetes.

Operators are a method of packaging, deploying, and managing a Kubernetes application. Strimzi Operators extend Kubernetes functionality, automating common and complex tasks related to a Kafka deployment. By implementing knowledge of Kafka operations in code, Kafka administration tasks are simplified and require less manual intervention.

Operators

Strimzi provides Operators for managing a Kafka cluster running within a Kubernetes cluster.

- Cluster Operator

-

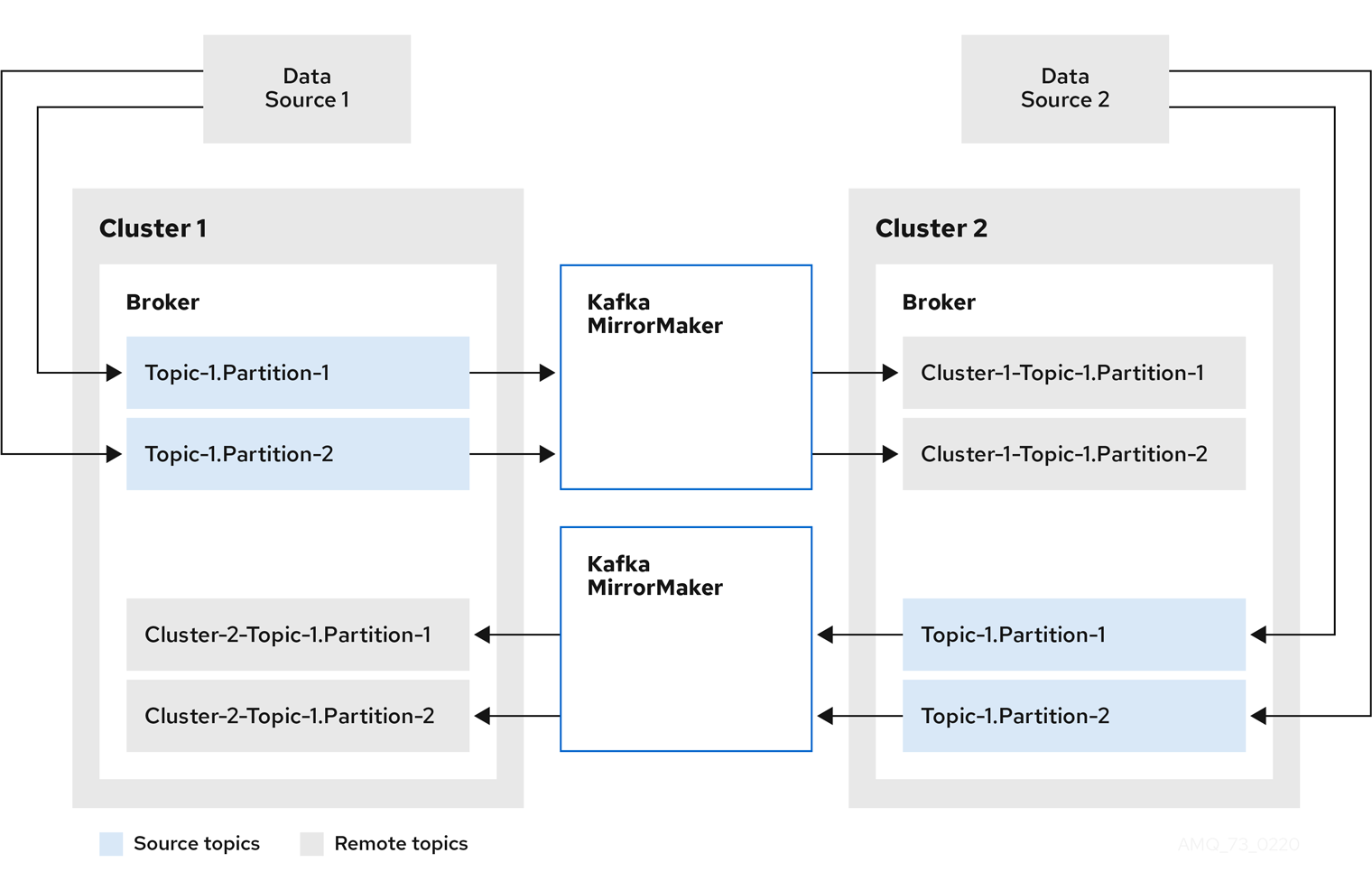

Deploys and manages Apache Kafka clusters, Kafka Connect, Kafka MirrorMaker, Kafka Bridge, Kafka Exporter, and the Entity Operator

- Entity Operator

-

Comprises the Topic Operator and User Operator

- Topic Operator

-

Manages Kafka topics

- User Operator

-

Manages Kafka users

The Cluster Operator can deploy the Topic Operator and User Operator as part of an Entity Operator configuration at the same time as a Kafka cluster.

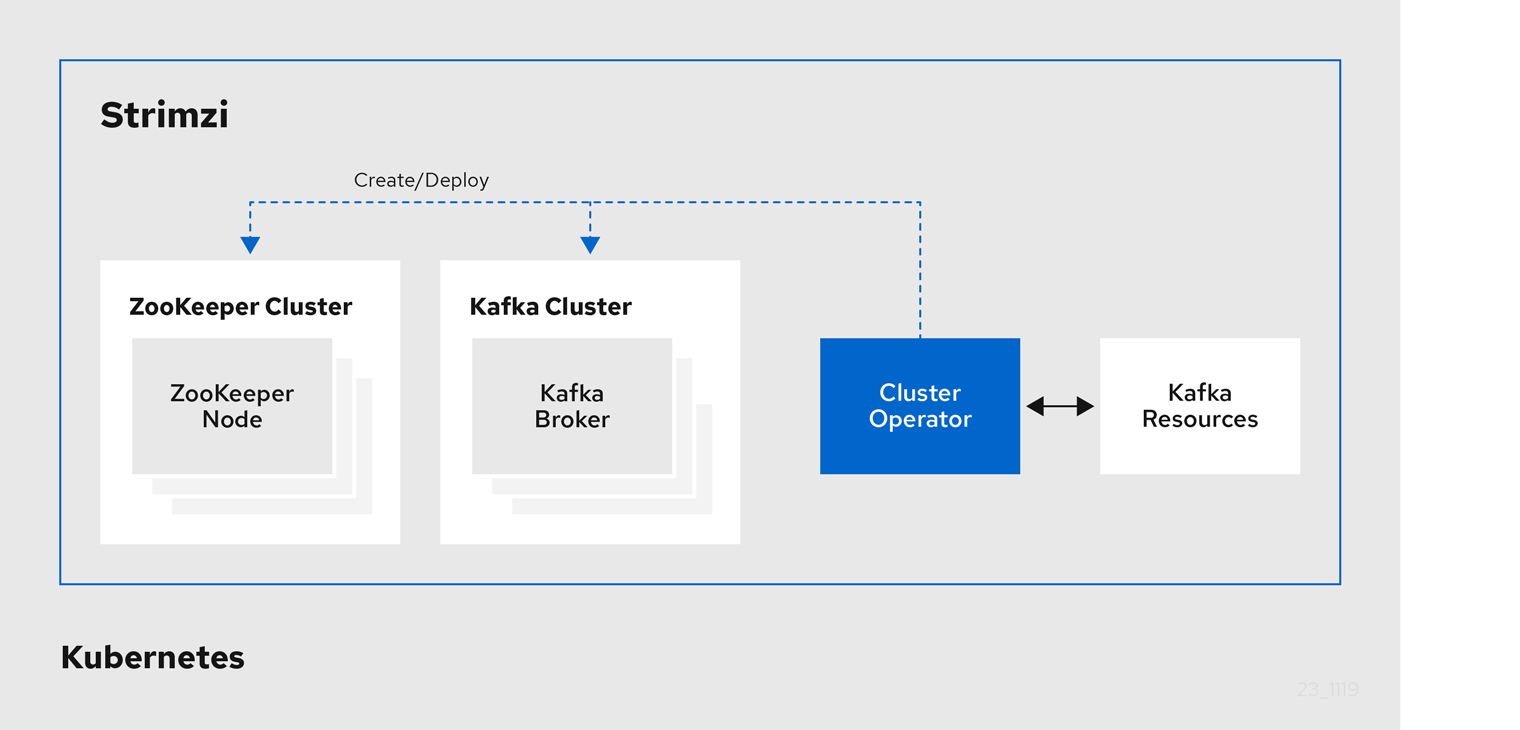

1.4.1. Cluster Operator

Strimzi uses the Cluster Operator to deploy and manage clusters for:

-

Kafka (including ZooKeeper, Entity Operator, Kafka Exporter, and Cruise Control)

-

Kafka Connect

-

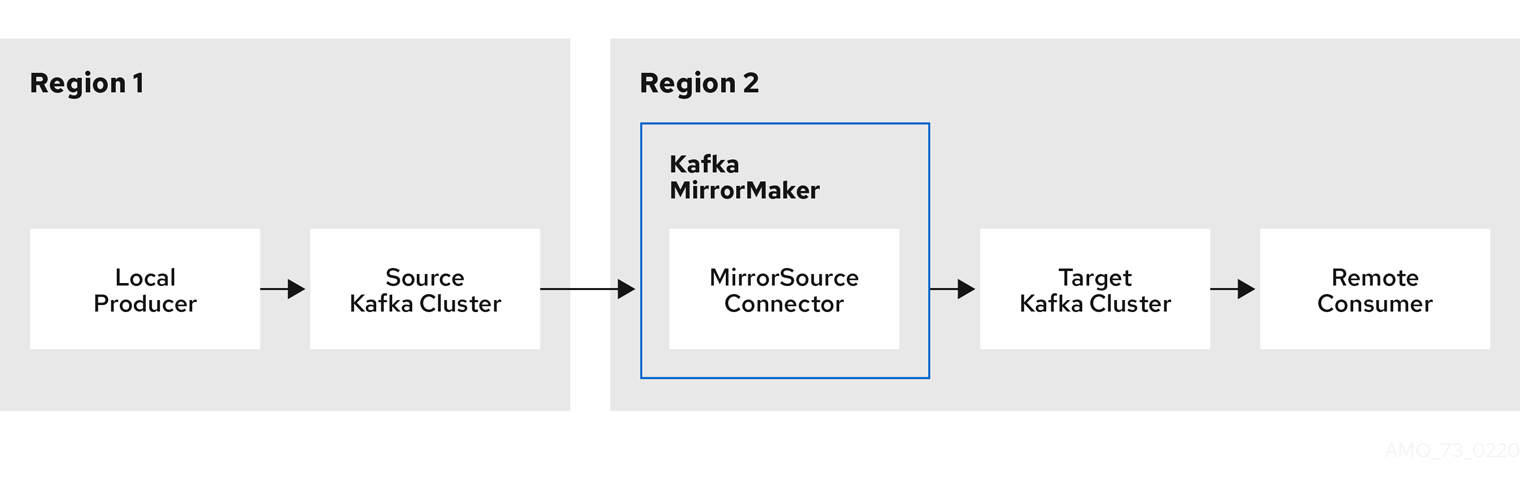

Kafka MirrorMaker

-

Kafka Bridge

Custom resources are used to deploy the clusters.

For example, to deploy a Kafka cluster:

- A `Kafka` resource with the cluster configuration is created within the Kubernetes cluster.
- The Cluster Operator deploys a corresponding Kafka cluster, based on what is declared in the `Kafka` resource.

The Cluster Operator can also deploy (through configuration of the Kafka resource):

- A Topic Operator to provide operator-style topic management through `KafkaTopic` custom resources
- A User Operator to provide operator-style user management through `KafkaUser` custom resources

The Topic Operator and User Operator function within the Entity Operator on deployment.

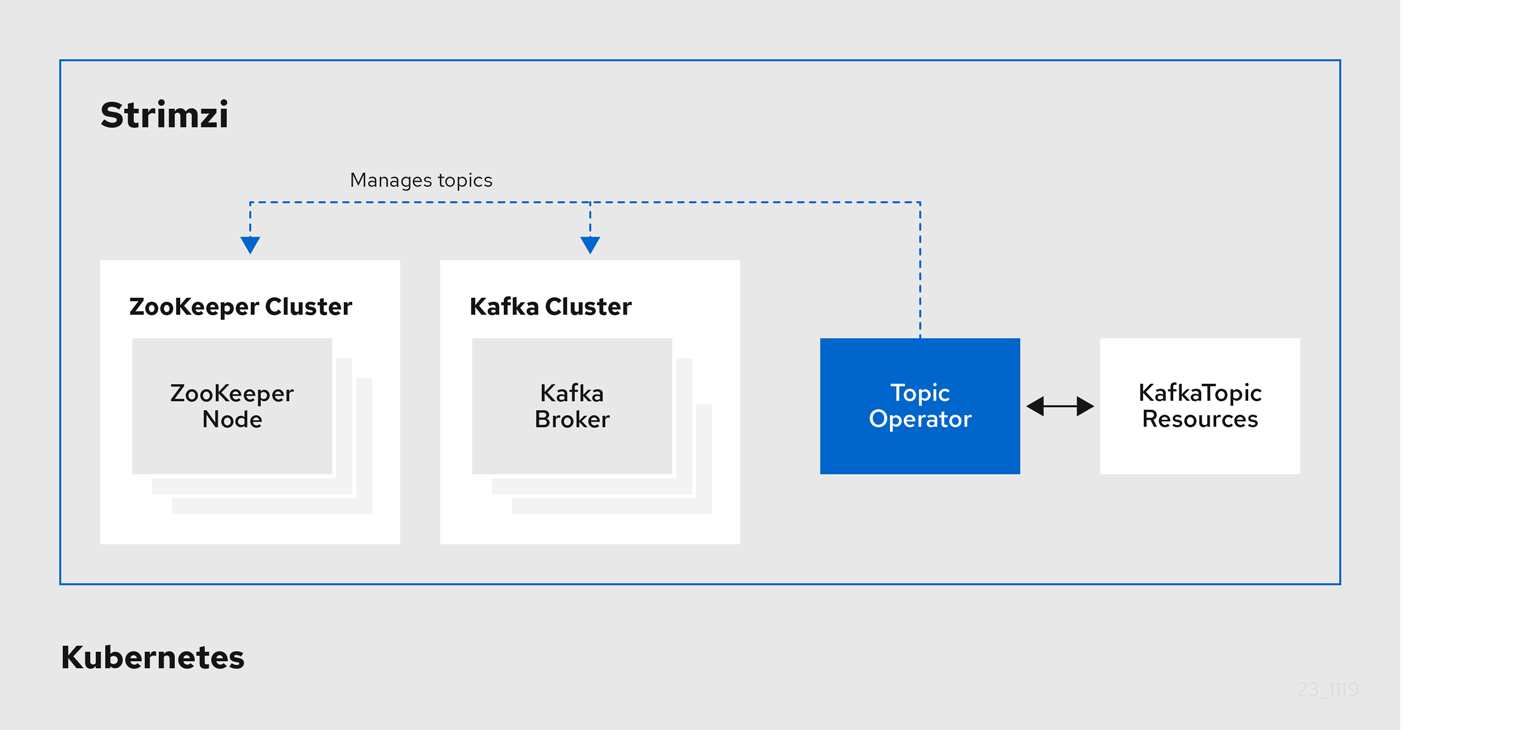

1.4.2. Topic Operator

The Topic Operator provides a way of managing topics in a Kafka cluster through Kubernetes resources.

The role of the Topic Operator is to keep a set of KafkaTopic Kubernetes resources describing Kafka topics in-sync with corresponding Kafka topics.

Specifically, if a KafkaTopic is:

- Created, the Topic Operator creates the topic
- Deleted, the Topic Operator deletes the topic
- Changed, the Topic Operator updates the topic

Working in the other direction, if a topic is:

- Created within the Kafka cluster, the Operator creates a `KafkaTopic`
- Deleted from the Kafka cluster, the Operator deletes the `KafkaTopic`
- Changed in the Kafka cluster, the Operator updates the `KafkaTopic`

This allows you to declare a KafkaTopic as part of your application’s deployment and the Topic Operator will take care of creating the topic for you.

Your application just needs to deal with producing or consuming from the necessary topics.

The Topic Operator maintains information about each topic in a topic store, which is continually synchronized with updates from Kafka topics or Kubernetes KafkaTopic custom resources.

Updates from operations applied to a local in-memory topic store are persisted to a backup topic store on disk.

If a topic is reconfigured or reassigned to other brokers, the KafkaTopic will always be up to date.

1.4.3. User Operator

The User Operator manages Kafka users for a Kafka cluster by watching for KafkaUser resources that describe Kafka users, and ensuring that they are configured properly in the Kafka cluster.

For example, if a KafkaUser is:

- Created, the User Operator creates the user it describes
- Deleted, the User Operator deletes the user it describes
- Changed, the User Operator updates the user it describes

Unlike the Topic Operator, the User Operator does not sync any changes from the Kafka cluster with the Kubernetes resources. Kafka topics can be created by applications directly in Kafka, but it is not expected that the users will be managed directly in the Kafka cluster in parallel with the User Operator.

The User Operator allows you to declare a KafkaUser resource as part of your application’s deployment.

You can specify the authentication and authorization mechanism for the user.

You can also configure user quotas that control usage of Kafka resources to ensure, for example, that a user does not monopolize access to a broker.

When the user is created, the user credentials are created in a Secret.

Your application needs to use the user and its credentials for authentication and to produce or consume messages.

In addition to managing credentials for authentication, the User Operator also manages authorization rules by including a description of the user’s access rights in the KafkaUser declaration.
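As a rough sketch of how authentication, authorization rules, and quotas can be declared together, a `KafkaUser` resource might look like the following. The user name `my-user`, the cluster name `my-cluster`, the topic name `my-topic`, and all quota values are illustrative assumptions rather than recommendations; check the `KafkaUser` schema reference for the properties supported by your Strimzi version.

```yaml
apiVersion: kafka.strimzi.io/v1beta2
kind: KafkaUser
metadata:
  name: my-user                      # assumed user name
  labels:
    strimzi.io/cluster: my-cluster   # name of the Kafka resource the user belongs to
spec:
  authentication:
    type: tls                        # mutual TLS; credentials are stored in a Secret
  authorization:
    type: simple
    acls:
      # Allow the user to read from one topic (assumed topic name)
      - resource:
          type: topic
          name: my-topic
          patternType: literal
        operation: Read
        host: "*"
  quotas:
    # Illustrative limits so that one user cannot monopolize a broker
    producerByteRate: 1048576
    consumerByteRate: 2097152
    requestPercentage: 55
```

Because the User Operator does not sync changes back from Kafka, a declaration like this is intended to be the single place where the user is managed.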

1.5. Strimzi custom resources

A deployment of Kafka components to a Kubernetes cluster using Strimzi is highly configurable through the application of custom resources. Custom resources are created as instances of APIs added by Custom resource definitions (CRDs) to extend Kubernetes resources.

CRDs act as configuration instructions to describe the custom resources in a Kubernetes cluster, and are provided with Strimzi for each Kafka component used in a deployment, as well as users and topics. CRDs and custom resources are defined as YAML files. Example YAML files are provided with the Strimzi distribution.

CRDs also allow Strimzi resources to benefit from native Kubernetes features like CLI accessibility and configuration validation.

1.5.1. Strimzi custom resource example

CRDs require a one-time installation in a cluster to define the schemas used to instantiate and manage Strimzi-specific resources.

After a new custom resource type is added to your cluster by installing a CRD, you can create instances of the resource based on its specification.

Depending on the cluster setup, installation typically requires cluster admin privileges.

Note: Access to manage custom resources is limited to Strimzi administrators. For more information, see Designating Strimzi administrators in the Deploying and Upgrading Strimzi guide.

A CRD defines a new kind of resource, such as `kind: Kafka`, within a Kubernetes cluster.

The Kubernetes API server allows custom resources to be created based on the kind and understands from the CRD how to validate and store the custom resource when it is added to the Kubernetes cluster.

Warning: When CRDs are deleted, custom resources of that type are also deleted. Additionally, the resources created by the custom resource, such as pods and statefulsets, are also deleted.

Each Strimzi-specific custom resource conforms to the schema defined by the CRD for the resource’s kind.

The custom resources for Strimzi components have common configuration properties, which are defined under spec.

To understand the relationship between a CRD and a custom resource, let’s look at a sample of the CRD for a Kafka topic.

    apiVersion: apiextensions.k8s.io/v1beta1
    kind: CustomResourceDefinition
    metadata: # (1)
      name: kafkatopics.kafka.strimzi.io
      labels:
        app: strimzi
    spec: # (2)
      group: kafka.strimzi.io
      versions:
        - name: v1beta2
          # ...
      scope: Namespaced
      names:
        # ...
        singular: kafkatopic
        plural: kafkatopics
        shortNames:
          - kt # (3)
      additionalPrinterColumns: # (4)
        # ...
      subresources:
        status: {} # (5)
      validation: # (6)
        openAPIV3Schema:
          properties:
            spec:
              type: object
              properties:
                partitions:
                  type: integer
                  minimum: 1
                replicas:
                  type: integer
                  minimum: 1
                  maximum: 32767
          # ...

(1) The metadata for the topic CRD, its name and a label to identify the CRD.

(2) The specification for this CRD, including the group (domain) name, the plural name and the supported schema version, which are used in the URL to access the API of the topic. The other names are used to identify instance resources in the CLI. For example, `kubectl get kafkatopic my-topic` or `kubectl get kafkatopics`.

(3) The shortname can be used in CLI commands. For example, `kubectl get kt` can be used as an abbreviation instead of `kubectl get kafkatopic`.

(4) The information presented when using a `get` command on the custom resource.

(5) The current status of the CRD as described in the schema reference for the resource.

(6) openAPIV3Schema validation provides validation for the creation of topic custom resources. For example, a topic requires at least one partition and one replica.

Note: You can identify the CRD YAML files supplied with the Strimzi installation files, because the file names contain an index number followed by ‘Crd’.

Here is a corresponding example of a KafkaTopic custom resource.

    apiVersion: kafka.strimzi.io/v1beta2
    kind: KafkaTopic # (1)
    metadata:
      name: my-topic
      labels:
        strimzi.io/cluster: my-cluster # (2)
    spec: # (3)
      partitions: 1
      replicas: 1
      config:
        retention.ms: 7200000
        segment.bytes: 1073741824
    status:
      conditions: # (4)
        - lastTransitionTime: "2019-08-20T11:37:00.706Z"
          status: "True"
          type: Ready
      observedGeneration: 1
      # ...

(1) The `kind` and `apiVersion` identify the CRD of which the custom resource is an instance.

(2) A label, applicable only to `KafkaTopic` and `KafkaUser` resources, that defines the name of the Kafka cluster (which is the same as the name of the `Kafka` resource) to which a topic or user belongs.

(3) The spec shows the number of partitions and replicas for the topic as well as the configuration parameters for the topic itself. In this example, the retention period for a message to remain in the topic and the segment file size for the log are specified.

(4) Status conditions for the `KafkaTopic` resource. The `type` condition changed to `Ready` at the `lastTransitionTime`.

Custom resources can be applied to a cluster through the platform CLI. When the custom resource is created, it uses the same validation as the built-in resources of the Kubernetes API.
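To illustrate that validation, the following hypothetical `KafkaTopic` sets `partitions` below the minimum of 1 declared in the CRD sample. Assuming the CRD is installed with that schema, the Kubernetes API would be expected to reject the resource when it is applied, before the Topic Operator ever sees it. The resource name `invalid-topic` is an assumption made for this sketch.

```yaml
# Hypothetical resource used only to illustrate schema validation
apiVersion: kafka.strimzi.io/v1beta2
kind: KafkaTopic
metadata:
  name: invalid-topic                # assumed name
  labels:
    strimzi.io/cluster: my-cluster
spec:
  partitions: 0                      # violates "minimum: 1" in the CRD schema
  replicas: 1
```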

After a KafkaTopic custom resource is created, the Topic Operator is notified and corresponding Kafka topics are created in Strimzi.

1.6. Listener configuration

Listeners are used to connect to Kafka brokers.

Strimzi provides a GenericKafkaListener schema with properties to configure listeners through the Kafka resource.

The GenericKafkaListener provides a flexible approach to listener configuration.

You can specify properties to configure internal listeners for connecting within the Kubernetes cluster, or external listeners for connecting outside the Kubernetes cluster.

Listeners are defined as an array in the Kafka resource, with one entry per listener.

You can configure as many listeners as required, as long as their names and ports are unique.

You might want to configure multiple external listeners, for example, to handle access from networks that require different authentication mechanisms. Or you might need to join your Kubernetes network to an outside network, in which case you can configure internal listeners (using the useServiceDnsDomain property) so that the Kubernetes service DNS domain (typically .cluster.local) is not used.

For more information on the configuration options available for listeners, see the GenericKafkaListener schema reference.
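As a minimal sketch of the listener array, the following fragment defines one internal and one external listener with unique names and ports. The listener names, port numbers, and the choice of `loadbalancer` and `scram-sha-512` are assumptions picked for illustration; substitute the types and authentication mechanisms that suit your environment.

```yaml
apiVersion: kafka.strimzi.io/v1beta2
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
    listeners:
      # Internal listener for clients running inside the Kubernetes cluster
      - name: plain
        port: 9092
        type: internal
        tls: false
        configuration:
          useServiceDnsDomain: false   # do not use the service DNS domain suffix
      # External listener for clients outside the Kubernetes cluster
      - name: external
        port: 9094
        type: loadbalancer
        tls: true
        authentication:
          type: scram-sha-512
    # ...
  zookeeper:
    # ...
```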

You can configure listeners for secure connection using authentication. For more information on securing access to Kafka brokers, see Managing access to Kafka.

You can configure external listeners for client access outside a Kubernetes environment using a specified connection mechanism, such as a loadbalancer. For more information on the configuration options for connecting an external client, see Configuring external listeners.

You can provide your own server certificates, called Kafka listener certificates, for TLS listeners or external listeners which have TLS encryption enabled. For more information, see Kafka listener certificates.

1.7. Document Conventions

In this document, replaceable text is styled in monospace, with italics, uppercase, and hyphens.

For example, in the following code, you will want to replace MY-NAMESPACE with the name of your namespace:

    sed -i 's/namespace: .*/namespace: MY-NAMESPACE/' install/cluster-operator/*RoleBinding*.yaml

2. Configuring a Strimzi deployment

This chapter describes how to configure different aspects of the supported deployments using custom resources:

- Kafka clusters
- Kafka Connect clusters
- Kafka Connect clusters with Source2Image support
- Kafka MirrorMaker
- Kafka Bridge
- Cruise Control

Note: Labels applied to a custom resource are also applied to the Kubernetes resources comprising Kafka MirrorMaker. This provides a convenient mechanism for resources to be labeled as required.

The Deploying and Upgrading Strimzi guide describes how to monitor your Strimzi deployment.

2.1. Kafka cluster configuration

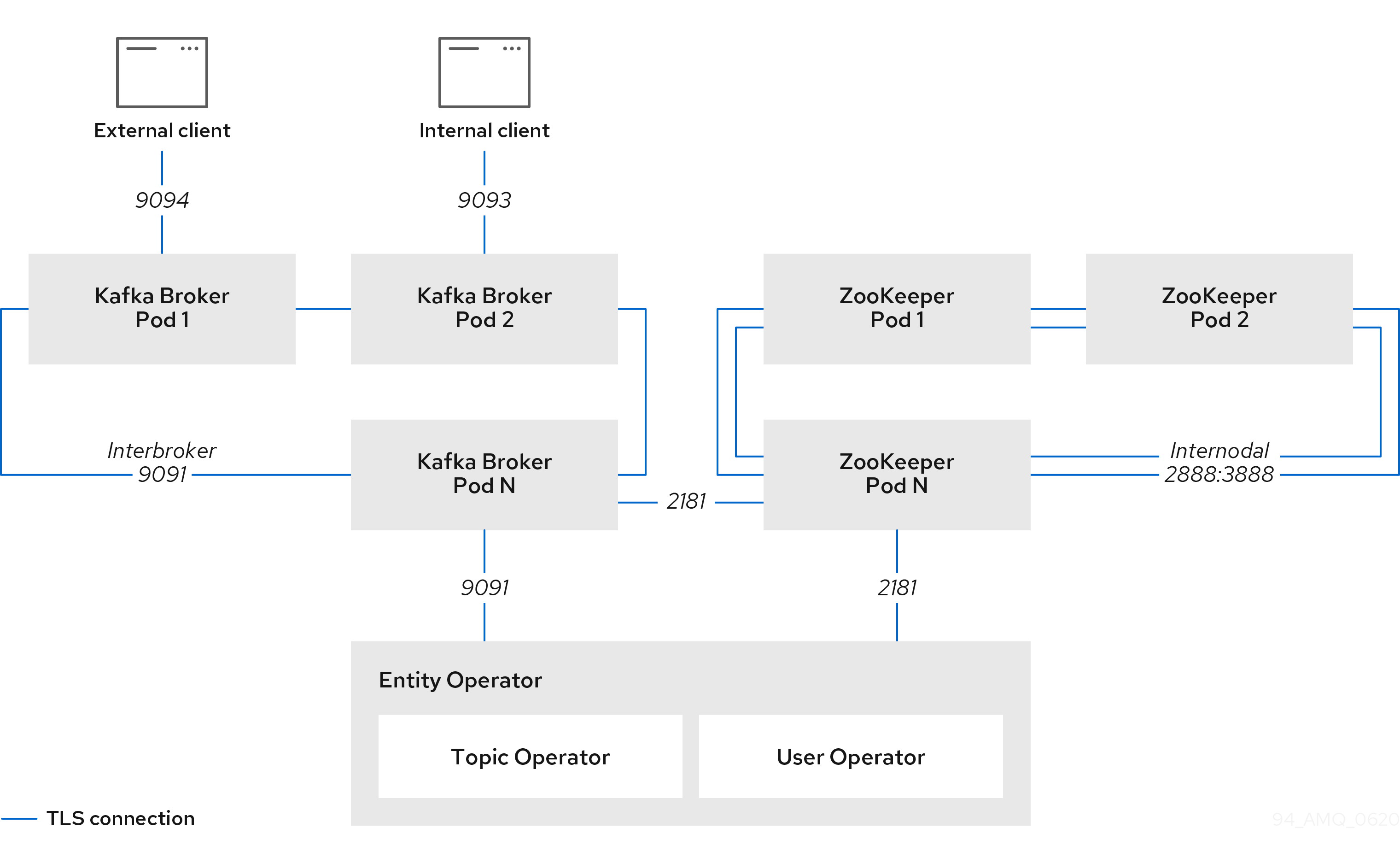

This section describes how to configure a Kafka deployment in your Strimzi cluster. A Kafka cluster is deployed with a ZooKeeper cluster. The deployment can also include the Topic Operator and User Operator, which manage Kafka topics and users.

You configure Kafka using the Kafka resource.

Configuration options are also available for ZooKeeper and the Entity Operator within the Kafka resource.

The Entity Operator comprises the Topic Operator and User Operator.

The full schema of the Kafka resource is described in the Kafka schema reference.

You configure listeners for connecting clients to Kafka brokers. For more information on configuring listeners for connecting brokers, see Listener configuration.

You can configure your Kafka cluster to allow or decline actions executed by users. For more information on securing access to Kafka brokers, see Managing access to Kafka.

When deploying Kafka, the Cluster Operator automatically sets up and renews TLS certificates to enable encryption and authentication within your cluster. If required, you can manually renew the cluster and client CA certificates before their renewal period ends. You can also replace the keys used by the cluster and client CA certificates. For more information, see Renewing CA certificates manually and Replacing private keys.

- For more information about Apache Kafka, see the Apache Kafka website.

2.1.1. Configuring Kafka

Use the properties of the Kafka resource to configure your Kafka deployment.

As well as configuring Kafka, you can add configuration for ZooKeeper and the Strimzi Operators. Common configuration properties, such as logging and healthchecks, are configured independently for each component.

This procedure shows only some of the possible configuration options, but those that are particularly important include:

- Resource requests (CPU / Memory)
- JVM options for maximum and minimum memory allocation
- Listeners (and authentication of clients)
- Authentication
- Storage
- Rack awareness
- Metrics
- Cruise Control for cluster rebalancing

The log.message.format.version and inter.broker.protocol.version properties for the Kafka config must be the versions supported by the specified Kafka version (spec.kafka.version).

The properties represent the log format version appended to messages and the version of Kafka protocol used in a Kafka cluster.

Updates to these properties are required when upgrading your Kafka version.

For more information, see Upgrading Kafka in the Deploying and Upgrading Strimzi guide.

Prerequisites

- A Kubernetes cluster
- A running Cluster Operator

See the Deploying and Upgrading Strimzi guide for deployment instructions.

Procedure

1. Edit the `spec` properties for the `Kafka` resource.

   The properties you can configure are shown in this example configuration:

       apiVersion: kafka.strimzi.io/v1beta2
       kind: Kafka
       metadata:
         name: my-cluster
       spec:
         kafka:
           replicas: 3 # (1)
           version: 2.8.0 # (2)
           logging: # (3)
             type: inline
             loggers:
               kafka.root.logger.level: "INFO"
           resources: # (4)
             requests:
               memory: 64Gi
               cpu: "8"
             limits:
               memory: 64Gi
               cpu: "12"
           readinessProbe: # (5)
             initialDelaySeconds: 15
             timeoutSeconds: 5
           livenessProbe:
             initialDelaySeconds: 15
             timeoutSeconds: 5
           jvmOptions: # (6)
             -Xms: 8192m
             -Xmx: 8192m
           image: my-org/my-image:latest # (7)
           listeners: # (8)
             - name: plain # (9)
               port: 9092 # (10)
               type: internal # (11)
               tls: false # (12)
               configuration:
                 useServiceDnsDomain: true # (13)
             - name: tls
               port: 9093
               type: internal
               tls: true
               authentication: # (14)
                 type: tls
             - name: external # (15)
               port: 9094
               type: route
               tls: true
               configuration:
                 brokerCertChainAndKey: # (16)
                   secretName: my-secret
                   certificate: my-certificate.crt
                   key: my-key.key
           authorization: # (17)
             type: simple
           config: # (18)
             auto.create.topics.enable: "false"
             offsets.topic.replication.factor: 3
             transaction.state.log.replication.factor: 3
             transaction.state.log.min.isr: 2
             log.message.format.version: 2.8
             inter.broker.protocol.version: 2.8
             ssl.cipher.suites: "TLS_ECDHE_RSA_WITH_AES_256_GCM_SHA384" # (19)
             ssl.enabled.protocols: "TLSv1.2"
             ssl.protocol: "TLSv1.2"
           storage: # (20)
             type: persistent-claim # (21)
             size: 10000Gi # (22)
           rack: # (23)
             topologyKey: topology.kubernetes.io/zone
           metricsConfig: # (24)
             type: jmxPrometheusExporter
             valueFrom:
               configMapKeyRef: # (25)
                 name: my-config-map
                 key: my-key
           # ...
         zookeeper: # (26)
           replicas: 3 # (27)
           logging: # (28)
             type: inline
             loggers:
               zookeeper.root.logger: "INFO"
           resources:
             requests:
               memory: 8Gi
               cpu: "2"
             limits:
               memory: 8Gi
               cpu: "2"
           jvmOptions:
             -Xms: 4096m
             -Xmx: 4096m
           storage:
             type: persistent-claim
             size: 1000Gi
           metricsConfig:
             # ...
         entityOperator: # (29)
           tlsSidecar: # (30)
             resources:
               requests:
                 cpu: 200m
                 memory: 64Mi
               limits:
                 cpu: 500m
                 memory: 128Mi
           topicOperator:
             watchedNamespace: my-topic-namespace
             reconciliationIntervalSeconds: 60
             logging: # (31)
               type: inline
               loggers:
                 rootLogger.level: "INFO"
             resources:
               requests:
                 memory: 512Mi
                 cpu: "1"
               limits:
                 memory: 512Mi
                 cpu: "1"
           userOperator:
             watchedNamespace: my-topic-namespace
             reconciliationIntervalSeconds: 60
             logging: # (32)
               type: inline
               loggers:
                 rootLogger.level: INFO
             resources:
               requests:
                 memory: 512Mi
                 cpu: "1"
               limits:
                 memory: 512Mi
                 cpu: "1"
         kafkaExporter: # (33)
           # ...
         cruiseControl: # (34)
           # ...
           tlsSidecar: # (35)
           # ...

(1) The number of replica nodes. If your cluster already has topics defined, you can scale clusters.

(2) Kafka version, which can be changed to a supported version by following the upgrade procedure.

(3) Specified Kafka loggers and log levels added directly (`inline`) or indirectly (`external`) through a ConfigMap. A custom ConfigMap must be placed under the `log4j.properties` key. For the Kafka `kafka.root.logger.level` logger, you can set the log level to INFO, ERROR, WARN, TRACE, DEBUG, FATAL or OFF.

(4) Requests for reservation of supported resources, currently `cpu` and `memory`, and limits to specify the maximum resources that can be consumed.

(5) Healthchecks to know when to restart a container (liveness) and when a container can accept traffic (readiness).

(6) JVM configuration options to optimize performance for the Virtual Machine (VM) running Kafka.

(7) ADVANCED OPTION: Container image configuration, which is recommended only in special situations.

(8) Listeners configure how clients connect to the Kafka cluster via bootstrap addresses. Listeners are configured as internal or external listeners for connection from inside or outside the Kubernetes cluster.

(9) Name to identify the listener. Must be unique within the Kafka cluster.

(10) Port number used by the listener inside Kafka. The port number has to be unique within a given Kafka cluster. Allowed port numbers are 9092 and higher with the exception of ports 9404 and 9999, which are already used for Prometheus and JMX. Depending on the listener type, the port number might not be the same as the port number that connects Kafka clients.

(11) Listener type specified as `internal`, or for external listeners, as `route`, `loadbalancer`, `nodeport` or `ingress`.

(12) Enables TLS encryption for each listener. Default is `false`. TLS encryption is not required for `route` listeners.

(13) Defines whether the fully-qualified DNS names including the cluster service suffix (usually `.cluster.local`) are assigned.

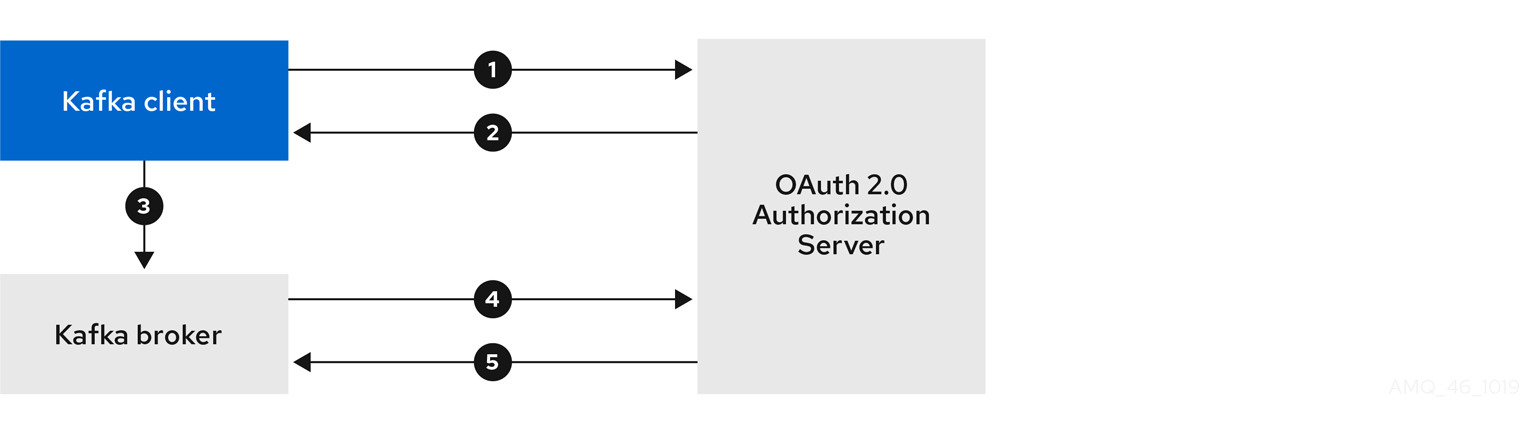

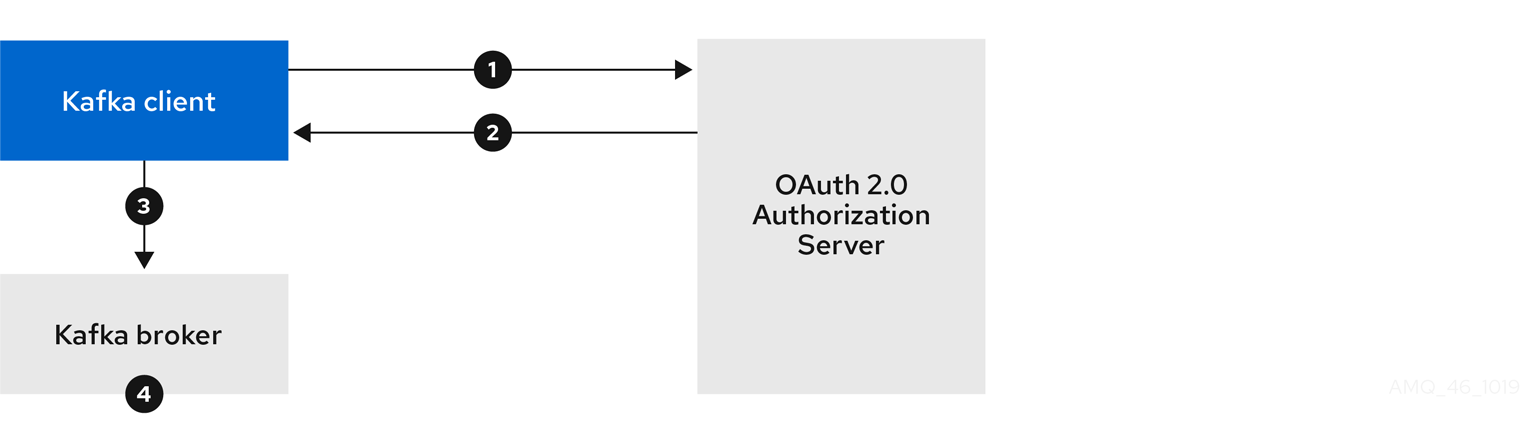

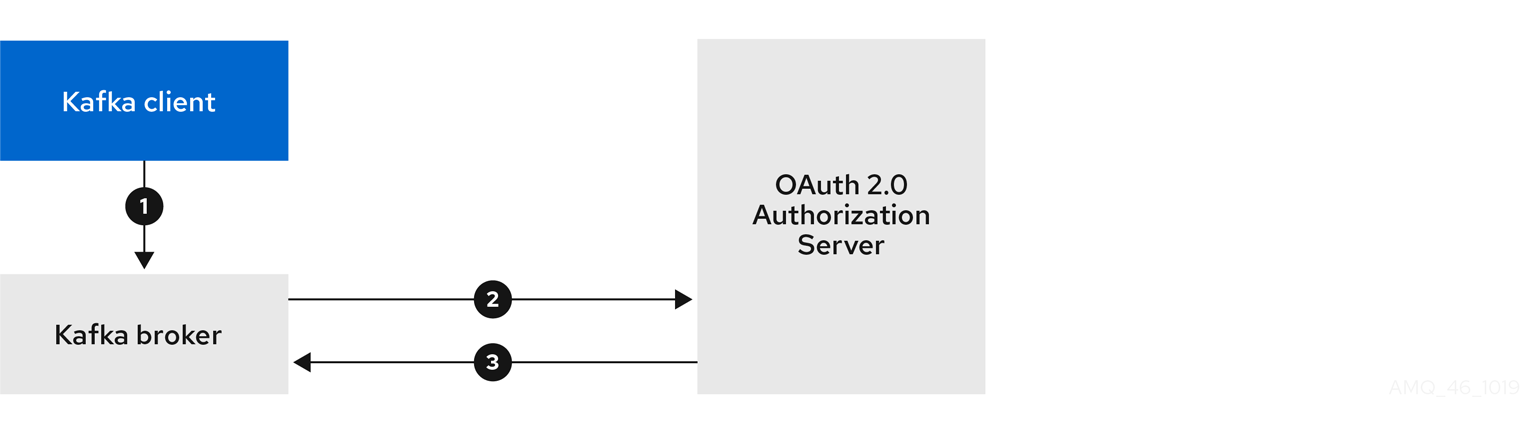

(14) Listener authentication mechanism specified as mutual TLS, SCRAM-SHA-512 or token-based OAuth 2.0.

(15) External listener configuration specifies how the Kafka cluster is exposed outside Kubernetes, such as through a `route`, `loadbalancer` or `nodeport`.

(16) Optional configuration for a Kafka listener certificate managed by an external Certificate Authority. The `brokerCertChainAndKey` specifies a `Secret` that contains a server certificate and a private key. You can configure Kafka listener certificates on any listener with enabled TLS encryption.

(17) Authorization enables simple, OAuth 2.0, or OPA authorization on the Kafka broker. Simple authorization uses the `AclAuthorizer` Kafka plugin.

(18) The `config` specifies the broker configuration. Standard Apache Kafka configuration may be provided, restricted to those properties not managed directly by Strimzi.

(19) SSL properties for listeners with TLS encryption enabled, used in this example to restrict connections to a specific cipher suite and TLS version.

(20) Storage is configured as `ephemeral`, `persistent-claim` or `jbod`.

(21) Storage size for persistent volumes may be increased and additional volumes may be added to JBOD storage.

(22) Persistent storage has additional configuration options, such as a storage `id` and `class` for dynamic volume provisioning.

(23) Rack awareness is configured to spread replicas across different racks. A `topologyKey` must match the label of a cluster node.

(24) Prometheus metrics enabled. In this example, metrics are configured for the Prometheus JMX Exporter (the default metrics exporter).

(25) Prometheus rules for exporting metrics to a Grafana dashboard through the Prometheus JMX Exporter, which are enabled by referencing a ConfigMap containing configuration for the Prometheus JMX exporter. You can enable metrics without further configuration using a reference to a ConfigMap containing an empty file under `metricsConfig.valueFrom.configMapKeyRef.key`.

(26) ZooKeeper-specific configuration, which contains properties similar to the Kafka configuration.

(27) The number of ZooKeeper nodes. ZooKeeper clusters or ensembles usually run with an odd number of nodes, typically three, five, or seven. The majority of nodes must be available in order to maintain an effective quorum. If the ZooKeeper cluster loses its quorum, it will stop responding to clients and the Kafka brokers will stop working. Having a stable and highly available ZooKeeper cluster is crucial for Strimzi.

(28) Specified ZooKeeper loggers and log levels.

(29) Entity Operator configuration, which specifies the configuration for the Topic Operator and User Operator.

(30) Entity Operator TLS sidecar configuration. Entity Operator uses the TLS sidecar for secure communication with ZooKeeper.

(31) Specified Topic Operator loggers and log levels. This example uses `inline` logging.

(32) Specified User Operator loggers and log levels.

(33) Kafka Exporter configuration. Kafka Exporter is an optional component for extracting metrics data from Kafka brokers, in particular consumer lag data.

(34) Optional configuration for Cruise Control, which is used to rebalance the Kafka cluster.

(35) Cruise Control TLS sidecar configuration. Cruise Control uses the TLS sidecar for secure communication with ZooKeeper.

2. Create or update the resource:

       kubectl apply -f KAFKA-CONFIG-FILE
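The `metricsConfig` property in the example configuration references a ConfigMap named `my-config-map` with a key named `my-key`. As a hedged sketch, such a ConfigMap might hold a small Prometheus JMX Exporter configuration like the one below. The single catch-all rule is an assumption chosen to keep the example short; a production configuration would normally list specific patterns.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: my-config-map          # must match configMapKeyRef.name
data:
  # The key must match configMapKeyRef.key in the Kafka resource
  my-key: |
    lowercaseOutputName: true
    rules:
      # Illustrative catch-all rule that exports every MBean attribute
      - pattern: ".*"
```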

2.1.2. Configuring the Entity Operator

The Entity Operator is responsible for managing Kafka-related entities in a running Kafka cluster.

The Entity Operator comprises the:

- Topic Operator to manage Kafka topics
- User Operator to manage Kafka users

Through Kafka resource configuration, the Cluster Operator can deploy the Entity Operator, including one or both operators, when deploying a Kafka cluster.

Note: When deployed, the Entity Operator contains the operators according to the deployment configuration.

The operators are automatically configured to manage the topics and users of the Kafka cluster.

Entity Operator configuration properties

Use the entityOperator property in Kafka.spec to configure the Entity Operator.

The entityOperator property supports several sub-properties:

- `tlsSidecar`
- `topicOperator`
- `userOperator`
- `template`

The tlsSidecar property contains the configuration of the TLS sidecar container, which is used to communicate with ZooKeeper.

The template property contains the configuration of the Entity Operator pod, such as labels, annotations, affinity, and tolerations.

For more information on configuring templates, see Customizing Kubernetes resources.

The topicOperator property contains the configuration of the Topic Operator.

When this option is missing, the Entity Operator is deployed without the Topic Operator.

The userOperator property contains the configuration of the User Operator.

When this option is missing, the Entity Operator is deployed without the User Operator.

For more information on the properties used to configure the Entity Operator, see the EntityUserOperatorSpec schema reference.

    apiVersion: kafka.strimzi.io/v1beta2
    kind: Kafka
    metadata:
      name: my-cluster
    spec:
      kafka:
        # ...
      zookeeper:
        # ...
      entityOperator:
        topicOperator: {}
        userOperator: {}

If an empty object (`{}`) is used for the `topicOperator` and `userOperator`, all properties use their default values.

When both topicOperator and userOperator properties are missing, the Entity Operator is not deployed.
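The `tlsSidecar` and `template` properties can sit alongside the two operators. The fragment below is a sketch only: the resource values and the `team: streaming` label are hypothetical, and the available template fields should be confirmed against the schema reference for your Strimzi version.

```yaml
apiVersion: kafka.strimzi.io/v1beta2
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
  zookeeper:
    # ...
  entityOperator:
    tlsSidecar:
      resources:               # illustrative values
        requests:
          cpu: 200m
          memory: 64Mi
    topicOperator: {}          # deployed with default values
    userOperator: {}           # deployed with default values
    template:
      pod:
        metadata:
          labels:
            team: streaming    # hypothetical label applied to the Entity Operator pod
```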

Topic Operator configuration properties

Topic Operator deployment can be configured using additional options inside the topicOperator object.

The following properties are supported:

- `watchedNamespace`: The Kubernetes namespace in which the Topic Operator watches for `KafkaTopics`. Default is the namespace where the Kafka cluster is deployed.
- `reconciliationIntervalSeconds`: The interval between periodic reconciliations in seconds. Default `120`.
- `zookeeperSessionTimeoutSeconds`: The ZooKeeper session timeout in seconds. Default `18`.
- `topicMetadataMaxAttempts`: The number of attempts at getting topic metadata from Kafka. The time between each attempt is defined as an exponential back-off. Consider increasing this value when topic creation might take more time due to the number of partitions or replicas. Default `6`.
- `image`: The `image` property can be used to configure the container image which will be used. For more details about configuring custom container images, see `image`.
- `resources`: The `resources` property configures the amount of resources allocated to the Topic Operator. For more details about resource request and limit configuration, see `resources`.
- `logging`: The `logging` property configures the logging of the Topic Operator. For more details, see `logging`.

    apiVersion: kafka.strimzi.io/v1beta2
    kind: Kafka
    metadata:
      name: my-cluster
    spec:
      kafka:
        # ...
      zookeeper:
        # ...
      entityOperator:
        # ...
        topicOperator:
          watchedNamespace: my-topic-namespace
          reconciliationIntervalSeconds: 60
        # ...

User Operator configuration properties

User Operator deployment can be configured using additional options inside the userOperator object.

The following properties are supported:

- `watchedNamespace`: The Kubernetes namespace in which the User Operator watches for `KafkaUsers`. Default is the namespace where the Kafka cluster is deployed.
- `reconciliationIntervalSeconds`: The interval between periodic reconciliations in seconds. Default `120`.
- `zookeeperSessionTimeoutSeconds`: The ZooKeeper session timeout in seconds. Default `18`.
- `image`: The `image` property can be used to configure the container image which will be used. For more details about configuring custom container images, see `image`.
- `resources`: The `resources` property configures the amount of resources allocated to the User Operator. For more details about resource request and limit configuration, see `resources`.
- `logging`: The `logging` property configures the logging of the User Operator. For more details, see `logging`.
- `secretPrefix`: The `secretPrefix` property adds a prefix to the name of all Secrets created from the KafkaUser resource. For example, `STRIMZI_SECRET_PREFIX=kafka-` would prefix all Secret names with `kafka-`. So a KafkaUser named `my-user` would create a Secret named `kafka-my-user`.

    apiVersion: kafka.strimzi.io/v1beta2
    kind: Kafka
    metadata:
      name: my-cluster
    spec:
      kafka:
        # ...
      zookeeper:
        # ...
      entityOperator:
        # ...
        userOperator:
          watchedNamespace: my-user-namespace
          reconciliationIntervalSeconds: 60
        # ...

2.1.3. Kafka and ZooKeeper storage types

As stateful applications, Kafka and ZooKeeper need to store data on disk. Strimzi supports three storage types for this data:

- Ephemeral
- Persistent
- JBOD storage

Note: JBOD storage is supported only for Kafka, not for ZooKeeper.

When configuring a Kafka resource, you can specify the type of storage used by the Kafka broker and its corresponding ZooKeeper node. You configure the storage type using the storage property in the following resources:

- `Kafka.spec.kafka`
- `Kafka.spec.zookeeper`

The storage type is configured in the type field.

Warning: The storage type cannot be changed after a Kafka cluster is deployed.
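As a sketch of where the `type` field sits, the following fragment gives Kafka JBOD storage made up of two persistent volumes and gives ZooKeeper a single persistent volume. The volume sizes and the `deleteClaim` settings are assumptions for illustration; see the storage schema references for the full set of properties.

```yaml
apiVersion: kafka.strimzi.io/v1beta2
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
    storage:
      type: jbod                   # JBOD is supported for Kafka only
      volumes:
        - id: 0
          type: persistent-claim
          size: 100Gi              # illustrative size
          deleteClaim: false
        - id: 1
          type: persistent-claim
          size: 100Gi
          deleteClaim: false
  zookeeper:
    # ...
    storage:
      type: persistent-claim       # ZooKeeper supports ephemeral or persistent storage
      size: 100Gi
      deleteClaim: false
```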

- For more information about ephemeral storage, see ephemeral storage schema reference.
- For more information about persistent storage, see persistent storage schema reference.
- For more information about JBOD storage, see JBOD schema reference.
- For more information about the schema for `Kafka`, see `Kafka` schema reference.

Data storage considerations

An efficient data storage infrastructure is essential to the optimal performance of Strimzi.

Block storage is required. File storage, such as NFS, does not work with Kafka.

For your block storage, you can choose, for example:

- Cloud-based block storage solutions, such as Amazon Elastic Block Store (EBS)
- Storage Area Network (SAN) volumes accessed by a protocol such as Fibre Channel or iSCSI

|

Note

|

Strimzi does not require Kubernetes raw block volumes. |

File systems

It is recommended that you configure your storage system to use the XFS file system. Strimzi is also compatible with the ext4 file system, but this might require additional configuration for best results.

Apache Kafka and ZooKeeper storage

Use separate disks for Apache Kafka and ZooKeeper.

Three types of data storage are supported:

-

Ephemeral (Recommended for development only)

-

Persistent

-

JBOD (Just a Bunch of Disks, suitable for Kafka only)

For more information, see Kafka and ZooKeeper storage.

Solid-state drives (SSDs), though not essential, can improve the performance of Kafka in large clusters where data is sent to and received from multiple topics asynchronously. SSDs are particularly effective with ZooKeeper, which requires fast, low latency data access.

|

Note

|

You do not need to provision replicated storage because Kafka and ZooKeeper both have built-in data replication. |

Ephemeral storage

Ephemeral storage uses emptyDir volumes to store data.

To use ephemeral storage, set the type field to ephemeral.

|

Important

|

emptyDir volumes are not persistent and the data stored in them is lost when the pod is restarted.

After the new pod is started, it must recover all data from the other nodes of the cluster.

Ephemeral storage is not suitable for use with single-node ZooKeeper clusters or for Kafka topics with a replication factor of 1. This configuration will cause data loss.

|

apiVersion: kafka.strimzi.io/v1beta2
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
    storage:
      type: ephemeral
    # ...
  zookeeper:
    # ...
    storage:
      type: ephemeral
    # ...

Log directories

The ephemeral volume is used by the Kafka brokers as log directories mounted into the following path:

/var/lib/kafka/data/kafka-logIDX

Where IDX is the Kafka broker pod index. For example, /var/lib/kafka/data/kafka-log0.

Persistent storage

Persistent storage uses Persistent Volume Claims to provision persistent volumes for storing data. Persistent Volume Claims can be used to provision volumes of many different types, depending on the Storage Class that provisions the volume. The storage types that can be used with Persistent Volume Claims include many types of SAN storage as well as local persistent volumes.

To use persistent storage, the type has to be set to persistent-claim.

Persistent storage supports additional configuration options:

id (optional)

Storage identification number. This option is mandatory for storage volumes defined in a JBOD storage declaration. Default is 0.

size (required)

Defines the size of the persistent volume claim, for example, "1000Gi".

class (optional)

The Kubernetes Storage Class to use for dynamic volume provisioning.

selector (optional)

Allows selecting a specific persistent volume to use. It contains key:value pairs representing labels for selecting such a volume.

deleteClaim (optional)

Boolean value which specifies if the Persistent Volume Claim has to be deleted when the cluster is undeployed. Default is false.

|

Warning

|

Increasing the size of persistent volumes in an existing Strimzi cluster is only supported in Kubernetes versions that support persistent volume resizing. The persistent volume to be resized must use a storage class that supports volume expansion. For other versions of Kubernetes and storage classes which do not support volume expansion, you must decide the necessary storage size before deploying the cluster. Decreasing the size of existing persistent volumes is not possible. |

The following example shows a persistent storage configuration with a size of 1000Gi.

# ...
storage:
  type: persistent-claim
  size: 1000Gi
# ...

The following example demonstrates the use of a storage class.

# ...
storage:
  type: persistent-claim
  size: 1Gi
  class: my-storage-class
# ...

Finally, a selector can be used to select a specific labeled persistent volume to provide needed features such as an SSD.

# ...
storage:
  type: persistent-claim
  size: 1Gi
  selector:
    hdd-type: ssd
  deleteClaim: true
# ...

Storage class overrides

You can specify a different storage class for one or more Kafka brokers or ZooKeeper nodes, instead of using the default storage class.

This is useful if, for example, storage classes are restricted to different availability zones or data centers.

You can use the overrides field for this purpose.

In this example, the default storage class is named my-storage-class:

apiVersion: kafka.strimzi.io/v1beta2
kind: Kafka
metadata:
  labels:
    app: my-cluster
  name: my-cluster
  namespace: myproject
spec:
  # ...
  kafka:
    replicas: 3
    storage:
      deleteClaim: true
      size: 100Gi
      type: persistent-claim
      class: my-storage-class
      overrides:
        - broker: 0
          class: my-storage-class-zone-1a
        - broker: 1
          class: my-storage-class-zone-1b
        - broker: 2
          class: my-storage-class-zone-1c
    # ...
  zookeeper:
    replicas: 3
    storage:
      deleteClaim: true
      size: 100Gi
      type: persistent-claim
      class: my-storage-class
      overrides:
        - broker: 0
          class: my-storage-class-zone-1a
        - broker: 1
          class: my-storage-class-zone-1b
        - broker: 2
          class: my-storage-class-zone-1c
    # ...

As a result of the configured overrides property, the volumes use the following storage classes:

-

The persistent volumes of ZooKeeper node 0 will use my-storage-class-zone-1a.

-

The persistent volumes of ZooKeeper node 1 will use my-storage-class-zone-1b.

-

The persistent volumes of ZooKeeper node 2 will use my-storage-class-zone-1c.

-

The persistent volumes of Kafka broker 0 will use my-storage-class-zone-1a.

-

The persistent volumes of Kafka broker 1 will use my-storage-class-zone-1b.

-

The persistent volumes of Kafka broker 2 will use my-storage-class-zone-1c.

The overrides property is currently used only to override storage class configurations. Overriding other storage configuration fields is not currently supported.

Persistent Volume Claim naming

When persistent storage is used, Strimzi creates Persistent Volume Claims with the following names:

data-cluster-name-kafka-idx

Persistent Volume Claim for the volume used for storing data for the Kafka broker pod idx.

data-cluster-name-zookeeper-idx

Persistent Volume Claim for the volume used for storing data for the ZooKeeper node pod idx.
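As a rough illustration of this naming scheme, the following shell sketch prints the Persistent Volume Claim names you would expect for a given cluster. The function expected_pvc_names and its arguments are hypothetical helpers introduced here for illustration; they are not part of Strimzi.

```shell
# Hypothetical helper: print the PVC names expected for persistent (non-JBOD)
# storage, following the data-<cluster-name>-<component>-<idx> scheme.
# Arguments: cluster name, component (kafka or zookeeper), number of replicas.
expected_pvc_names() (
  cluster="$1"; component="$2"; replicas="$3"
  idx=0
  while [ "$idx" -lt "$replicas" ]; do
    echo "data-${cluster}-${component}-${idx}"
    idx=$((idx + 1))
  done
)

expected_pvc_names my-cluster kafka 3
expected_pvc_names my-cluster zookeeper 3
```

You could compare this list with the output of kubectl get pvc in the cluster namespace to spot missing or leftover claims.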

Log directories

The persistent volume is used by the Kafka brokers as log directories mounted into the following path:

/var/lib/kafka/data/kafka-logIDX

Where IDX is the Kafka broker pod index. For example, /var/lib/kafka/data/kafka-log0.

Resizing persistent volumes

You can provision increased storage capacity by increasing the size of the persistent volumes used by an existing Strimzi cluster. Resizing persistent volumes is supported in clusters that use either a single persistent volume or multiple persistent volumes in a JBOD storage configuration.

|

Note

|

You can increase but not decrease the size of persistent volumes. Decreasing the size of persistent volumes is not currently supported in Kubernetes. |

-

A Kubernetes cluster with support for volume resizing.

-

The Cluster Operator is running.

-

A Kafka cluster using persistent volumes created using a storage class that supports volume expansion.

-

In a Kafka resource, increase the size of the persistent volume allocated to the Kafka cluster, the ZooKeeper cluster, or both.

-

To increase the volume size allocated to the Kafka cluster, edit the spec.kafka.storage property.

-

To increase the volume size allocated to the ZooKeeper cluster, edit the spec.zookeeper.storage property.

For example, to increase the volume size from 1000Gi to 2000Gi:

apiVersion: kafka.strimzi.io/v1beta2
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
    storage:
      type: persistent-claim
      size: 2000Gi
      class: my-storage-class
    # ...
  zookeeper:
    # ...

-

Create or update the resource:

kubectl apply -f KAFKA-CONFIG-FILE

Kubernetes increases the capacity of the selected persistent volumes in response to a request from the Cluster Operator. When the resizing is complete, the Cluster Operator restarts all pods that use the resized persistent volumes. This happens automatically.

For more information about resizing persistent volumes in Kubernetes, see Resizing Persistent Volumes using Kubernetes.

JBOD storage overview

You can configure Strimzi to use JBOD, a data storage configuration of multiple disks or volumes. JBOD is one approach to providing increased data storage for Kafka brokers. It can also improve performance.

A JBOD configuration is described by one or more volumes, each of which can be either ephemeral or persistent. The rules and constraints for JBOD volume declarations are the same as those for ephemeral and persistent storage. For example, you cannot decrease the size of a persistent storage volume after it has been provisioned, and you cannot change the value of sizeLimit when the type is ephemeral.

JBOD configuration

To use JBOD with Strimzi, the storage type must be set to jbod. The volumes property allows you to describe the disks that make up your JBOD storage array or configuration. The following fragment shows an example JBOD configuration:

# ...
storage:
  type: jbod
  volumes:
    - id: 0
      type: persistent-claim
      size: 100Gi
      deleteClaim: false
    - id: 1
      type: persistent-claim
      size: 100Gi
      deleteClaim: false
# ...

The ids cannot be changed once the JBOD volumes are created.

Users can add or remove volumes from the JBOD configuration.

JBOD and Persistent Volume Claims

When persistent storage is used to declare JBOD volumes, the naming scheme of the resulting Persistent Volume Claims is as follows:

data-id-cluster-name-kafka-idx

Where id is the ID of the volume used for storing data for Kafka broker pod idx.

Log directories

The JBOD volumes will be used by the Kafka brokers as log directories mounted into the following path:

/var/lib/kafka/data-id/kafka-logidx

Where id is the ID of the volume used for storing data for Kafka broker pod idx. For example, /var/lib/kafka/data-0/kafka-log0.
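To make the two JBOD naming patterns concrete, here is a minimal shell sketch that builds the Persistent Volume Claim name and the log directory path for a given volume and broker. The helper names jbod_pvc_name and jbod_log_dir are assumptions made for this example only.

```shell
# Hypothetical helpers mirroring the JBOD naming patterns described above.

# Arguments: volume id, cluster name, broker pod index.
jbod_pvc_name() {
  echo "data-$1-$2-kafka-$3"
}

# Arguments: volume id, broker pod index.
jbod_log_dir() {
  echo "/var/lib/kafka/data-$1/kafka-log$2"
}

# Volume 1 of broker pod 2 in a cluster named my-cluster.
jbod_pvc_name 1 my-cluster 2   # prints data-1-my-cluster-kafka-2
jbod_log_dir 1 2               # prints /var/lib/kafka/data-1/kafka-log2
```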

Adding volumes to JBOD storage

This procedure describes how to add volumes to a Kafka cluster configured to use JBOD storage. It cannot be applied to Kafka clusters configured to use any other storage type.

|

Note

|

When adding a new volume under an id which was already used in the past and removed, you have to make sure that the previously used PersistentVolumeClaims have been deleted.

|

-

A Kubernetes cluster

-

A running Cluster Operator

-

A Kafka cluster with JBOD storage

-

Edit the spec.kafka.storage.volumes property in the Kafka resource. Add the new volumes to the volumes array. For example, add the new volume with id 2:

apiVersion: kafka.strimzi.io/v1beta2
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
    storage:
      type: jbod
      volumes:
        - id: 0
          type: persistent-claim
          size: 100Gi
          deleteClaim: false
        - id: 1
          type: persistent-claim
          size: 100Gi
          deleteClaim: false
        - id: 2
          type: persistent-claim
          size: 100Gi
          deleteClaim: false
    # ...
  zookeeper:
    # ...

-

Create or update the resource:

kubectl apply -f KAFKA-CONFIG-FILE

-

Create new topics or reassign existing partitions to the new disks.

For more information about reassigning topics, see Partition reassignment.

Removing volumes from JBOD storage

This procedure describes how to remove volumes from a Kafka cluster configured to use JBOD storage. It cannot be applied to Kafka clusters configured to use any other storage type. The JBOD storage always has to contain at least one volume.

|

Important

|

To avoid data loss, you have to move all partitions before removing the volumes. |

-

A Kubernetes cluster

-

A running Cluster Operator

-

A Kafka cluster with JBOD storage with two or more volumes

-

Reassign all partitions from the disks that you are going to remove. Any data in partitions still assigned to the disks that are going to be removed might be lost.

-

Edit the spec.kafka.storage.volumes property in the Kafka resource. Remove one or more volumes from the volumes array. For example, remove the volumes with ids 1 and 2:

apiVersion: kafka.strimzi.io/v1beta2
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
    storage:
      type: jbod
      volumes:
        - id: 0
          type: persistent-claim
          size: 100Gi
          deleteClaim: false
    # ...
  zookeeper:
    # ...

-

Create or update the resource:

kubectl apply -f KAFKA-CONFIG-FILE

For more information about reassigning topics, see Partition reassignment.

2.1.4. Scaling clusters

Scaling Kafka clusters

Adding brokers to a cluster

The primary way of increasing throughput for a topic is to increase the number of partitions for that topic. That works because the extra partitions allow the load of the topic to be shared between the different brokers in the cluster. However, in situations where every broker is constrained by a particular resource (typically I/O) using more partitions will not result in increased throughput. Instead, you need to add brokers to the cluster.

When you add an extra broker to the cluster, Kafka does not assign any partitions to it automatically. You must decide which partitions to move from the existing brokers to the new broker.

Once the partitions have been redistributed between all the brokers, the resource utilization of each broker should be reduced.

Removing brokers from a cluster

Because Strimzi uses StatefulSets to manage broker pods, you cannot remove an arbitrary pod from the cluster.

You can only remove one or more of the highest numbered pods from the cluster.

For example, in a cluster of 12 brokers the pods are named cluster-name-kafka-0 up to cluster-name-kafka-11.

If you decide to scale down by one broker, the cluster-name-kafka-11 pod will be removed.

Before you remove a broker from a cluster, ensure that it is not assigned to any partitions. You should also decide which of the remaining brokers will be responsible for each of the partitions on the broker being decommissioned. Once the broker has no assigned partitions, you can scale the cluster down safely.
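The highest-numbered-first rule can be sketched in shell as follows. This is only an illustration of which pod names are affected when the replica count is lowered; the function pods_removed_on_scale_down is a hypothetical helper, not a Strimzi or Kubernetes command.

```shell
# Hypothetical helper: list the broker pods that disappear when the number of
# replicas is reduced, highest index first.
# Arguments: cluster name, current replica count, target replica count.
pods_removed_on_scale_down() (
  cluster="$1"; idx=$(( $2 - 1 )); target="$3"
  while [ "$idx" -ge "$target" ]; do
    echo "${cluster}-kafka-${idx}"
    idx=$((idx - 1))
  done
)

# Scaling a 12-broker cluster down by one broker.
pods_removed_on_scale_down cluster-name 12 11   # prints cluster-name-kafka-11
```

The brokers in the listed pods are the ones whose partitions must be reassigned before you scale down.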

Partition reassignment

The Topic Operator does not currently support reassigning replicas to different brokers, so it is necessary to connect directly to broker pods to reassign replicas to brokers.

Within a broker pod, the kafka-reassign-partitions.sh utility allows you to reassign partitions to different brokers.

It has three different modes:

--generate

Takes a set of topics and brokers and generates a reassignment JSON file which will result in the partitions of those topics being assigned to those brokers. Because this operates on whole topics, it cannot be used when you only want to reassign some partitions of some topics.

--execute

Takes a reassignment JSON file and applies it to the partitions and brokers in the cluster. Brokers that gain partitions as a result become followers of the partition leader. For a given partition, once the new broker has caught up and joined the ISR (in-sync replicas) the old broker will stop being a follower and will delete its replica.

--verify

Using the same reassignment JSON file as the --execute step, --verify checks whether all the partitions in the file have been moved to their intended brokers. If the reassignment is complete, --verify also removes any throttles that are in effect. Unless removed, throttles will continue to affect the cluster even after the reassignment has finished.

It is only possible to have one reassignment running in a cluster at any given time, and it is not possible to cancel a running reassignment.

If you need to cancel a reassignment, wait for it to complete and then perform another reassignment to revert the effects of the first reassignment.

The kafka-reassign-partitions.sh tool prints the reassignment JSON for this reversion as part of its output.

Very large reassignments should be broken down into a number of smaller reassignments in case there is a need to stop an in-progress reassignment.

Reassignment JSON file

The reassignment JSON file has a specific structure:

{
  "version": 1,
  "partitions": [
    <PartitionObjects>
  ]
}

Where <PartitionObjects> is a comma-separated list of objects like:

{
  "topic": <TopicName>,
  "partition": <Partition>,
  "replicas": [ <AssignedBrokerIds> ]
}

|

Note

|

Although Kafka also supports a "log_dirs" property this should not be used in Strimzi.

|

The following is an example reassignment JSON file that assigns partition 4 of topic topic-a to brokers 2, 4 and 7, and partition 2 of topic topic-b to brokers 1, 5 and 7:

{
  "version": 1,
  "partitions": [
    {
      "topic": "topic-a",
      "partition": 4,
      "replicas": [2,4,7]
    },
    {
      "topic": "topic-b",
      "partition": 2,
      "replicas": [1,5,7]
    }
  ]
}

Partitions not included in the JSON are not changed.
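If you write reassignment files by hand often, a small script can reduce formatting mistakes. The following is a minimal sketch that assembles the example file from the structure described in this section; the partition_object function and the output file name reassignment.json are choices made for this example.

```shell
# Hypothetical helper: print one partition object.
# Arguments: topic name, partition number, comma-separated broker IDs.
partition_object() {
  printf '{"topic": "%s", "partition": %s, "replicas": [%s]}' "$1" "$2" "$3"
}

# Assemble the reassignment file for partition 4 of topic-a and
# partition 2 of topic-b.
{
  printf '{"version": 1, "partitions": [\n  '
  partition_object topic-a 4 2,4,7
  printf ',\n  '
  partition_object topic-b 2 1,5,7
  printf '\n]}\n'
} > reassignment.json

cat reassignment.json
```

The sketch does no validation, so topic names that contain characters needing JSON escaping would have to be handled separately.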

Reassigning partitions between JBOD volumes

When using JBOD storage in your Kafka cluster, you can choose to reassign the partitions between specific volumes and their log directories (each volume has a single log directory).

To reassign a partition to a specific volume, add the log_dirs option to <PartitionObjects> in the reassignment JSON file.

{
  "topic": <TopicName>,
  "partition": <Partition>,
  "replicas": [ <AssignedBrokerIds> ],
  "log_dirs": [ <AssignedLogDirs> ]
}

The log_dirs object should contain the same number of log directories as the number of replicas specified in the replicas object.

The value should be either an absolute path to the log directory, or the any keyword.

For example:

{
  "topic": "topic-a",
  "partition": 4,
  "replicas": [2,4,7],
  "log_dirs": [ "/var/lib/kafka/data-0/kafka-log2", "/var/lib/kafka/data-0/kafka-log4", "/var/lib/kafka/data-0/kafka-log7" ]
}

Generating reassignment JSON files

This procedure describes how to generate a reassignment JSON file that reassigns all the partitions for a given set of topics using the kafka-reassign-partitions.sh tool.

-

A running Cluster Operator

-

A Kafka resource

-

A set of topics to reassign the partitions of

-

Prepare a JSON file named topics.json that lists the topics to move. It must have the following structure:

{
  "version": 1,
  "topics": [
    <TopicObjects>
  ]
}

where <TopicObjects> is a comma-separated list of objects like:

{
  "topic": <TopicName>
}

For example, if you want to reassign all the partitions of topic-a and topic-b, you would need to prepare a topics.json file like this:

{
  "version": 1,
  "topics": [
    { "topic": "topic-a"},
    { "topic": "topic-b"}
  ]
}

-

Copy the topics.json file to one of the broker pods:

cat topics.json | kubectl exec -c kafka <BrokerPod> -i -- \
  /bin/bash -c \
  'cat > /tmp/topics.json'

-

Use the kafka-reassign-partitions.sh command to generate the reassignment JSON.

kubectl exec <BrokerPod> -c kafka -it -- \
  bin/kafka-reassign-partitions.sh --bootstrap-server localhost:9092 \
  --topics-to-move-json-file /tmp/topics.json \
  --broker-list <BrokerList> \
  --generate

For example, to move all the partitions of topic-a and topic-b to brokers 4 and 7:

kubectl exec <BrokerPod> -c kafka -it -- \
  bin/kafka-reassign-partitions.sh --bootstrap-server localhost:9092 \
  --topics-to-move-json-file /tmp/topics.json \
  --broker-list 4,7 \
  --generate
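The topics.json file from the first step can also be generated from a list of topic names. The following shell sketch shows one way to do that; make_topics_json is a hypothetical helper written for this example and assumes topic names that need no JSON escaping.

```shell
# Hypothetical helper: print a topics.json document for the topic names
# passed as arguments.
make_topics_json() (
  first=1
  printf '{ "version": 1, "topics": ['
  for topic in "$@"; do
    # Separate entries with commas, but do not emit one before the first entry.
    if [ "$first" -eq 1 ]; then first=0; else printf ','; fi
    printf ' { "topic": "%s" }' "$topic"
  done
  printf ' ] }\n'
)

make_topics_json topic-a topic-b > topics.json
cat topics.json
```

The resulting file can then be copied to a broker pod as described in the procedure.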

Creating reassignment JSON files manually

You can manually create the reassignment JSON file if you want to move specific partitions.

Reassignment throttles

Partition reassignment can be a slow process because it involves transferring large amounts of data between brokers. To avoid a detrimental impact on clients, you can throttle the reassignment process. This might cause the reassignment to take longer to complete.

-

If the throttle is too low then the newly assigned brokers will not be able to keep up with records being published and the reassignment will never complete.

-

If the throttle is too high then clients will be impacted.

For example, for producers, this could manifest as higher than normal latency waiting for acknowledgement. For consumers, this could manifest as a drop in throughput caused by higher latency between polls.
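When choosing a throttle, it can help to estimate how long a reassignment would take at that rate. The sketch below computes a rough lower bound, assuming the throttle is the only bottleneck, all moved data passes through a single throttled link, and no new records arrive during the move. The function name min_transfer_seconds and the 100 GiB figure are illustrative assumptions.

```shell
# Hypothetical helper: lower bound on transfer time in seconds.
# Arguments: number of bytes to move, throttle in bytes per second.
min_transfer_seconds() {
  # Integer division rounded up.
  echo $(( ($1 + $2 - 1) / $2 ))
}

# Moving 100 GiB with a throttle of 5000000 bytes per second.
min_transfer_seconds $((100 * 1024 * 1024 * 1024)) 5000000   # prints 21475
```

At that rate the move takes about six hours at best, which is one reason to split very large reassignments into smaller ones.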

Scaling up a Kafka cluster

This procedure describes how to increase the number of brokers in a Kafka cluster.

-

An existing Kafka cluster.

-

A reassignment JSON file named reassignment.json that describes how partitions should be reassigned to brokers in the enlarged cluster.

-

Add as many new brokers as you need by increasing the Kafka.spec.kafka.replicas configuration option.

-

Verify that the new broker pods have started.

-

Copy the reassignment.json file to the broker pod on which you will later execute the commands:

cat reassignment.json | \
  kubectl exec broker-pod -c kafka -i -- /bin/bash -c \
  'cat > /tmp/reassignment.json'

For example:

cat reassignment.json | \
  kubectl exec my-cluster-kafka-0 -c kafka -i -- /bin/bash -c \
  'cat > /tmp/reassignment.json'

-

Execute the partition reassignment using the kafka-reassign-partitions.sh command line tool from the same broker pod.

kubectl exec broker-pod -c kafka -it -- \
  bin/kafka-reassign-partitions.sh --bootstrap-server localhost:9092 \
  --reassignment-json-file /tmp/reassignment.json \
  --execute

If you are going to throttle replication you can also pass the --throttle option with an inter-broker throttled rate in bytes per second. For example:

kubectl exec my-cluster-kafka-0 -c kafka -it -- \
  bin/kafka-reassign-partitions.sh --bootstrap-server localhost:9092 \
  --reassignment-json-file /tmp/reassignment.json \
  --throttle 5000000 \
  --execute

This command will print out two reassignment JSON objects. The first records the current assignment for the partitions being moved. You should save this to a local file (not a file in the pod) in case you need to revert the reassignment later on. The second JSON object is the target reassignment you have passed in your reassignment JSON file.

-

If you need to change the throttle during reassignment you can use the same command line with a different throttled rate. For example:

kubectl exec my-cluster-kafka-0 -c kafka -it -- \
  bin/kafka-reassign-partitions.sh --bootstrap-server localhost:9092 \
  --reassignment-json-file /tmp/reassignment.json \
  --throttle 10000000 \
  --execute

-

Periodically verify whether the reassignment has completed using the kafka-reassign-partitions.sh command line tool from any of the broker pods. This is the same command as the previous step but with the --verify option instead of the --execute option.

kubectl exec broker-pod -c kafka -it -- \
  bin/kafka-reassign-partitions.sh --bootstrap-server localhost:9092 \
  --reassignment-json-file /tmp/reassignment.json \
  --verify

For example:

kubectl exec my-cluster-kafka-0 -c kafka -it -- \
  bin/kafka-reassign-partitions.sh --bootstrap-server localhost:9092 \
  --reassignment-json-file /tmp/reassignment.json \
  --verify

-

The reassignment has finished when the --verify command reports each of the partitions being moved as completed successfully. This final --verify will also have the effect of removing any reassignment throttles. You can now delete the revert file if you saved the JSON for reverting the assignment to their original brokers.

Scaling down a Kafka cluster

This procedure describes how to decrease the number of brokers in a Kafka cluster.

-

An existing Kafka cluster.

-

A reassignment JSON file named reassignment.json describing how partitions should be reassigned to brokers in the cluster once the broker(s) in the highest numbered Pod(s) have been removed.

-

Copy the reassignment.json file to the broker pod on which you will later execute the commands:

cat reassignment.json | \
  kubectl exec broker-pod -c kafka -i -- /bin/bash -c \
  'cat > /tmp/reassignment.json'

For example:

cat reassignment.json | \
  kubectl exec my-cluster-kafka-0 -c kafka -i -- /bin/bash -c \
  'cat > /tmp/reassignment.json'

-

Execute the partition reassignment using the kafka-reassign-partitions.sh command line tool from the same broker pod.

kubectl exec broker-pod -c kafka -it -- \
  bin/kafka-reassign-partitions.sh --bootstrap-server localhost:9092 \
  --reassignment-json-file /tmp/reassignment.json \
  --execute

If you are going to throttle replication you can also pass the --throttle option with an inter-broker throttled rate in bytes per second. For example:

kubectl exec my-cluster-kafka-0 -c kafka -it -- \
  bin/kafka-reassign-partitions.sh --bootstrap-server localhost:9092 \
  --reassignment-json-file /tmp/reassignment.json \
  --throttle 5000000 \
  --execute

This command will print out two reassignment JSON objects. The first records the current assignment for the partitions being moved. You should save this to a local file (not a file in the pod) in case you need to revert the reassignment later on. The second JSON object is the target reassignment you have passed in your reassignment JSON file.

-

If you need to change the throttle during reassignment you can use the same command line with a different throttled rate. For example:

kubectl exec my-cluster-kafka-0 -c kafka -it -- \
  bin/kafka-reassign-partitions.sh --bootstrap-server localhost:9092 \
  --reassignment-json-file /tmp/reassignment.json \
  --throttle 10000000 \
  --execute

-

Periodically verify whether the reassignment has completed using the kafka-reassign-partitions.sh command line tool from any of the broker pods. This is the same command as the previous step but with the --verify option instead of the --execute option.

kubectl exec broker-pod -c kafka -it -- \
  bin/kafka-reassign-partitions.sh --bootstrap-server localhost:9092 \
  --reassignment-json-file /tmp/reassignment.json \
  --verify

For example:

kubectl exec my-cluster-kafka-0 -c kafka -it -- \
  bin/kafka-reassign-partitions.sh --bootstrap-server localhost:9092 \
  --reassignment-json-file /tmp/reassignment.json \
  --verify

-

The reassignment has finished when the --verify command reports each of the partitions being moved as completed successfully. This final --verify will also have the effect of removing any reassignment throttles. You can now delete the revert file if you saved the JSON for reverting the assignment to their original brokers.

-

Once all the partition reassignments have finished, the broker(s) being removed should not have responsibility for any of the partitions in the cluster. You can verify this by checking that the broker’s data log directory does not contain any live partition logs. If the log directory on the broker contains a directory that does not match the extended regular expression [a-zA-Z0-9.-]+\.[a-z0-9]+-delete$ then the broker still has live partitions and it should not be stopped.

You can check this by executing the command:

kubectl exec my-cluster-kafka-0 -c kafka -it -- \
  /bin/bash -c \
  "ls -l /var/lib/kafka/kafka-log<N> | grep -E '^d' | grep -vE '[a-zA-Z0-9.-]+\.[a-z0-9]+-delete$'"

where N is the number of the Pod(s) being deleted.

If the above command prints any output then the broker still has live partitions. In this case, either the reassignment has not finished, or the reassignment JSON file was incorrect.

-

Once you have confirmed that the broker has no live partitions you can edit the Kafka.spec.kafka.replicas of your Kafka resource, which will scale down the StatefulSet, deleting the highest numbered broker Pod(s).
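To see how the regular expression from the live-partition check behaves, you can try it locally on a few sample directory names before running it against a broker. The sketch below applies the same grep -vE filter to names read from standard input; the function live_partition_dirs and the sample names are made up for illustration.

```shell
# Hypothetical helper: read directory names from standard input and print
# only those that are NOT marked for deletion, that is, live partition logs.
live_partition_dirs() {
  # grep exits with status 1 when nothing is printed; treat that as success.
  grep -vE '[a-zA-Z0-9.-]+\.[a-z0-9]+-delete$' || true
}

# One live partition directory and one directory marked for deletion.
printf '%s\n' 'topic-a-4' 'topic-b-2.0123456789abcdef-delete' | live_partition_dirs
# prints topic-a-4
```

Empty output corresponds to the situation in which the broker has no live partitions left.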

2.1.5. Retrieving JMX metrics with JmxTrans

JmxTrans is a tool for retrieving JMX metrics data from Java processes and pushing that data, in various formats, to remote sinks inside or outside the cluster. JmxTrans can communicate with a secure JMX port.

Strimzi supports using JmxTrans to read JMX metrics from Kafka brokers.

JmxTrans reads JMX metrics data from secure or insecure Kafka brokers and pushes the data to remote sinks in various data formats. For example, JmxTrans can obtain JMX metrics about the request rate of each Kafka broker’s network and then push the data to a Logstash database outside the Kubernetes cluster.

Configuring a JmxTrans deployment

-

A running Kubernetes cluster

You can configure a JmxTrans deployment by using the Kafka.spec.jmxTrans property.

A JmxTrans deployment can read from a secure or insecure Kafka broker.

To configure a JmxTrans deployment, define the following properties:

-

Kafka.spec.jmxTrans.outputDefinitions

-

Kafka.spec.jmxTrans.kafkaQueries

For more information on these properties, see the JmxTransSpec schema reference.

|

Note

|

To use JmxTrans, jmxOptions must be configured on the Kafka broker.

|

Configuring JmxTrans output definitions

Output definitions specify where JMX metrics are pushed to, and in which data format.

For information about supported data formats, see Data formats.

The flushDelay property configures how many seconds the JmxTrans agent waits before pushing new data.

The host and port properties define the target host address and target port the data is pushed to.

The name property is a required property that is referenced by the Kafka.spec.jmxTrans.kafkaQueries property.

Here is an example configuration pushing JMX data in the Graphite format every 5 seconds to a Logstash database on http://myLogstash:9999, and another pushing to standardOut (standard output):

apiVersion: kafka.strimzi.io/v1beta2
kind: Kafka
metadata:
  name: my-cluster
spec:
  jmxTrans:
    outputDefinitions:
      - outputType: "com.googlecode.jmxtrans.model.output.GraphiteWriter"
        host: "http://myLogstash"
        port: 9999
        flushDelay: 5
        name: "logstash"
      - outputType: "com.googlecode.jmxtrans.model.output.StdOutWriter"
        name: "standardOut"
        # ...
    # ...
  zookeeper:
    # ...

Configuring JmxTrans queries

JmxTrans queries specify what JMX metrics are read from the Kafka brokers.

Currently, JmxTrans queries can only be sent to the Kafka brokers.

Configure the targetMBean property to specify which target MBean on the Kafka broker is addressed.

Configure the attributes property to specify which MBean attributes are read as JMX metrics from the target MBean.

JmxTrans supports wildcards to read from target MBeans, and filtering by specifying the typeNames property.

The outputs property defines where the metrics are pushed to by specifying the name of the output definitions.

The following JmxTrans deployment reads from all MBeans that match the pattern kafka.server:type=BrokerTopicMetrics,name=* and have name in the target MBean’s name.

From those MBeans, it obtains JMX metrics about the Count attribute and pushes the metrics to standard output as defined by outputs.

apiVersion: kafka.strimzi.io/v1beta2
kind: Kafka
metadata:
  name: my-cluster
spec:
  # ...
  jmxTrans:
    kafkaQueries:
      - targetMBean: "kafka.server:type=BrokerTopicMetrics,*"
        typeNames: ["name"]
        attributes: ["Count"]
        outputs: ["standardOut"]
  zookeeper:
    # ...

Additional resources

For more information about JmxTrans, see the JmxTrans GitHub repository.

2.1.6. Maintenance time windows for rolling updates

Maintenance time windows allow you to schedule certain rolling updates of your Kafka and ZooKeeper clusters to start at a convenient time.

Maintenance time windows overview

In most cases, the Cluster Operator only updates your Kafka or ZooKeeper clusters in response to changes to the corresponding Kafka resource.

This enables you to plan when to apply changes to a Kafka resource to minimize the impact on Kafka client applications.

However, some updates to your Kafka and ZooKeeper clusters can happen without any corresponding change to the Kafka resource.

For example, the Cluster Operator will need to perform a rolling restart if a CA (Certificate Authority) certificate that it manages is close to expiry.

While a rolling restart of the pods should not affect availability of the service (assuming correct broker and topic configurations), it could affect performance of the Kafka client applications. Maintenance time windows allow you to schedule such spontaneous rolling updates of your Kafka and ZooKeeper clusters to start at a convenient time. If maintenance time windows are not configured for a cluster then it is possible that such spontaneous rolling updates will happen at an inconvenient time, such as during a predictable period of high load.

Maintenance time window definition

You configure maintenance time windows by entering an array of strings in the Kafka.spec.maintenanceTimeWindows property.

Each string is a cron expression interpreted as being in UTC (Coordinated Universal Time, which for practical purposes is the same as Greenwich Mean Time).

The following example configures a single maintenance time window that starts at midnight and ends at 01:59am (UTC), on Sundays, Mondays, Tuesdays, Wednesdays, and Thursdays:

# ...
maintenanceTimeWindows:
  - "* * 0-1 ? * SUN,MON,TUE,WED,THU *"
# ...

In practice, maintenance windows should be set in conjunction with the Kafka.spec.clusterCa.renewalDays and Kafka.spec.clientsCa.renewalDays properties of the Kafka resource, to ensure that the necessary CA certificate renewal can be completed in the configured maintenance time windows.
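As a rough illustration of how these properties sit together in one Kafka resource, the following sketch pairs a weekday maintenance window with CA renewal periods. The renewalDays values and the window itself are illustrative assumptions, not recommendations, and the comment on field order assumes the Quartz-style cron layout that the examples in this section appear to follow.

```yaml
apiVersion: kafka.strimzi.io/v1beta2
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
  zookeeper:
    # ...
  clusterCa:
    renewalDays: 30   # assumed value: days before expiry when cluster CA renewal begins
  clientsCa:
    renewalDays: 30   # assumed value: days before expiry when clients CA renewal begins
  maintenanceTimeWindows:
    # Assumed field order: seconds minutes hours day-of-month month day-of-week year
    - "* * 0-1 ? * SUN,MON,TUE,WED,THU *"
```

With settings like these, at least one window should open during each renewal period, so that the rolling restart can run before the certificate expires.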

Note

Strimzi does not schedule maintenance operations exactly according to the given windows. Instead, for each reconciliation, it checks whether a maintenance window is currently "open". This means that the start of maintenance operations within a given time window can be delayed by up to the Cluster Operator reconciliation interval. Maintenance time windows must therefore be at least this long.

-

For more information about the Cluster Operator configuration, see Cluster Operator configuration.
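The reconciliation interval mentioned in the note above is set on the Cluster Operator itself, not in the Kafka resource. The following fragment is a sketch only. It assumes the operator runs as a Deployment whose container is named strimzi-cluster-operator and that the interval is set through the STRIMZI_FULL_RECONCILIATION_INTERVAL_MS environment variable. Check the installation files for your version before relying on either name.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: strimzi-cluster-operator   # assumed Deployment name
spec:
  # ...
  template:
    spec:
      containers:
        - name: strimzi-cluster-operator   # assumed container name
          env:
            # Interval between periodic reconciliations, in milliseconds.
            # 120000 (2 minutes) is shown as an illustrative value.
            - name: STRIMZI_FULL_RECONCILIATION_INTERVAL_MS
              value: "120000"
          # ...
```

With an interval like this, a maintenance time window shorter than two minutes could open and close between reconciliations without any update starting.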

Configuring a maintenance time window

You can configure a maintenance time window for rolling updates triggered by supported processes.

-

A Kubernetes cluster.

-

The Cluster Operator is running.

-

Add or edit the maintenanceTimeWindows property in the Kafka resource. For example, to allow maintenance between 0800 and 1059 and between 1400 and 1559 you would set the maintenanceTimeWindows as shown below:

apiVersion: kafka.strimzi.io/v1beta2
kind: Kafka
metadata:
  name: my-cluster
spec:
  kafka:
    # ...
  zookeeper:
    # ...
  maintenanceTimeWindows:
    - "* * 8-10 * * ?"
    - "* * 14-15 * * ?"

-

Create or update the resource:

kubectl apply -f KAFKA-CONFIG-FILE

Performing rolling updates:

2.1.7. Connecting to ZooKeeper from a terminal

Most Kafka CLI tools can connect directly to Kafka, so under normal circumstances you should not need to connect to ZooKeeper. ZooKeeper services are secured with encryption and authentication and are not intended to be used by external applications that are not part of Strimzi.

However, if you want to use Kafka CLI tools that require a connection to ZooKeeper, you can use a terminal inside a ZooKeeper container and connect to localhost:12181 as the ZooKeeper address.

-

A Kubernetes cluster is available.

-

A Kafka cluster is running.

-

The Cluster Operator is running.

-

Open the terminal using the Kubernetes console or run the exec command from your CLI.

For example:

kubectl exec -ti my-cluster-zookeeper-0 -- bin/kafka-topics.sh --list --zookeeper localhost:12181

Be sure to use localhost:12181.

You can now run Kafka commands to ZooKeeper.

2.1.8. Deleting Kafka nodes manually

This procedure describes how to delete an existing Kafka node by using a Kubernetes annotation.

Deleting a Kafka node consists of deleting both the Pod on which the Kafka broker is running and the related PersistentVolumeClaim (if the cluster was deployed with persistent storage).

After deletion, the Pod and its related PersistentVolumeClaim are recreated automatically.

Warning

Deleting a PersistentVolumeClaim can cause permanent data loss. The following procedure should only be performed if you have encountered storage issues.

See the Deploying and Upgrading Strimzi guide for instructions on running a:

-

Find the name of the Pod that you want to delete.

For example, if the cluster is named cluster-name, the pods are named cluster-name-kafka-index, where index starts at zero and ends at the total number of replicas minus one.

-

Annotate the Pod resource in Kubernetes.

Use kubectl annotate:

kubectl annotate pod cluster-name-kafka-index strimzi.io/delete-pod-and-pvc=true

-

Wait for the next reconciliation, when the annotated pod with the underlying persistent volume claim will be deleted and then recreated.
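To confirm that the annotation was applied before the next reconciliation, you can inspect the pod, for example with kubectl get pod followed by the pod name and -o yaml. The fragment below sketches what the relevant metadata might look like. The cluster name my-cluster and the broker index 0 are hypothetical.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: my-cluster-kafka-0   # hypothetical pod name
  annotations:
    # Set by the kubectl annotate step; annotation values are stored as strings
    strimzi.io/delete-pod-and-pvc: "true"
  # ...
spec:
  # ...
```

After the Cluster Operator deletes and recreates the pod, the new pod should no longer carry this annotation.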

2.1.9. Deleting ZooKeeper nodes manually

This procedure describes how to delete an existing ZooKeeper node by using a Kubernetes annotation.

Deleting a ZooKeeper node consists of deleting both the Pod on which ZooKeeper is running and the related PersistentVolumeClaim (if the cluster was deployed with persistent storage).

After deletion, the Pod and its related PersistentVolumeClaim are recreated automatically.

Warning

Deleting a PersistentVolumeClaim can cause permanent data loss. The following procedure should only be performed if you have encountered storage issues.

See the Deploying and Upgrading Strimzi guide for instructions on running a:

-

Find the name of the Pod that you want to delete.

For example, if the cluster is named cluster-name, the pods are named cluster-name-zookeeper-index, where index starts at zero and ends at the total number of replicas minus one.

-

Annotate the Pod resource in Kubernetes.

Use kubectl annotate:

kubectl annotate pod cluster-name-zookeeper-index strimzi.io/delete-pod-and-pvc=true

-

Wait for the next reconciliation, when the annotated pod with the underlying persistent volume claim will be deleted and then recreated.

2.1.10. List of Kafka cluster resources

The following resources are created by the Cluster Operator in the Kubernetes cluster:

cluster-name-cluster-ca

Secret with the Cluster CA private key used to encrypt the cluster communication.

cluster-name-cluster-ca-cert

Secret with the Cluster CA public key. This key can be used to verify the identity of the Kafka brokers.

cluster-name-clients-ca

Secret with the Clients CA private key used to sign user certificates.

cluster-name-clients-ca-cert

Secret with the Clients CA public key. This key can be used to verify the identity of the Kafka users.

cluster-name-cluster-operator-certs

Secret with Cluster Operator keys for communication with Kafka and ZooKeeper.

cluster-name-zookeeper

StatefulSet which is in charge of managing the ZooKeeper node pods.

cluster-name-zookeeper-idx

Pods created by the ZooKeeper StatefulSet.

cluster-name-zookeeper-nodes

Headless Service needed to have DNS resolve the ZooKeeper pods IP addresses directly.

cluster-name-zookeeper-client

Service used by Kafka brokers to connect to ZooKeeper nodes as clients.

cluster-name-zookeeper-config

ConfigMap that contains the ZooKeeper ancillary configuration, and is mounted as a volume by the ZooKeeper node pods.

cluster-name-zookeeper-nodes

Secret with ZooKeeper node keys.

cluster-name-zookeeper

Service account used by the ZooKeeper nodes.

cluster-name-zookeeper

Pod Disruption Budget configured for the ZooKeeper nodes.

cluster-name-network-policy-zookeeper

Network policy managing access to the ZooKeeper services.

data-cluster-name-zookeeper-idx

Persistent Volume Claim for the volume used for storing data for the ZooKeeper node pod idx. This resource will be created only if persistent storage is selected for provisioning persistent volumes to store data.

cluster-name-kafka

StatefulSet which is in charge of managing the Kafka broker pods.

cluster-name-kafka-idx

Pods created by the Kafka StatefulSet.

cluster-name-kafka-brokers

Service needed to have DNS resolve the Kafka broker pods IP addresses directly.

cluster-name-kafka-bootstrap

Service that can be used as bootstrap servers for Kafka clients connecting from within the Kubernetes cluster.

cluster-name-kafka-external-bootstrap

Bootstrap service for clients connecting from outside the Kubernetes cluster. This resource is created only when an external listener is enabled. The old service name will be used for backwards compatibility when the listener name is external and port is 9094.

cluster-name-kafka-pod-id

Service used to route traffic from outside the Kubernetes cluster to individual pods. This resource is created only when an external listener is enabled. The old service name will be used for backwards compatibility when the listener name is external and port is 9094.

cluster-name-kafka-external-bootstrap

Bootstrap route for clients connecting from outside the Kubernetes cluster. This resource is created only when an external listener is enabled and set to type route. The old route name will be used for backwards compatibility when the listener name is external and port is 9094.

cluster-name-kafka-pod-id

Route for traffic from outside the Kubernetes cluster to individual pods. This resource is created only when an external listener is enabled and set to type route. The old route name will be used for backwards compatibility when the listener name is external and port is 9094.

cluster-name-kafka-listener-name-bootstrap

Bootstrap service for clients connecting from outside the Kubernetes cluster. This resource is created only when an external listener is enabled. The new service name will be used for all other external listeners.

cluster-name-kafka-listener-name-pod-id

Service used to route traffic from outside the Kubernetes cluster to individual pods. This resource is created only when an external listener is enabled. The new service name will be used for all other external listeners.

cluster-name-kafka-listener-name-bootstrap

Bootstrap route for clients connecting from outside the Kubernetes cluster. This resource is created only when an external listener is enabled and set to type route. The new route name will be used for all other external listeners.

cluster-name-kafka-listener-name-pod-id

Route for traffic from outside the Kubernetes cluster to individual pods. This resource is created only when an external listener is enabled and set to type route. The new route name will be used for all other external listeners.

cluster-name-kafka-config

ConfigMap which contains the Kafka ancillary configuration and is mounted as a volume by the Kafka broker pods.

cluster-name-kafka-brokers

Secret with Kafka broker keys.

cluster-name-kafka

Service account used by the Kafka brokers.

cluster-name-kafka

Pod Disruption Budget configured for the Kafka brokers.

cluster-name-network-policy-kafka

Network policy managing access to the Kafka services.

strimzi-namespace-name-cluster-name-kafka-init

Cluster role binding used by the Kafka brokers.

cluster-name-jmx