1. Deployment overview

Strimzi simplifies the process of running Apache Kafka within a Kubernetes cluster.

This guide provides instructions for deploying and managing Strimzi. Deployment options and steps are covered using the example installation files included with Strimzi. While the guide highlights important configuration considerations, it does not cover all available options. For a deeper understanding of the Kafka component configuration options, refer to the Strimzi Custom Resource API Reference.

In addition to deployment instructions, the guide offers pre- and post-deployment guidance. It covers setting up and securing client access to your Kafka cluster. Furthermore, it explores additional deployment options such as metrics integration, distributed tracing, and cluster management tools like Cruise Control and the Strimzi Drain Cleaner. You’ll also find recommendations on managing Strimzi and fine-tuning Kafka configuration for optimal performance.

Upgrade instructions are provided for both Strimzi and Kafka, to help keep your deployment up to date.

Strimzi is designed to be compatible with all types of Kubernetes clusters, irrespective of their distribution. Whether your deployment involves public or private clouds, or if you are setting up a local development environment, the instructions in this guide are applicable in all cases.

1.1. Strimzi custom resources

The deployment of Kafka components onto a Kubernetes cluster using Strimzi is highly configurable through the use of custom resources. These resources are created as instances of APIs introduced by Custom Resource Definitions (CRDs), which extend Kubernetes resources.

CRDs act as configuration instructions to describe the custom resources in a Kubernetes cluster, and are provided with Strimzi for each Kafka component used in a deployment, as well as users and topics. CRDs and custom resources are defined as YAML files. Example YAML files are provided with the Strimzi distribution.

CRDs also allow Strimzi resources to benefit from native Kubernetes features like CLI accessibility and configuration validation.

1.1.1. Strimzi custom resource example

CRDs require a one-time installation in a cluster to define the schemas used to instantiate and manage Strimzi-specific resources.

After a new custom resource type is added to your cluster by installing a CRD, you can create instances of the resource based on its specification.

Depending on the cluster setup, installation typically requires cluster admin privileges.

|

Note

|

Access to manage custom resources is limited to Strimzi administrators. For more information, see Designating Strimzi administrators. |

A CRD defines a new kind of resource, such as kind:Kafka, within a Kubernetes cluster.

The Kubernetes API server allows custom resources to be created based on the kind and understands from the CRD how to validate and store the custom resource when it is added to the Kubernetes cluster.

Each Strimzi-specific custom resource conforms to the schema defined by the CRD for the resource’s kind.

The custom resources for Strimzi components have common configuration properties, which are defined under spec.

To understand the relationship between a CRD and a custom resource, let’s look at a sample of the CRD for a Kafka topic.

apiVersion: apiextensions.k8s.io/v1

kind: CustomResourceDefinition

metadata: (1)

name: kafkatopics.kafka.strimzi.io

labels:

app: strimzi

spec: (2)

group: kafka.strimzi.io

versions:

v1

scope: Namespaced

names:

# ...

singular: kafkatopic

plural: kafkatopics

shortNames:

- kt (3)

additionalPrinterColumns: (4)

# ...

subresources:

status: {} (5)

validation: (6)

openAPIV3Schema:

properties:

spec:

type: object

properties:

partitions:

type: integer

minimum: 1

replicas:

type: integer

minimum: 1

maximum: 32767

# ...-

The metadata for the topic CRD, its name and a label to identify the CRD.

-

The specification for this CRD, including the group (domain) name, the plural name and the supported schema version, which are used in the URL to access the API of the topic. The other names are used to identify instance resources in the CLI. For example,

kubectl get kafkatopic my-topicorkubectl get kafkatopics. -

The shortname can be used in CLI commands. For example,

kubectl get ktcan be used as an abbreviation instead ofkubectl get kafkatopic. -

The information presented when using a

getcommand on the custom resource. -

The current status of the CRD as described in the schema reference for the resource.

-

openAPIV3Schema validation provides validation for the creation of topic custom resources. For example, a topic requires at least one partition and one replica.

|

Note

|

You can identify the CRD YAML files supplied with the Strimzi installation files, because the file names contain an index number followed by ‘Crd’. |

Here is a corresponding example of a KafkaTopic custom resource.

apiVersion: kafka.strimzi.io/v1

kind: KafkaTopic (1)

metadata:

name: my-topic

labels:

strimzi.io/cluster: my-cluster (2)

spec: (3)

partitions: 1

replicas: 1

config:

retention.ms: 7200000

segment.bytes: 1073741824

status:

conditions: (4)

- lastTransitionTime: "2019-08-20T11:37:00.706Z"

status: "True"

type: Ready

observedGeneration: 1

# ...-

The

kindandapiVersionidentify the CRD of which the custom resource is an instance. -

A label, applicable only to

KafkaTopicandKafkaUserresources, that defines the name of the Kafka cluster (which is same as the name of theKafkaresource) to which a topic or user belongs. -

The spec shows the number of partitions and replicas for the topic as well as the configuration parameters for the topic itself. In this example, the retention period for a message to remain in the topic and the segment file size for the log are specified.

-

Status conditions for the

KafkaTopicresource. Thetypecondition changed toReadyat thelastTransitionTime.

Custom resources can be applied to a cluster through the platform CLI. When the custom resource is created, it uses the same validation as the built-in resources of the Kubernetes API.

After a KafkaTopic custom resource is created, the Topic Operator is notified and corresponding Kafka topics are created in Strimzi.

1.1.2. Performing kubectl operations on custom resources

You can use kubectl commands to retrieve information and perform other operations on Strimzi custom resources.

Use kubectl commands, such as get, describe, edit, or delete, to perform operations on resource types.

For example, kubectl get kafkatopics retrieves a list of all Kafka topics and kubectl get kafkas retrieves all deployed Kafka clusters.

When referencing resource types, you can use both singular and plural names:

kubectl get kafkas gets the same results as kubectl get kafka.

You can also use the short name of the resource.

Learning short names can save you time when managing Strimzi.

The short name for Kafka is k, so you can also run kubectl get k to list all Kafka clusters.

kubectl get k

NAME READY METADATA STATE WARNINGS

my-cluster True KRaft| Strimzi resource | Long name | Short name |

|---|---|---|

Kafka |

kafka |

k |

Kafka Node Pool |

kafkanodepool |

knp |

Kafka Topic |

kafkatopic |

kt |

Kafka User |

kafkauser |

ku |

Kafka Connect |

kafkaconnect |

kc |

Kafka Connector |

kafkaconnector |

kctr |

Kafka MirrorMaker 2 |

kafkamirrormaker2 |

kmm2 |

HTTP Bridge |

kafkabridge |

kb |

Kafka Rebalance |

kafkarebalance |

kr |

Strimzi Pod Set |

strimzipodset |

sps |

Resource categories

Categories of custom resources can also be used in kubectl commands.

All Strimzi custom resources belong to the category strimzi, so you can use strimzi to get all the Strimzi resources with one command.

For example, running kubectl get strimzi lists all Strimzi custom resources in a given namespace.

kubectl get strimzi

NAME PODS READY PODS CURRENT PODS AGE

strimzipodset.core.strimzi.io/my-cluster-brokers 3 3 3 6h11m

strimzipodset.core.strimzi.io/my-cluster-controllers 3 3 3 6h11m

NAME DESIRED REPLICAS ROLES NODEIDS

kafkanodepool.kafka.strimzi.io/brokers 3 ["broker"] [3,4,5]

kafkanodepool.kafka.strimzi.io/controllers 3 ["controller"] [0,1,2]

NAME READY METADATA STATE WARNINGS

kafka.kafka.strimzi.io/my-cluster True KRaft

NAME PARTITIONS REPLICATION FACTOR

kafkatopic.kafka.strimzi.io/kafka-apps 3 3

NAME AUTHENTICATION AUTHORIZATION

kafkauser.kafka.strimzi.io/my-user tls simpleThe kubectl get strimzi -o name command returns all resource types and resource names.

The -o name option fetches the output in the type/name format

kubectl get strimzi -o name

strimzipodset.core.strimzi.io/my-cluster-brokers

strimzipodset.core.strimzi.io/my-cluster-controllers

kafkanodepool.kafka.strimzi.io/brokers

kafkanodepool.kafka.strimzi.io/controllers

kafka.kafka.strimzi.io/my-cluster

kafkatopic.kafka.strimzi.io/kafka-apps

kafkauser.kafka.strimzi.io/my-userYou can combine this strimzi command with other commands.

For example, you can pass it into a kubectl delete command to delete all resources in a single command.

kubectl delete $(kubectl get strimzi -o name)

strimzipodset.core.strimzi.io "my-cluster-brokers" deleted

strimzipodset.core.strimzi.io "my-cluster-controllers" deleted

kafkanodepool.kafka.strimzi.io "brokers" deleted

kafkanodepool.kafka.strimzi.io "controllers" deleted

kafka.kafka.strimzi.io "my-cluster" deleted

kafkatopic.kafka.strimzi.io "kafka-apps" deleted

kafkauser.kafka.strimzi.io "my-user" deletedDeleting all resources in a single operation might be useful, for example, when you are testing new Strimzi features.

Querying the status of sub-resources

There are other values you can pass to the -o option.

For example, by using -o yaml you get the output in YAML format.

Using -o json will return it as JSON.

You can see all the options in kubectl get --help.

One of the most useful options is the JSONPath support, which allows you to pass JSONPath expressions to query the Kubernetes API. A JSONPath expression can extract or navigate specific parts of any resource.

For example, you can use the JSONPath expression {.status.listeners[?(@.name=="tls")].bootstrapServers}

to get the bootstrap address from the status of the Kafka custom resource and use it in your Kafka clients.

Here, the command retrieves the bootstrapServers value of the listener named tls:

kubectl get kafka my-cluster -o=jsonpath='{.status.listeners[?(@.name=="tls")].bootstrapServers}{"\n"}'

my-cluster-kafka-bootstrap.myproject.svc:9093By changing the name condition you can also get the address of the other Kafka listeners.

You can use jsonpath to extract any other property or group of properties from any custom resource.

1.1.3. Strimzi custom resource status information

Status properties provide status information for certain custom resources.

The following table lists the custom resources that provide status information (when deployed) and the schemas that define the status properties.

| Strimzi resource | Schema reference | Publishes status information on… |

|---|---|---|

|

|

The Kafka cluster, its listeners, node pools, and any auto-rebalances on scaling |

|

|

The nodes in the node pool, their roles, and the associated Kafka cluster |

|

|

Kafka topics in the Kafka cluster |

|

|

Kafka users in the Kafka cluster |

|

|

The Kafka Connect cluster and connector plugins |

|

|

KafkaConnector resources |

|

|

The Kafka MirrorMaker 2 cluster and internal connectors |

|

|

The HTTP Bridge |

|

|

The status and results of a rebalance |

|

|

The number of pods: being managed, using the current version, and in a ready state |

The status property of a resource provides information on the state of the resource.

The status.conditions and status.observedGeneration properties are common to all resources.

status.conditions-

Status conditions describe the current state of a resource. Status condition properties are useful for tracking progress related to the resource achieving its desired state, as defined by the configuration specified in its

spec. Status condition properties provide the time and reason the state of the resource changed, and details of events preventing or delaying the operator from realizing the desired state. status.observedGeneration-

Last observed generation denotes the latest reconciliation of the resource by the Cluster Operator. If the value of

observedGenerationis different from the value ofmetadata.generation(the current version of the deployment), the operator has not yet processed the latest update to the resource. If these values are the same, the status information reflects the most recent changes to the resource.

The status properties also provide resource-specific information.

For example, KafkaStatus provides information on listener addresses, and the ID of the Kafka cluster.

KafkaStatus also provides information on the Kafka and Strimzi versions being used.

You can check the values of operatorLastSuccessfulVersion and kafkaVersion to determine whether an upgrade of Strimzi or Kafka has completed

Strimzi creates and maintains the status of custom resources, periodically evaluating the current state of the custom resource and updating its status accordingly.

When performing an update on a custom resource using kubectl edit, for example, its status is not editable. Moreover, changing the status would not affect the configuration of the Kafka cluster.

Here we see the status properties for a Kafka custom resource.

apiVersion: kafka.strimzi.io/v1

kind: Kafka

metadata:

spec:

# ...

status:

clusterId: XP9FP2P-RByvEy0W4cOEUA

conditions:

- lastTransitionTime: '2023-01-20T17:56:29.396588Z'

status: 'True'

type: Ready

kafkaMetadataState: KRaft

kafkaVersion: 4.2.0

kafkaNodePools:

- name: broker

- name: controller

listeners:

- addresses:

- host: my-cluster-kafka-bootstrap.prm-project.svc

port: 9092

bootstrapServers: 'my-cluster-kafka-bootstrap.prm-project.svc:9092'

name: plain

- addresses:

- host: my-cluster-kafka-bootstrap.prm-project.svc

port: 9093

bootstrapServers: 'my-cluster-kafka-bootstrap.prm-project.svc:9093'

certificates:

- |

-----BEGIN CERTIFICATE-----

-----END CERTIFICATE-----

name: tls

- addresses:

- host: >-

2054284155.us-east-2.elb.amazonaws.com

port: 9095

bootstrapServers: >-

2054284155.us-east-2.elb.amazonaws.com:9095

certificates:

- |

-----BEGIN CERTIFICATE-----

-----END CERTIFICATE-----

name: external3

- addresses:

- host: ip-10-0-172-202.us-east-2.compute.internal

port: 31644

bootstrapServers: 'ip-10-0-172-202.us-east-2.compute.internal:31644'

certificates:

- |

-----BEGIN CERTIFICATE-----

-----END CERTIFICATE-----

name: external4

observedGeneration: 3

operatorLastSuccessfulVersion: latest-

clusterIdshows the Kafka cluster ID. -

conditionsdescribe the current state of the Kafka cluster. -

The

Readycondition indicates that the Cluster Operator considers the Kafka cluster ready to handle traffic. -

kafkaMetadataStateshows that KRaft is managing Kafka metadata and coordinating operations. -

kafkaVersionshows the Kafka version used by the cluster. -

kafkaNodePoolslists the node pools that belong to the Kafka cluster. -

listenersdescribe the Kafka bootstrap addresses for each listener type. -

observedGenerationindicates the last reconciliation of theKafkacustom resource by the Cluster Operator. -

operatorLastSuccessfulVersionshows the operator version that successfully completed the last reconciliation.

|

Note

|

The Kafka bootstrap addresses listed in the status do not signify that those endpoints or the Kafka cluster is in a Ready state.

|

1.1.4. Finding the status of a custom resource

Use kubectl with the status subresource of a custom resource to retrieve information about the resource.

-

A Kubernetes cluster.

-

The Cluster Operator is running.

-

Specify the custom resource and use the

-o jsonpathoption to apply a standard JSONPath expression to select thestatusproperty:kubectl get kafka <kafka_resource_name> -o jsonpath='{.status}' | jqThis expression returns all the status information for the specified custom resource. You can use dot notation, such as

status.listenersorstatus.observedGeneration, to fine-tune the status information you wish to see.Using the

jqcommand line JSON parser tool makes it easier to read the output.

1.2. Strimzi operators

Strimzi uses operators to deploy and manage Kafka components.

The operators continuously monitor Strimzi custom resources (like Kafka, KafkaTopic, and KafkaUser) and reconcile the state of Kafka components to match their configuration.

This reconciliation process involves three main operations:

- Creation

-

When you create a Strimzi custom resource, the responsible operator detects it and takes the necessary actions to create the component. This might involve creating Kubernetes resources, such as

Deployment,Pod,Service, andConfigMap, or configuring items inside the Kafka cluster itself, such as topics and users. - Update

-

Each time you update a custom resource, the operator detects the change and applies a corresponding update if the changes are valid. This could trigger a rolling update of pods or reconfigure a resource within Kafka. Rolling updates maintain the availability of the Kafka cluster, but can lead to service disruption in the Kafka clients.

- Deletion

-

When you delete a custom resource, the operator detects the deletion and acts to remove the component. Most dependent resources are deleted automatically by Kubernetes garbage collection. The exact behavior depends on the resource type. For a Kafka cluster, PVCs are retained by default to prevent data loss. For a Kafka topic, the topic is fully deleted from the Apache Kafka cluster.

Strimzi provides the following operators, each responsible for different aspects of a Kafka deployment:

- Cluster Operator (required)

-

The Cluster Operator is the core operator and must be deployed first. It handles the deployment and lifecycle of Apache Kafka clusters on Kubernetes, automating the setup of Kafka nodes and related resources.

Additionally, Strimzi provides Drain Cleaner, which is deployed separately. Drain Cleaner supports the Cluster Operator in managing pod evictions for Kafka clusters.

- Entity Operator (recommended)

-

The Entity Operator can be deployed by the Cluster Operator. It runs in a single pod and includes one or both of the following operators:

-

Topic Operator to manage Kafka topics.

-

User Operator to manage Kafka users.

Each operator runs in a separate container within the Entity Operator pod.

-

- Access Operator (optional)

-

Manages and shares Kafka cluster connection details. It is deployed independently of the Cluster Operator.

|

Note

|

The Topic Operator and User Operator can also be deployed standalone (without the Entity Operator) to manage topics and users for a Kafka cluster that is not managed by Strimzi. |

1.2.1. Operator-watched Kafka resources

Operators watch and manage Kafka resources within defined Kubernetes namespaces. The namespace scope of where each operator can watch these resources differs.

You can choose the namespace scope for the Cluster Operator. The Topic Operator and the User Operator can only watch a single Kafka cluster in a namespace. And they can only be connected to a single Kafka cluster.

|

Warning

|

While the operator can be configured to watch multiple namespaces, each watched namespace should contain only one instance of a specific component type, such as one Kafka cluster, to avoid conflicts. |

| Operator | Watched resources | Namespace scope |

|---|---|---|

Cluster Operator |

|

Single, multiple, or all |

Topic Operator |

|

Single namespace (one Kafka cluster only) |

User Operator |

|

Single namespace (one Kafka cluster only) |

Access Operator |

|

Single or all |

|

Note

|

For a standalone deployment of the Topic Operator or User Operator, you specify a namespace and connection to the Kafka cluster to watch in the configuration. |

1.2.2. Managing RBAC resources

The Cluster Operator creates and manages role-based access control (RBAC) resources for Strimzi components that need access to Kubernetes resources.

For the Cluster Operator to function, it needs permission within the Kubernetes cluster to interact with Kafka resources, such as Kafka and KafkaConnect, as well as managed resources like ConfigMap, Pod, Deployment, and Service.

Permission is specified through the following Kubernetes RBAC resources:

-

ServiceAccount -

RoleandClusterRole -

RoleBindingandClusterRoleBinding

Delegating privileges to Strimzi components

The Cluster Operator runs under a service account called strimzi-cluster-operator, which is assigned cluster roles that give it permission to create the necessary RBAC resources for Strimzi components.

Role bindings associate the cluster roles with the service account.

Kubernetes enforces privilege escalation prevention, meaning the Cluster Operator cannot grant privileges it does not possess, nor can it grant such privileges in a namespace it cannot access. Consequently, the Cluster Operator must have the necessary privileges for all the components it orchestrates.

The Cluster Operator must be able to do the following:

-

Enable the Topic Operator to manage

KafkaTopicresources by creatingRoleandRoleBindingresources in the relevant namespace. -

Enable the User Operator to manage

KafkaUserresources by creatingRoleandRoleBindingresources in the relevant namespace. -

Allow Strimzi to discover the failure domain of a

Nodeby creating aClusterRoleBinding.

When using rack-aware partition assignment, broker pods need to access information about the Node they are running on, such as the Availability Zone in Amazon AWS.

Similarly, when using NodePort type listeners, broker pods need to advertise the address of the Node they are running on.

Since a Node is a cluster-scoped resource, this access must be granted through a ClusterRoleBinding, not a namespace-scoped RoleBinding.

The following sections describe the RBAC resources required by the Cluster Operator.

ClusterRole resources

The Cluster Operator uses ClusterRole resources to provide the necessary access to resources.

Depending on the Kubernetes cluster setup, a cluster administrator might be needed to create the cluster roles.

|

Note

|

Cluster administrator rights are only needed for the creation of ClusterRole resources.

The Cluster Operator will not run under a cluster admin account.

|

The RBAC resources follow the principle of least privilege and contain only those privileges needed by the Cluster Operator to operate the cluster of the Kafka component.

All cluster roles are required by the Cluster Operator in order to delegate privileges.

| Name | Description |

|---|---|

|

Access rights for namespace-scoped resources used by the Cluster Operator to deploy and manage the operands. |

|

Access rights for cluster-scoped resources used by the Cluster Operator to deploy and manage the operands. |

|

Access rights used by the Cluster Operator for leader election. |

|

Access rights used by the Cluster Operator to watch and manage the Strimzi custom resources. |

|

Access rights to allow Kafka brokers to get the topology labels from Kubernetes worker nodes when rack-awareness is used. |

|

Access rights used by the Topic and User Operators to manage Kafka users and topics. |

|

Access rights to allow Kafka Connect, MirrorMaker (1 and 2), and HTTP Bridge to get the topology labels from Kubernetes worker nodes when rack-awareness is used. |

ClusterRoleBinding resources

The Cluster Operator uses ClusterRoleBinding and RoleBinding resources to associate its ClusterRole with its ServiceAccount.

Cluster role bindings are required by cluster roles containing cluster-scoped resources.

| Name | Description |

|---|---|

|

Grants the Cluster Operator the rights from the |

|

Grants the Cluster Operator the rights from the |

|

Grants the Cluster Operator the rights from the |

| Name | Description |

|---|---|

|

Grants the Cluster Operator the rights from the |

|

Grants the Cluster Operator the rights from the |

|

Grants the Cluster Operator the rights from the |

|

Grants the Cluster Operator the rights from the |

ServiceAccount resources

The Cluster Operator runs using the strimzi-cluster-operator ServiceAccount.

This service account grants it the privileges it requires to manage the operands.

The Cluster Operator creates additional ClusterRoleBinding and RoleBinding resources to delegate some of these RBAC rights to the operands.

Each of the operands uses its own service account created by the Cluster Operator. This allows the Cluster Operator to follow the principle of least privilege and give the operands only the access rights that are really need.

| Name | Used by |

|---|---|

|

Kafka broker pods |

|

Entity Operator |

|

Cruise Control pods |

|

Kafka Exporter pods |

|

Kafka Connect pods |

|

MirrorMaker pods |

|

MirrorMaker 2 pods |

|

HTTP Bridge pods |

1.2.3. Managing pod resources

The StrimziPodSet custom resource is used by Strimzi to create and manage Kafka, Kafka Connect, and MirrorMaker 2 pods.

You must not create, update, or delete StrimziPodSet resources.

The StrimziPodSet custom resource is used internally and resources are managed solely by the Cluster Operator.

As a consequence, the Cluster Operator must be running properly to avoid the possibility of pods not starting and Kafka clusters not being available.

|

Note

|

Kubernetes Deployment resources are used for creating and managing the pods of other components.

|

1.2.4. Lock acquisition warnings for cluster operations

The Cluster Operator ensures that only one operation runs at a time for each cluster by using locks. If another operation attempts to start while a lock is held, it waits until the current operation completes.

Operations such as cluster creation, rolling updates, scaling down, and scaling up are managed by the Cluster Operator.

If acquiring a lock takes longer than the configured timeout (STRIMZI_OPERATION_TIMEOUT_MS), a DEBUG message is logged:

DEBUG AbstractOperator:406 - Reconciliation #55(timer) Kafka(myproject/my-cluster): Failed to acquire lock lock::myproject::Kafka::my-cluster within 10000ms.Timed-out operations are retried during the next periodic reconciliation in intervals defined by STRIMZI_FULL_RECONCILIATION_INTERVAL_MS (by default 120 seconds).

If an INFO message continues to appear with the same same reconciliation number, it might indicate a lock release error:

INFO AbstractOperator:399 - Reconciliation #1(watch) Kafka(myproject/my-cluster): Reconciliation is in progressRestarting the Cluster Operator can resolve such issues.

1.3. Using the HTTP Bridge to connect with a Kafka cluster

You can use the HTTP Bridge API to create and manage consumers and send and receive records over HTTP rather than the native Kafka protocol.

When you set up the HTTP Bridge you configure HTTP access to the Kafka cluster. You can then use the HTTP Bridge to produce and consume messages from the cluster, as well as performing other operations through its REST interface.

-

For information on installing and using the HTTP Bridge, see Using the HTTP Bridge.

1.4. Seamless FIPS support

Federal Information Processing Standards (FIPS) are standards for computer security and interoperability. When running Strimzi on a FIPS-enabled Kubernetes cluster, the OpenJDK used in Strimzi container images automatically switches to FIPS mode. From version 0.33, Strimzi can run on FIPS-enabled Kubernetes clusters without any changes or special configuration. It uses only the FIPS-compliant security libraries from the OpenJDK.

|

Important

|

If you are using FIPS-enabled Kubernetes clusters, you may experience higher memory consumption compared to regular Kubernetes clusters. To avoid any issues, we suggest increasing the memory request to at least 512Mi. |

1.4.1. NIST validation

Strimzi is designed to use FIPS-validated cryptographic libraries for secure communication in a FIPS-enabled Kubernetes cluster. However, it’s important to note that while Strimzi can leverage these libraries in a FIPS environment, the underlying Universal Base Images (UBI) used in Strimzi deployments may not inherently include NIST-validated binaries. This means that while Strimzi can leverage cryptographic libraries for FIPS, the specific binaries within the Strimzi container images might not have undergone NIST validation.

For more information about the NIST validation program and validated modules, see Cryptographic Module Validation Program on the NIST website.

1.4.2. Minimum password length

When running in the FIPS mode, SCRAM-SHA-512 passwords need to be at least 32 characters long. From Strimzi 0.33, the default password length in Strimzi User Operator is set to 32 characters as well. If you have a Kafka cluster with custom configuration that uses a password length that is less than 32 characters, you need to update your configuration. If you have any users with passwords shorter than 32 characters, you need to regenerate a password with the required length. You can do that, for example, by deleting the user secret and waiting for the User Operator to create a new password with the appropriate length.

1.5. Document conventions

User-replaced values, also known as replaceables, are shown in with angle brackets (< >).

Underscores ( _ ) are used for multi-word values.

If the value refers to code or commands, monospace is also used.

For example, the following code shows that <my_namespace> must be replaced by the correct namespace name:

sed -i 's/namespace: .*/namespace: <my_namespace>/' install/cluster-operator/*RoleBinding*.yaml2. Using Kafka in KRaft mode

KRaft (Kafka Raft metadata) mode replaces Kafka’s dependency on ZooKeeper for cluster management. KRaft mode simplifies the deployment and management of Kafka clusters by bringing metadata management and coordination of clusters into Kafka.

Kafka in KRaft mode is designed to offer enhanced reliability, scalability, and throughput. Metadata operations become more efficient as they are directly integrated. And by removing the need to maintain a ZooKeeper cluster, there’s also a reduction in the operational and security overhead.

To deploy a Kafka cluster in KRaft mode, you must use Kafka and KafkaNodePool custom resources.

For more details and examples, see Deploying a Kafka cluster.

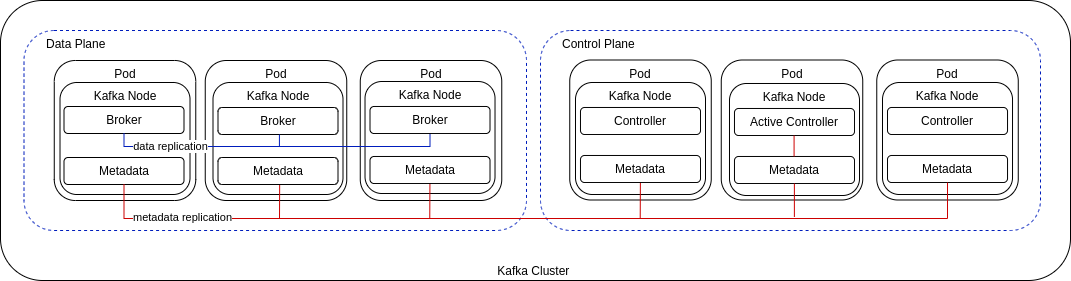

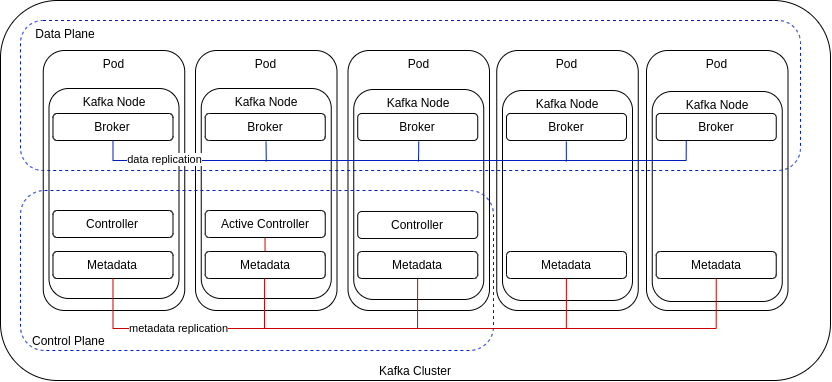

Through node pool configuration using KafkaNodePool resources, nodes are assigned the role of broker, controller, or both:

-

Controller nodes operate in the control plane to manage cluster metadata and the state of the cluster using a Raft-based consensus protocol.

-

Broker nodes operate in the data plane to manage the streaming of messages, receiving and storing data in topic partitions.

-

Dual-role nodes fulfill the responsibilities of controllers and brokers.

Controllers use a metadata log, stored as a single-partition topic (__cluster_metadata) on every node, which records the state of the cluster.

When requests are made to change the cluster configuration, an active (lead) controller manages updates to the metadata log, and follower controllers replicate these updates.

The metadata log stores information on brokers, replicas, topics, and partitions, including the state of in-sync replicas and partition leadership.

Kafka uses this metadata to coordinate changes and manage the cluster effectively.

Broker nodes act as observers, storing the metadata log passively to stay up-to-date with the cluster’s state. Each node fetches updates to the log independently. If you are using JBOD storage, you can change the volume that stores the metadata log.

|

Note

|

The KRaft metadata version used in the Kafka cluster must be supported by the Kafka version in use.

Both versions are managed through the Kafka resource configuration.

For more information, see Configuring Kafka.

|

In the following example, a Kafka cluster comprises a quorum of controller and broker nodes for fault tolerance and high availability.

In a typical production environment, use dedicated broker and controller nodes. However, you might want to use nodes in a dual-role configuration for development or testing.

You can use a combination of nodes that combine roles with nodes that perform a single role. In the following example, three nodes perform a dual role and two nodes act only as brokers.

2.1. KRaft limitations

KRaft limitations primarily relate to controller scaling, which impacts cluster operations.

2.1.1. Controller scaling

KRaft mode supports two types of controller quorums:

-

Static controller quorums

In this mode, the number of controllers is fixed, and scaling requires downtime. -

Dynamic controller quorums

This mode enables dynamic scaling of controllers without downtime. New controllers join as observers, replicate the metadata log, and eventually become eligible to join the quorum. If a controller being removed is the active controller, it will step down from the quorum only after the new quorum is confirmed.

Scaling is useful not only for adding or removing controllers, but supports the following operations:

-

Renaming a node pool, which involves adding a new node pool with the desired name and deleting the old one.

-

Changing non-JBOD storage, which requires creating a new node pool with the updated storage configuration and removing the old one.

Dynamic controller quorums provide the flexibility to make these operations significantly easier to perform.

2.1.2. Limitations with static controller quorums

Migration between static and dynamic controller quorums is not currently supported by Apache Kafka, though it is expected to be introduced in a future release. As a result, Strimzi uses static controller quorums for all deployments, including new installations. All pre-existing KRaft-based Apache Kafka clusters that use static controller quorums must continue using them. To ensure compatibility with existing KRaft-based clusters, Strimzi continues to use static controller quorums as well.

This limitation means dynamic scaling of controller quorums cannot be used to support the following:

-

Adding or removing node pools with controller roles

-

Adding the controller role to an existing node pool

-

Removing the controller role from an existing node pool

-

Scaling a node pool with the controller role

-

Renaming a node pool with the controller role

Static controller quorums also limit operations that require scaling. For example, changing the storage type for a node pool with a controller role is not possible because it involves scaling the controller quorum. For non-JBOD storage, creating a new node pool with the desired storage type, adding it to the cluster, and removing the old one would require scaling operations, which are not supported. In some cases, workarounds are possible. For instance, when modifying node pool roles to combine controller and broker functions, you can add broker roles to controller nodes instead of adding controller roles to broker nodes to avoid controller scaling. However, this approach would require reassigning more data, which may temporarily affect cluster performance.

Once migration is possible, Strimzi plans to assess introducing support for dynamic quorums.

2.2. Migrating ZooKeeper-based Kafka clusters

Kafka 4.0 runs exclusively in KRaft mode, with no ZooKeeper integration. As a result of this change, Strimzi removed support for ZooKeeper-based Kafka clusters starting with version 0.46.

To upgrade to Strimzi 0.46 or later, first migrate any ZooKeeper-based Kafka clusters to KRaft mode.

NOTE: To perform the migration before upgrading, follow the procedure outlined in the Strimzi 0.45.x documentation. For more information, see Migrating to KRaft mode.

3. Deployment methods

You can deploy Strimzi on Kubernetes 1.30 and later using one of the following methods:

| Installation method | Description |

|---|---|

Download the deployment files to manually deploy Strimzi components.

The installation files bundle all the necessary Kubernetes resources, including the Custom Resource Definitions (CRDs) that define resources like |

|

Install Strimzi using the Operator Lifecycle Manager (OLM) from OperatorHub.io. Once the Cluster Operator is running, you can deploy Strimzi components using custom resources. This method provides a standard configuration and supports automatic updates for streamlined lifecycle management. |

|

Use a Helm chart to deploy the Cluster Operator, then deploy Strimzi components using custom resources. Helm charts offer a convenient and repeatable way to manage installations, especially in environments already using Helm. |

|

Note

|

All deployment methods assume that you have access to a running Kubernetes cluster with appropriate permissions. Some methods may also require additional setup, such as access to container registries. |

4. Deployment path

You can configure a deployment where Strimzi manages a single Kafka cluster in the same namespace, suitable for development or testing. Alternatively, Strimzi can manage multiple Kafka clusters across different namespaces in a production environment.

The basic deployment path includes the following steps:

-

Create a Kubernetes namespace for the Cluster Operator.

-

Deploy the Cluster Operator based on your chosen deployment method.

-

Deploy the Kafka cluster, including the Topic Operator and User Operator if desired.

-

Optionally, deploy additional components:

-

The Topic Operator and User Operator as standalone components, if not deployed with the Kafka cluster

-

Kafka Connect

-

Kafka MirrorMaker

-

HTTP Bridge

-

Metrics monitoring components

-

The Cluster Operator creates Kubernetes resources such as Deployment, Service, and Pod for each component.

The resource names are appended with the name of the deployed component.

For example, a Kafka cluster named my-kafka-cluster will have a service named my-kafka-cluster-kafka.

5. Downloading deployment files

To deploy Strimzi components using YAML files, download and extract the latest release archive (strimzi-latest.*) from the GitHub releases page.

The release archive contains sample YAML files for deploying Strimzi components to Kubernetes using kubectl.

Begin by deploying the Cluster Operator from the install/cluster-operator directory to watch a single namespace, multiple namespaces, or all namespaces.

In the install folder, you can also deploy other Strimzi components, including:

-

Strimzi administrator roles (

strimzi-admin) -

Standalone Topic Operator (

topic-operator) -

Standalone User Operator (

user-operator) -

Strimzi Drain Cleaner (

drain-cleaner)

The examples folder provides examples of Strimzi custom resources to help you develop your own Kafka configurations.

|

Note

|

Strimzi container images are available through the Container Registry, but we recommend using the provided YAML files for deployment. |

6. Preparing for your deployment

Prepare for a deployment of Strimzi by completing any necessary pre-deployment tasks. Take the necessary preparatory steps according to your specific requirements, such as the following:

|

Note

|

To run the commands in this guide, your cluster user must have the rights to manage role-based access control (RBAC) and CRDs. |

6.1. Deployment prerequisites

To deploy Strimzi, you will need the following:

-

A Kubernetes 1.30 and later cluster.

-

The

kubectlcommand-line tool is installed and configured to connect to the running cluster.

For more information on the tools available for running Kubernetes, see Install Tools in the Kubernetes documentation.

|

Note

|

Strimzi supports some features that are specific to OpenShift, where such integration benefits OpenShift users and there is no equivalent implementation using standard Kubernetes. |

oc and kubectl commands

The oc command functions as an alternative to kubectl.

In almost all cases the example kubectl commands used in this guide can be done using oc simply by replacing the command name (options and arguments remain the same).

In other words, instead of using:

kubectl apply -f <your_file>when using OpenShift you can use:

oc apply -f <your_file>|

Note

|

As an exception to this general rule, oc uses oc adm subcommands for cluster management functionality,

whereas kubectl does not make this distinction.

For example, the oc equivalent of kubectl taint is oc adm taint.

|

6.2. Planning your Cluster Operator deployment

To support a stable and reliable Strimzi deployment, follow the best practices in this section. Run a single Cluster Operator per Kubernetes cluster, choose an appropriate watch strategy, and isolate components within watched namespaces to reduce the risk of conflicts and unexpected behavior.

6.2.1. Avoiding deployment conflicts

A single operator is capable of managing multiple Kafka clusters across different namespaces. Deploying multiple instances of the Cluster Operator, particularly with different versions, introduces the following risks:

- Resource conflicts

-

Conflicts over cluster-scoped resources like Custom Resource Definitions (CRDs) and ClusterRoles, leading to unpredictable behavior. This conflict occurs even when the operators are deployed in separate namespaces.

- Version incompatibility

-

Different operator versions can create compatibility issues with the Kafka clusters they manage. New Strimzi releases may introduce features, bug fixes, or other changes that are not backward-compatible.

To avoid these risks, the recommended approach to deploying the Cluster Operator is as follows:

- Run a single Cluster Operator

-

Deploy only one Cluster Operator per Kubernetes cluster.

- Consider a dedicated namespace

-

Install the Cluster Operator in its own namespace, separate from the Kafka components it manages. This separation is most useful when the operator is configured to watch multiple namespaces, but it can also help prevent uncontrolled growth of resources in a single namespace.

- Keep everything updated

-

Regularly update Strimzi and the version of Kafka it manages so that you have the latest features, bug fixes, and enhancements.

6.2.2. Choosing namespace watch options

You configure the Cluster Operator to watch for changes to Kafka resources in specific namespaces.

You can configure the operator to watch:

Choosing to watch a specific list of multiple namespaces can have the biggest impact on performance due to increased processing overhead. To optimize performance, the recommended modes are to either watch a single namespace for focused monitoring or all namespaces for a comprehensive view of the entire cluster.

6.2.3. Isolating components in watched namespaces

After deploying the Cluster Operator, it begins watching specified namespaces for changes to Kafka resources. To reduce risks and maintain reliability, isolate component types within each watched namespace. Each namespace should contain only one instance of a given component type, such as one Kafka cluster, to avoid the following types of issues:

-

Conflicting resource names

-

Ambiguity in access management

-

Topic and user name collisions

-

Unpredictable behavior during upgrades or recovery

6.3. Pushing container images to your own registry

Container images for Strimzi are available in the Container Registry. The installation YAML files provided by Strimzi will pull the images directly from the Container Registry.

If you do not have access to the Container Registry or want to use your own container repository:

-

Pull all container images listed here

-

Push them into your own registry

-

Update the image names in the YAML files used in deployment

|

Note

|

Each Kafka version supported for the release has a separate image. |

| Container image | Namespace/Repository | Description |

|---|---|---|

Kafka |

|

Strimzi image for running Kafka, including:

|

Operator |

|

Strimzi image for running the operators:

|

HTTP Bridge |

|

Strimzi image for running the HTTP Bridge |

Strimzi Drain Cleaner |

|

Strimzi image for running the Strimzi Drain Cleaner |

6.4. Designating Strimzi administrators

Strimzi provides custom resources for configuration of your deployment. By default, permission to view, create, edit, and delete these resources is limited to Kubernetes cluster administrators. Strimzi provides two cluster roles that you can use to assign these rights to other users:

-

strimzi-viewallows users to view and list Strimzi resources. -

strimzi-adminallows users to also create, edit or delete Strimzi resources.

When you install these roles, they will automatically aggregate (add) these rights to the default Kubernetes cluster roles.

strimzi-view aggregates to the view role, and strimzi-admin aggregates to the edit and admin roles.

Because of the aggregation, you might not need to assign these roles to users who already have similar rights.

The following procedure shows how to assign a strimzi-admin role that allows non-cluster administrators to manage Strimzi resources.

A system administrator can designate Strimzi administrators after the Cluster Operator is deployed.

-

The Strimzi admin deployment files, which are included in the Strimzi deployment files.

-

The Strimzi Custom Resource Definitions (CRDs) and role-based access control (RBAC) resources to manage the CRDs have been deployed with the Cluster Operator.

-

Create the

strimzi-viewandstrimzi-admincluster roles in Kubernetes.kubectl create -f install/strimzi-admin -

If needed, assign the roles that provide access rights to users that require them.

kubectl create clusterrolebinding strimzi-admin --clusterrole=strimzi-admin --user=user1 --user=user2

7. Deploying Strimzi using installation files

Download and use the Strimzi deployment files to deploy Strimzi components to a Kubernetes cluster.

You can deploy Strimzi latest on Kubernetes 1.30 and later.

The steps to deploy Strimzi using the installation files are as follows:

-

Use the Cluster Operator to deploy the following:

-

Optionally, deploy the following Kafka components according to your requirements:

|

Note

|

To run the commands in this guide, a Kubernetes user must have the rights to manage role-based access control (RBAC) and CRDs. |

7.1. Deploying the Cluster Operator

The first step for any deployment of Strimzi is to install the Cluster Operator, which is responsible for deploying and managing Kafka clusters within a Kubernetes cluster.

A single command applies all the installation files in the install/cluster-operator folder: kubectl apply -f ./install/cluster-operator.

The command sets up everything you need to be able to create and manage a Kafka deployment, including the following resources:

-

Cluster Operator (

Deployment,ConfigMap) -

Strimzi CRDs (

CustomResourceDefinition) -

RBAC resources (

ClusterRole,ClusterRoleBinding,RoleBinding) -

Service account (

ServiceAccount)

Cluster-scoped resources like CustomResourceDefinition, ClusterRole, and ClusterRoleBinding require administrator privileges for installation.

Prior to installation, it’s advisable to review the ClusterRole specifications to ensure they do not grant unnecessary privileges.

After installation, the Cluster Operator runs as a regular Deployment to watch for updates of Kafka resources.

For more information, see Operator-watched resources.

Any standard (non-admin) Kubernetes user with privileges to access the Deployment can configure it.

A cluster administrator can also grant standard users the privileges necessary to manage Strimzi custom resources.

By default, a single replica of the Cluster Operator is deployed. You can add replicas with leader election so that additional Cluster Operators are on standby in case of disruption. For more information, see Running multiple Cluster Operator replicas with leader election.

|

Warning

|

While the operator can be configured to watch multiple namespaces, each watched namespace should contain only one instance of a specific component type, such as one Kafka cluster, to avoid conflicts. |

7.1.1. Deploying the Cluster Operator to watch a single namespace

This procedure shows how to deploy the Cluster Operator to watch Strimzi resources in a single namespace in your Kubernetes cluster.

-

You need an account with permission to create and manage

CustomResourceDefinitionand RBAC (ClusterRole, andRoleBinding) resources.

-

Edit the Strimzi installation files to use the namespace the Cluster Operator is going to be installed into.

For example, in this procedure the Cluster Operator is installed into the namespace

my-cluster-operator-namespace.On Linux, use:

sed -i 's/namespace: .*/namespace: my-cluster-operator-namespace/' install/cluster-operator/*RoleBinding*.yamlOn MacOS, use:

sed -i '' 's/namespace: .*/namespace: my-cluster-operator-namespace/' install/cluster-operator/*RoleBinding*.yaml -

Deploy the Cluster Operator:

kubectl create -f install/cluster-operator -n my-cluster-operator-namespace -

Check the status of the deployment:

kubectl get deployments -n my-cluster-operator-namespaceOutput shows the deployment name and readinessNAME READY UP-TO-DATE AVAILABLE strimzi-cluster-operator 1/1 1 1READYshows the number of replicas that are ready/expected. The deployment is successful when theAVAILABLEoutput shows1.

7.1.2. Deploying the Cluster Operator to watch multiple namespaces

This procedure shows how to deploy the Cluster Operator to watch Strimzi resources across multiple namespaces in your Kubernetes cluster.

-

You need an account with permission to create and manage

CustomResourceDefinitionand RBAC (ClusterRole, andRoleBinding) resources.

-

Edit the Strimzi installation files to use the namespace the Cluster Operator is going to be installed into.

For example, in this procedure the Cluster Operator is installed into the namespace

my-cluster-operator-namespace.On Linux, use:

sed -i 's/namespace: .*/namespace: my-cluster-operator-namespace/' install/cluster-operator/*RoleBinding*.yamlOn MacOS, use:

sed -i '' 's/namespace: .*/namespace: my-cluster-operator-namespace/' install/cluster-operator/*RoleBinding*.yaml -

Edit the

install/cluster-operator/060-Deployment-strimzi-cluster-operator.yamlfile to add a list of all the namespaces the Cluster Operator will watch to theSTRIMZI_NAMESPACEenvironment variable.For example, in this procedure the Cluster Operator will watch the namespaces

watched-namespace-1,watched-namespace-2,watched-namespace-3.apiVersion: apps/v1 kind: Deployment spec: # ... template: spec: serviceAccountName: strimzi-cluster-operator containers: - name: strimzi-cluster-operator image: quay.io/strimzi/operator:latest imagePullPolicy: IfNotPresent env: - name: STRIMZI_NAMESPACE value: watched-namespace-1,watched-namespace-2,watched-namespace-3 -

For each namespace listed, install the

RoleBindings.In this example, we replace

watched-namespacein these commands with the namespaces listed in the previous step, repeating them forwatched-namespace-1,watched-namespace-2,watched-namespace-3:kubectl create -f install/cluster-operator/020-RoleBinding-strimzi-cluster-operator.yaml -n <watched_namespace> kubectl create -f install/cluster-operator/023-RoleBinding-strimzi-cluster-operator.yaml -n <watched_namespace> kubectl create -f install/cluster-operator/031-RoleBinding-strimzi-cluster-operator-entity-operator-delegation.yaml -n <watched_namespace> -

Deploy the Cluster Operator:

kubectl create -f install/cluster-operator -n my-cluster-operator-namespace -

Check the status of the deployment:

kubectl get deployments -n my-cluster-operator-namespaceOutput shows the deployment name and readinessNAME READY UP-TO-DATE AVAILABLE strimzi-cluster-operator 1/1 1 1READYshows the number of replicas that are ready/expected. The deployment is successful when theAVAILABLEoutput shows1.

7.1.3. Deploying the Cluster Operator to watch all namespaces

This procedure shows how to deploy the Cluster Operator to watch Strimzi resources across all namespaces in your Kubernetes cluster.

When running in this mode, the Cluster Operator automatically manages clusters in any new namespaces that are created.

-

You need an account with permission to create and manage

CustomResourceDefinitionand RBAC (ClusterRole, andRoleBinding) resources.

-

Edit the Strimzi installation files to use the namespace the Cluster Operator is going to be installed into.

For example, in this procedure the Cluster Operator is installed into the namespace

my-cluster-operator-namespace.On Linux, use:

sed -i 's/namespace: .*/namespace: my-cluster-operator-namespace/' install/cluster-operator/*RoleBinding*.yamlOn MacOS, use:

sed -i '' 's/namespace: .*/namespace: my-cluster-operator-namespace/' install/cluster-operator/*RoleBinding*.yaml -

Edit the

install/cluster-operator/060-Deployment-strimzi-cluster-operator.yamlfile to set the value of theSTRIMZI_NAMESPACEenvironment variable to*.apiVersion: apps/v1 kind: Deployment spec: # ... template: spec: # ... serviceAccountName: strimzi-cluster-operator containers: - name: strimzi-cluster-operator image: quay.io/strimzi/operator:latest imagePullPolicy: IfNotPresent env: - name: STRIMZI_NAMESPACE value: "*" # ... -

Create

ClusterRoleBindingsthat grant cluster-wide access for all namespaces to the Cluster Operator.kubectl create clusterrolebinding strimzi-cluster-operator-namespaced --clusterrole=strimzi-cluster-operator-namespaced --serviceaccount my-cluster-operator-namespace:strimzi-cluster-operator kubectl create clusterrolebinding strimzi-cluster-operator-watched --clusterrole=strimzi-cluster-operator-watched --serviceaccount my-cluster-operator-namespace:strimzi-cluster-operator kubectl create clusterrolebinding strimzi-cluster-operator-entity-operator-delegation --clusterrole=strimzi-entity-operator --serviceaccount my-cluster-operator-namespace:strimzi-cluster-operator -

Deploy the Cluster Operator to your Kubernetes cluster.

kubectl create -f install/cluster-operator -n my-cluster-operator-namespace -

Check the status of the deployment:

kubectl get deployments -n my-cluster-operator-namespaceOutput shows the deployment name and readinessNAME READY UP-TO-DATE AVAILABLE strimzi-cluster-operator 1/1 1 1READYshows the number of replicas that are ready/expected. The deployment is successful when theAVAILABLEoutput shows1.

7.2. Deploying Kafka

To be able to manage a Kafka cluster with the Cluster Operator, you must deploy it as a Kafka resource.

Strimzi provides example deployment files to do this.

You can use these files to deploy the Topic Operator and User Operator at the same time.

After you have deployed the Cluster Operator, use a Kafka resource to deploy the following components:

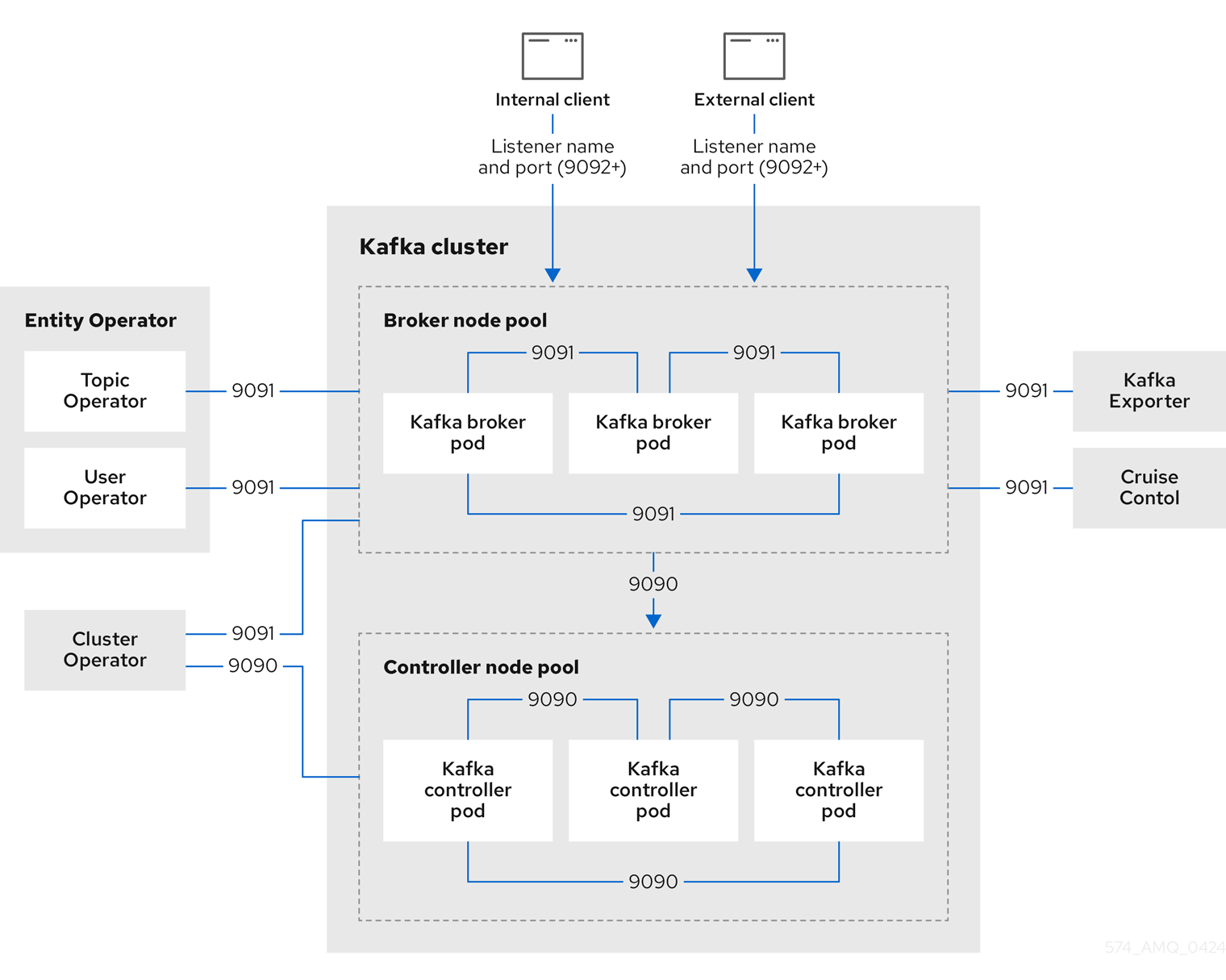

Node pools are used in the deployment of a Kafka cluster.

Node pools represent a distinct group of Kafka nodes within the Kafka cluster that share the same configuration.

For each Kafka node in the node pool, any configuration not defined in node pool is inherited from the cluster configuration in the Kafka resource.

If you haven’t deployed a Kafka cluster as a Kafka resource, you can’t use the Cluster Operator to manage it.

This applies, for example, to a Kafka cluster running outside of Kubernetes.

However, you can use the Topic Operator and User Operator with a Kafka cluster that is not managed by Strimzi, by deploying them as standalone components.

You can also deploy and use other Kafka components with a Kafka cluster not managed by Strimzi.

7.2.1. Deploying a Kafka cluster

This procedure shows how to deploy a Kafka cluster and associated node pools using the Cluster Operator.

The deployment uses a YAML file to provide the specification to create a Kafka resource and KafkaNodePool resources.

Strimzi provides the following example deployment files that you can use to create a Kafka cluster that uses node pools:

kafka/kafka-with-dual-role-nodes.yaml-

Deploys a Kafka cluster with one pool of nodes that share the broker and controller roles.

kafka/kafka-persistent.yaml-

Deploys a persistent Kafka cluster with one pool of controller nodes and one pool of broker nodes.

kafka/kafka-ephemeral.yaml-

Deploys an ephemeral Kafka cluster with one pool of controller nodes and one pool of broker nodes.

kafka/kafka-single-node.yaml-

Deploys a Kafka cluster with a single node.

kafka/kafka-jbod.yaml-

Deploys a Kafka cluster with multiple volumes in each broker node.

In this procedure, we use the example deployment file that deploys a Kafka cluster with one pool of nodes that share the broker and controller roles.

The example YAML files specify the latest supported Kafka version and KRaft metadata version used by the Kafka cluster.

|

Warning

|

When deploying multiple Kafka clusters managed by the Cluster Operator, deploy each cluster into a separate namespace. Deploying multiple clusters in the same namespace can lead to naming conflicts and resource collisions. |

By default, the example deployment files specify my-cluster as the Kafka cluster name.

The name cannot be changed after the cluster has been deployed.

To change the cluster name before you deploy the cluster, edit the Kafka.metadata.name property of the Kafka resource in the relevant YAML file.

-

Deploy a Kafka cluster.

To deploy a Kafka cluster with a single node pool that uses dual-role nodes:

kubectl apply -f examples/kafka/kafka-with-dual-role-nodes.yaml -

Check the status of the deployment:

kubectl get pods -n <my_cluster_operator_namespace>Output shows the node pool names and readinessNAME READY STATUS RESTARTS my-cluster-entity-operator 3/3 Running 0 my-cluster-pool-a-0 1/1 Running 0 my-cluster-pool-a-1 1/1 Running 0 my-cluster-pool-a-4 1/1 Running 0-

my-clusteris the name of the Kafka cluster. -

pool-ais the name of the node pool.A sequential index number starting with

0identifies each Kafka pod created.READYshows the number of replicas that are ready/expected. The deployment is successful when theSTATUSdisplays asRunning.Information on the deployment is also shown in the status of the

KafkaNodePoolresource, including a list of IDs for nodes in the pool.NoteNode IDs are assigned sequentially starting at 0 (zero) across all node pools within a cluster. This means that node IDs might not run sequentially within a specific node pool. If there are gaps in the sequence of node IDs across the cluster, the next node to be added is assigned an ID that fills the gap. When scaling down, the node with the highest node ID within a pool is removed.

-

7.2.2. Deploying the Topic Operator using the Cluster Operator

This procedure describes how to deploy the Topic Operator using the Cluster Operator.

You configure the entityOperator property of the Kafka resource to include the topicOperator.

By default, the Topic Operator watches for KafkaTopic resources in the namespace of the Kafka cluster deployed by the Cluster Operator.

You can also specify a namespace using watchedNamespace in the Topic Operator spec.

A single Topic Operator can watch a single namespace.

One namespace should be watched by only one Topic Operator.

|

Warning

|

Do not deploy more than one Kafka cluster into the same namespace. This causes the Topic Operator connected to each cluster to compete for the same topic resources using the same names, leading to name collisions and unpredictable behavior. |

If you want to use the Topic Operator with a Kafka cluster that is not managed by Strimzi, you must deploy the Topic Operator as a standalone component.

For more information about configuring the entityOperator and topicOperator properties,

see Configuring the Entity Operator.

-

Edit the

entityOperatorproperties of theKafkaresource to includetopicOperator:apiVersion: kafka.strimzi.io/v1 kind: Kafka metadata: name: my-cluster spec: #... entityOperator: topicOperator: {} userOperator: {} -

Configure the Topic Operator

specusing the properties described in theEntityTopicOperatorSpecschema reference.Use an empty object (

{}) if you want all properties to use their default values. -

Create or update the resource:

kubectl apply -f <kafka_configuration_file> -

Check the status of the deployment:

kubectl get pods -n <my_cluster_operator_namespace>Output shows the pod name and readinessNAME READY STATUS RESTARTS my-cluster-entity-operator 3/3 Running 0 # ...my-clusteris the name of the Kafka cluster.READYshows the number of replicas that are ready/expected. The deployment is successful when theSTATUSdisplays asRunning.

7.2.3. Deploying the User Operator using the Cluster Operator

This procedure describes how to deploy the User Operator using the Cluster Operator.

You configure the entityOperator property of the Kafka resource to include the userOperator.

By default, the User Operator watches for KafkaUser resources in the namespace of the Kafka cluster deployment.

You can also specify a namespace using watchedNamespace in the User Operator spec.

A single User Operator can watch a single namespace.

One namespace should be watched by only one User Operator.

|

Warning

|

Do not deploy more than one Kafka cluster into the same namespace.

This can cause the User Operator deployed with each cluster to compete for the same KafkaUser resources, leading to name collisions and unpredictable behavior.

|

If you want to use the User Operator with a Kafka cluster that is not managed by Strimzi, you must deploy the User Operator as a standalone component.

For more information about configuring the entityOperator and userOperator properties, see Configuring the Entity Operator.

-

Edit the

entityOperatorproperties of theKafkaresource to includeuserOperator:apiVersion: kafka.strimzi.io/v1 kind: Kafka metadata: name: my-cluster spec: #... entityOperator: topicOperator: {} userOperator: {} -

Configure the User Operator

specusing the properties described inEntityUserOperatorSpecschema reference.Use an empty object (

{}) if you want all properties to use their default values. -

Create or update the resource:

kubectl apply -f <kafka_configuration_file> -

Check the status of the deployment:

kubectl get pods -n <my_cluster_operator_namespace>Output shows the pod name and readinessNAME READY STATUS RESTARTS my-cluster-entity-operator 3/3 Running 0 # ...my-clusteris the name of the Kafka cluster.READYshows the number of replicas that are ready/expected. The deployment is successful when theSTATUSdisplays asRunning.

7.2.4. List of Kafka cluster resources

The following resources are created by the Cluster Operator in the Kubernetes cluster.

<kafka_cluster_name>-cluster-ca-

Secret with the Cluster CA private key used to encrypt the cluster communication.

<kafka_cluster_name>-cluster-ca-cert-

Secret with the Cluster CA public key. This key can be used to verify the identity of the Kafka brokers.

<kafka_cluster_name>-clients-ca-

Secret with the Clients CA private key used to sign user certificates

<kafka_cluster_name>-clients-ca-cert-

Secret with the Clients CA public key. This key can be used to verify the identity of the Kafka users.

<kafka_cluster_name>-cluster-operator-certs-

Secret with Cluster operators keys for communication with Kafka.

<kafka_cluster_name>-kafka-

Name given to the following Kafka resources:

-

Service account used by the Kafka pods.

-

PodDisruptionBudget that applies to all Kafka pods in the cluster, including broker-only, controller-only, and mixed-role (broker/controller) pods.

-

Role granting the Kafka brokers and controllers access to read their certificates and credentials.

-

<kafka_cluster_name>-kafka-brokers-

Service needed to have DNS resolve the Kafka broker pods IP addresses directly.

<kafka_cluster_name>-kafka-bootstrap-

Service can be used as bootstrap servers for Kafka clients connecting from within the Kubernetes cluster.

<kafka_cluster_name>-kafka-external-bootstrap-

Bootstrap service for clients connecting from outside the Kubernetes cluster. This resource is created only when an external listener is enabled. The old service name will be used for backwards compatibility when the listener name is

externaland port is9094. <kafka_cluster_name>-kafka-external-bootstrap-

Bootstrap route for clients connecting from outside the Kubernetes cluster. This resource is created only when an external listener is enabled and set to type

route. The old route name will be used for backwards compatibility when the listener name isexternaland port is9094. <kafka_cluster_name>-kafka-<listener_name>-bootstrap-

Bootstrap service for clients connecting from outside the Kubernetes cluster. This resource is created only when an external listener is enabled. The new service name will be used for all other external listeners.

<kafka_cluster_name>-kafka-<listener_name>-bootstrap-

Bootstrap route for clients connecting from outside the Kubernetes cluster. This resource is created only when an external listener is enabled and set to type

route. The new route name will be used for all other external listeners. <kafka_cluster_name>-kafka-<listener_name>-bootstrap-

Bootstrap ingress for clients connecting from outside the Kubernetes cluster. This resource is created only when an external listener is enabled and set to type

ingress. The new route name will be used for all other external listeners. <kafka_cluster_name>-network-policy-kafka-

Network policy managing access to the Kafka services.

<kafka_cluster_name>-kafka-role-

Role binding granting the Kafka brokers and controllers access to read their certificates and credentials.

strimzi-namespace-name-<kafka_cluster_name>-kafka-init-

Cluster role binding used by the Kafka brokers.

<kafka_cluster_name>-jmx-

Secret with JMX username and password used to secure the Kafka broker port. This resource is created only when JMX is enabled in Kafka.

The resources that are created per node pool.

The naming convention includes the name of the Kafka cluster and the node pool: <kafka_cluster_name>-<pool_name>.

<kafka_cluster_name>-<pool_name>-

Name given to the StrimziPodSet for managing the Kafka node pool.

<kafka_cluster_name>-<pool_name>-<pod_id>-

Name given to the following Kafka node pool resources:

-

Pods created by the StrimziPodSet.

-

Secret with Kafka node public and private keys.

-

ConfigMaps with Kafka node configuration.

-

Service used to route traffic from outside the Kubernetes cluster to individual pods. This resource is created only when an external listener is enabled. The old service name will be used for backwards compatibility when the listener name is

externaland port is9094. -

Route for traffic from outside the Kubernetes cluster to individual pods. This resource is created only when an external listener is enabled and set to type

route. The old route name will be used for backwards compatibility when the listener name isexternaland port is9094. -

Ingress for traffic from outside the Kubernetes cluster to individual pods. This resource is created only when an external listener is enabled and set to type

ingress. The old ingress name will be used for backwards compatibility when the listener name isexternaland port is9094.

-

<kafka_cluster_name>-<pool_name>-<listener_name>-<pod_id>-

Service used to route traffic from outside the Kubernetes cluster to individual pods, or when using the type

cluster-iplistener. This resource is created only when an external listener is enabled. The new service name will be used for all other external listeners. <kafka_cluster_name>-<pool_name>-<listener_name>-<pod_id>-

Route for traffic from outside the Kubernetes cluster to individual pods. This resource is created only when an external listener is enabled and set to type

route. The new route name will be used for all other external listeners. <kafka_cluster_name>-<pool_name>-<listener_name>-<pod_id>-

Ingress for traffic from outside the Kubernetes cluster to individual pods. This resource is created only when an external listener is enabled and set to type

ingress. The new ingress name will be used for all other external listeners. data-<kafka_cluster_name>-<pool_name>-<pod_id>-

Persistent Volume Claim for the volume used for storing data for a specific node. This resource is created only if persistent storage is selected for provisioning persistent volumes to store data.

data-<id>-<kafka_cluster_name>-<pool_name>-<pod_id>-

Persistent Volume Claim for the volume

idused for storing data for a specific node. This resource is created only if persistent storage is selected for JBOD volumes when provisioning persistent volumes to store data.

These resources are only created if the Entity Operator is deployed using the Cluster Operator.

<kafka_cluster_name>-entity-operator-

Name given to the following Entity Operator resources:

-

Deployment with Topic and User Operators.

-

Service account used by the Entity Operator.

-

Network policy managing access to the Entity Operator metrics.

-

Role granting the Entity Operator the rights to manage topics and users.

-

<kafka_cluster_name>-entity-operator-<random_string>-

Pod created by the Entity Operator deployment.

<kafka_cluster_name>-entity-topic-operator-config-

ConfigMap with ancillary configuration for Topic Operators.

<kafka_cluster_name>-entity-user-operator-config-

ConfigMap with ancillary configuration for User Operators.

<kafka_cluster_name>-entity-topic-operator-certs-

Secret with Topic Operator keys for communication with Kafka.

<kafka_cluster_name>-entity-user-operator-certs-

Secret with User Operator keys for communication with Kafka.

<kafka_cluster_name>-entity-topic-operator-

Role binding used by the Entity Topic Operator.

<kafka_cluster_name>-entity-user-operator-

Role binding used by the Entity User Operator.

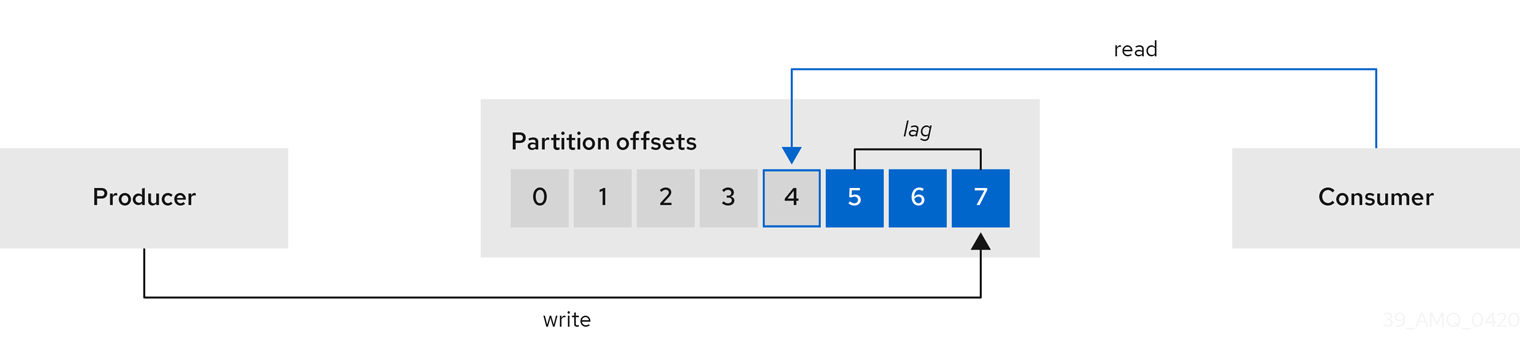

These resources are only created if the Kafka Exporter is deployed using the Cluster Operator.

<kafka_cluster_name>-kafka-exporter-

Name given to the following Kafka Exporter resources:

-

Deployment with Kafka Exporter.

-

Service used to collect consumer lag metrics.

-

Service account used by the Kafka Exporter.

-

Network policy managing access to the Kafka Exporter metrics.

-

<kafka_cluster_name>-kafka-exporter-<random_string>-

Pod created by the Kafka Exporter deployment.

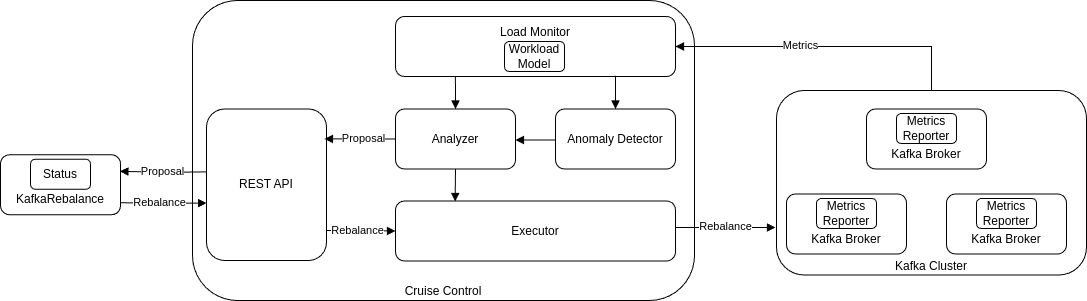

These resources are only created if Cruise Control was deployed using the Cluster Operator.

<kafka_cluster_name>-cruise-control-

Name given to the following Cruise Control resources:

-

Deployment with Cruise Control.

-

Service used to communicate with Cruise Control.

-

Service account used by the Cruise Control.

-

<kafka_cluster_name>-cruise-control-<random_string>-

Pod created by the Cruise Control deployment.

<kafka_cluster_name>-cruise-control-config-

ConfigMap that contains the Cruise Control ancillary configuration, and is mounted as a volume by the Cruise Control pods.

<kafka_cluster_name>-cruise-control-certs-

Secret with Cruise Control keys for communication with Kafka.

<kafka_cluster_name>-network-policy-cruise-control-

Network policy managing access to the Cruise Control service.

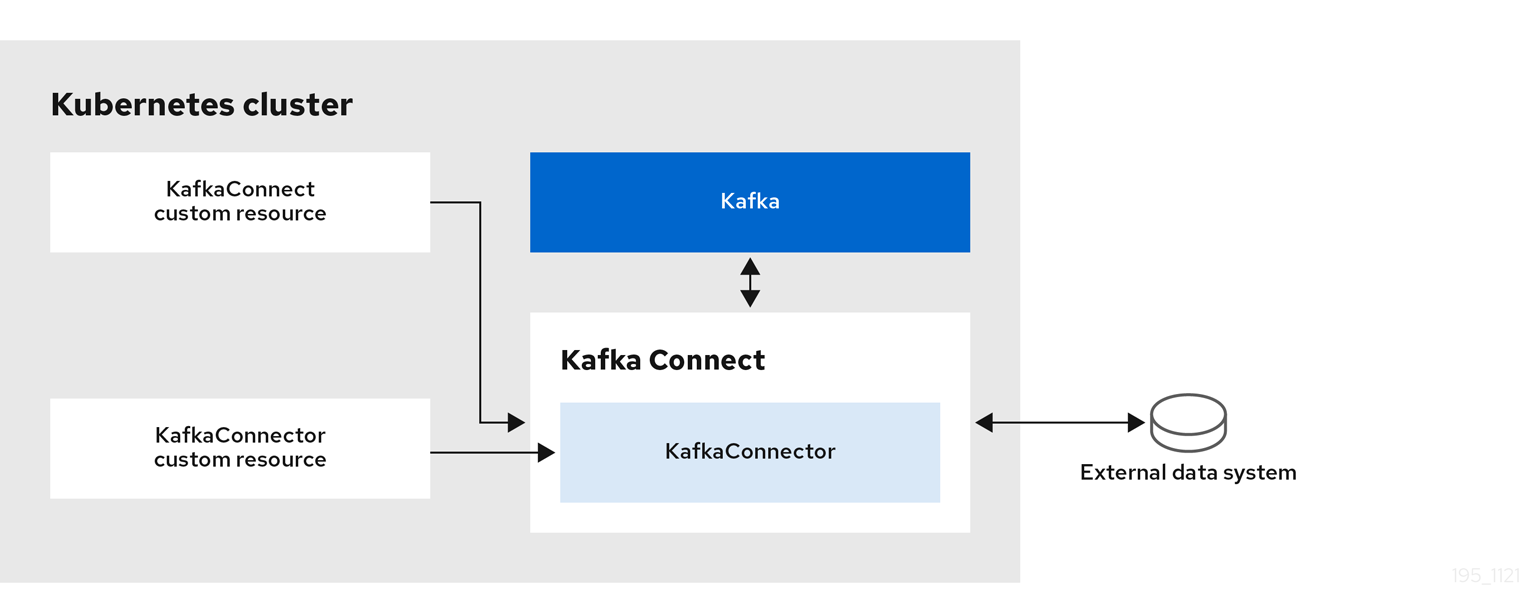

7.3. Deploying Kafka Connect

Kafka Connect is an integration toolkit for streaming data between Kafka brokers and other systems using connector plugins. Kafka Connect provides a framework for integrating Kafka with an external data source or target, such as a database or messaging system, for import or export of data using connectors. Connectors are plugins that provide the connection configuration needed.

In Strimzi, Kafka Connect is deployed in distributed mode. Kafka Connect can also work in standalone mode, but this is not supported by Strimzi.

Using the concept of connectors, Kafka Connect provides a framework for moving large amounts of data into and out of your Kafka cluster while maintaining scalability and reliability.

The Cluster Operator manages Kafka Connect clusters deployed using the KafkaConnect resource and connectors created using the KafkaConnector resource.

In order to use Kafka Connect, you need to do the following.

|

Note

|

The term connector is used interchangeably to mean a connector instance running within a Kafka Connect cluster, or a connector class. In this guide, the term connector is used when the meaning is clear from the context. |

7.3.1. Deploying Kafka Connect

This procedure shows how to deploy a Kafka Connect cluster to your Kubernetes cluster using the Cluster Operator.

A Kafka Connect cluster deployment is implemented with a configurable number of nodes (also called workers) that distribute the workload of connectors as tasks, ensuring a scalable and reliable message flow.

The deployment uses a YAML file to provide the specification to create a KafkaConnect resource.

Strimzi provides example configuration files. In this procedure, we use the following example file:

-

examples/connect/kafka-connect.yaml

|

Warning

|

When deploying multiple Kafka Connect clusters managed by the Cluster Operator, deploy each cluster into a separate namespace. Deploying multiple clusters in the same namespace can lead to naming conflicts and resource collisions. |

-

Cluster Operator is deployed.

-

Kafka cluster is running.

This procedure assumes that the Kafka cluster was deployed using Strimzi.

-

Edit the deployment file to configure connection details (if required).

In

examples/connect/kafka-connect.yaml, add or update the following properties as needed:-

spec.bootstrapServersto specify the Kafka bootstrap address. -

spec.authenticationto specify the authentication type astls,scram-sha-256,scram-sha-512,plain, orcustom. See theKafkaConnectSpecschema properties for configuration details. -

spec.tls.trustedCertificatesto configure the TLS certificate.

Use[](an empty array) to trust the default Java CAs, or specify secrets containing trusted certificates. See thetrustedCertificatesproperties for configuration details.

-

-

Configure the deployment for multiple Kafka Connect clusters (if required).

If you plan to run more than one Kafka Connect cluster in the same environment, each instance must use unique internal topic names and a unique group ID.

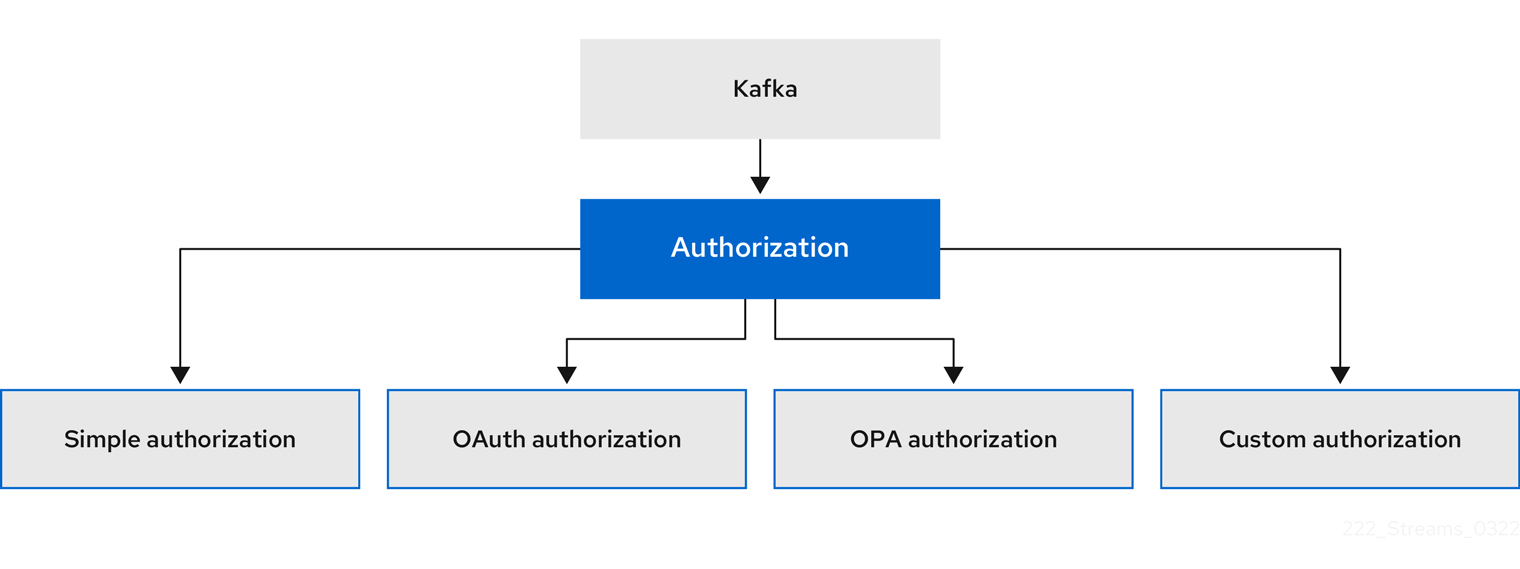

Update the