apiVersion: kafka.strimzi.io/v1beta1

kind: CustomResourceDefinition

metadata: (1)

name: kafkatopics.kafka.strimzi.io

labels:

app: strimzi

spec: (2)

group: kafka.strimzi.io

versions:

v1beta1

scope: Namespaced

names:

# ...

singular: kafkatopic

plural: kafkatopics

shortNames:

- kt (3)

additionalPrinterColumns: (4)

# ...

subresources:

status: {} (5)

validation: (6)

openAPIV3Schema:

properties:

spec:

type: object

properties:

partitions:

type: integer

minimum: 1

replicas:

type: integer

minimum: 1

maximum: 32767

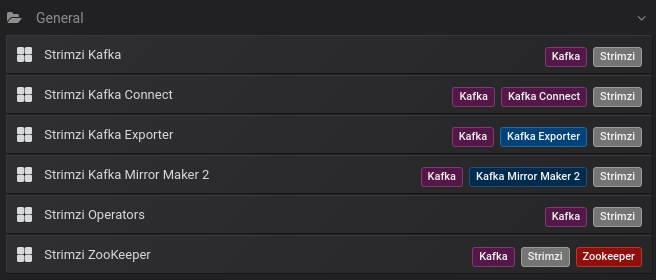

# ...- 1. Deployment overview

- 2. What is deployed with Strimzi

- 3. Preparing for your Strimzi deployment

- 4. Deploying Strimzi

- 5. Setting up client access to the Kafka cluster

- 6. Introducing Metrics to Kafka

- 7. Upgrading Strimzi

Deploying and Upgrading (0.19.0)

- 1. Deployment overview

- 2. What is deployed with Strimzi

- 3. Preparing for your Strimzi deployment

- 4. Deploying Strimzi

- 5. Setting up client access to the Kafka cluster

- 6. Introducing Metrics to Kafka

- 7. Upgrading Strimzi

1. Deployment overview

Strimzi simplifies the process of running Apache Kafka in a Kubernetes cluster.

This guide provides instructions on all the options available for deploying and upgrading Strimzi, describing what is deployed, and the order of deployment required to run Apache Kafka in a Kubernetes cluster.

As well as describing the deployment steps, the guide also provides pre- and post-deployment instructions to prepare for and verify a deployment. Additional deployment options described include the steps to introduce metrics. Upgrade instructions are provided for Strimzi and Kafka upgrades.

Strimzi is designed to work on all types of Kubernetes cluster regardless of distribution, from public and private clouds to local deployments intended for development.

1.1. How Strimzi supports Kafka

Strimzi provides container images and Operators for running Kafka on Kubernetes. Strimzi Operators are fundamental to the running of Strimzi. The Operators provided with Strimzi are purpose-built with specialist operational knowledge to effectively manage Kafka.

Operators simplify the process of:

-

Deploying and running Kafka clusters

-

Deploying and running Kafka components

-

Configuring access to Kafka

-

Securing access to Kafka

-

Upgrading Kafka

-

Managing brokers

-

Creating and managing topics

-

Creating and managing users

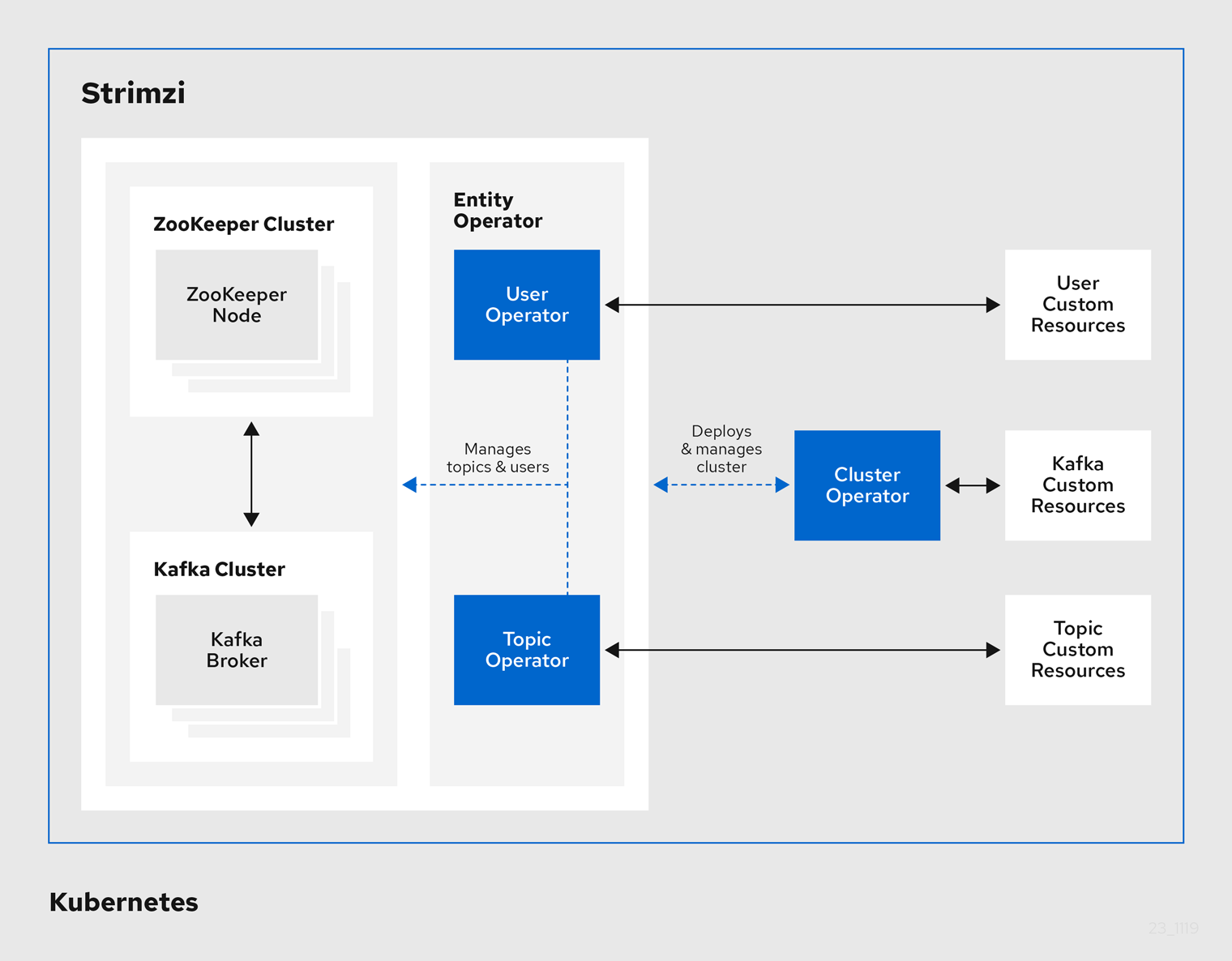

1.2. Strimzi Operators

Strimzi supports Kafka using Operators to deploy and manage the components and dependencies of Kafka to Kubernetes.

Operators are a method of packaging, deploying, and managing a Kubernetes application. Strimzi Operators extend Kubernetes functionality, automating common and complex tasks related to a Kafka deployment. By implementing knowledge of Kafka operations in code, Kafka administration tasks are simplified and require less manual intervention.

Operators

Strimzi provides Operators for managing a Kafka cluster running within a Kubernetes cluster.

- Cluster Operator

-

Deploys and manages Apache Kafka clusters, Kafka Connect, Kafka MirrorMaker, Kafka Bridge, Kafka Exporter, and the Entity Operator

- Entity Operator

-

Comprises the Topic Operator and User Operator

- Topic Operator

-

Manages Kafka topics

- User Operator

-

Manages Kafka users

The Cluster Operator can deploy the Topic Operator and User Operator as part of an Entity Operator configuration at the same time as a Kafka cluster.

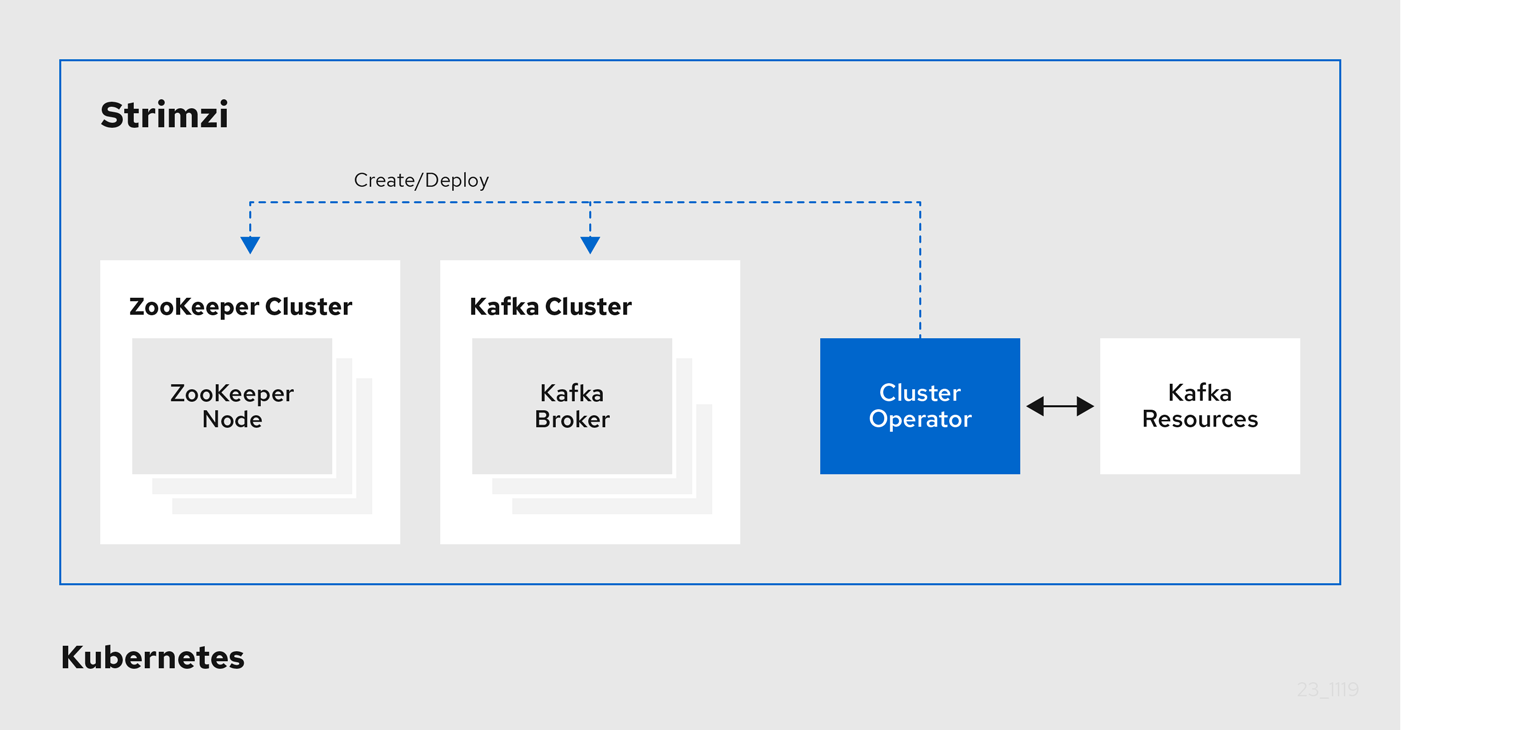

1.2.1. Cluster Operator

Strimzi uses the Cluster Operator to deploy and manage clusters for:

-

Kafka (including ZooKeeper, Entity Operator, Kafka Exporter, and Cruise Control)

-

Kafka Connect

-

Kafka MirrorMaker

-

Kafka Bridge

Custom resources are used to deploy the clusters.

For example, to deploy a Kafka cluster:

-

A

Kafkaresource with the cluster configuration is created within the Kubernetes cluster. -

The Cluster Operator deploys a corresponding Kafka cluster, based on what is declared in the

Kafkaresource.

The Cluster Operator can also deploy (through configuration of the Kafka resource):

-

A Topic Operator to provide operator-style topic management through

KafkaTopiccustom resources -

A User Operator to provide operator-style user management through

KafkaUsercustom resources

The Topic Operator and User Operator function within the Entity Operator on deployment.

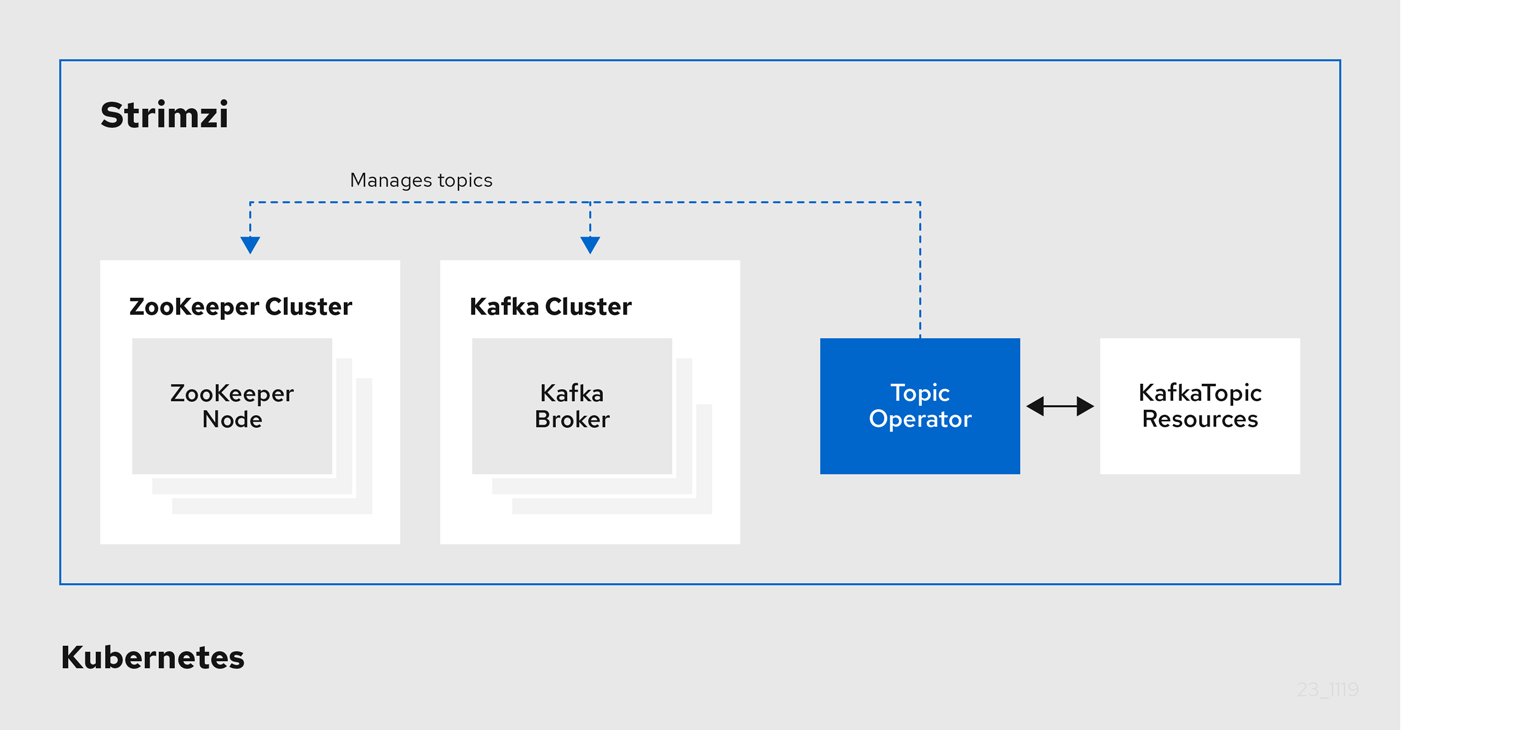

1.2.2. Topic Operator

The Topic Operator provides a way of managing topics in a Kafka cluster through Kubernetes resources.

The role of the Topic Operator is to keep a set of KafkaTopic Kubernetes resources describing Kafka topics in-sync with corresponding Kafka topics.

Specifically, if a KafkaTopic is:

-

Created, the Topic Operator creates the topic

-

Deleted, the Topic Operator deletes the topic

-

Changed, the Topic Operator updates the topic

Working in the other direction, if a topic is:

-

Created within the Kafka cluster, the Operator creates a

KafkaTopic -

Deleted from the Kafka cluster, the Operator deletes the

KafkaTopic -

Changed in the Kafka cluster, the Operator updates the

KafkaTopic

This allows you to declare a KafkaTopic as part of your application’s deployment and the Topic Operator will take care of creating the topic for you.

Your application just needs to deal with producing or consuming from the necessary topics.

If the topic is reconfigured or reassigned to different Kafka nodes, the KafkaTopic will always be up to date.

1.2.3. User Operator

The User Operator manages Kafka users for a Kafka cluster by watching for KafkaUser resources that describe Kafka users,

and ensuring that they are configured properly in the Kafka cluster.

For example, if a KafkaUser is:

-

Created, the User Operator creates the user it describes

-

Deleted, the User Operator deletes the user it describes

-

Changed, the User Operator updates the user it describes

Unlike the Topic Operator, the User Operator does not sync any changes from the Kafka cluster with the Kubernetes resources. Kafka topics can be created by applications directly in Kafka, but it is not expected that the users will be managed directly in the Kafka cluster in parallel with the User Operator.

The User Operator allows you to declare a KafkaUser resource as part of your application’s deployment.

You can specify the authentication and authorization mechanism for the user.

You can also configure user quotas that control usage of Kafka resources to ensure, for example, that a user does not monopolize access to a broker.

When the user is created, the user credentials are created in a Secret.

Your application needs to use the user and its credentials for authentication and to produce or consume messages.

In addition to managing credentials for authentication, the User Operator also manages authorization rules by including a description of the user’s access rights in the KafkaUser declaration.

1.3. Strimzi custom resources

A deployment of Kafka components to a Kubernetes cluster using Strimzi is highly configurable through the application of custom resources. Custom resources are created as instances of APIs added by Custom resource definitions (CRDs) to extend Kubernetes resources.

CRDs act as configuration instructions to describe the custom resources in a Kubernetes cluster, and are provided with Strimzi for each Kafka component used in a deployment, as well as users and topics. CRDs and custom resources are defined as YAML files. Example YAML files are provided with the Strimzi distribution.

CRDs also allow Strimzi resources to benefit from native Kubernetes features like CLI accessibility and configuration validation.

1.3.1. Strimzi custom resource example

CRDs require a one-time installation in a cluster to define the schemas used to instantiate and manage Strimzi-specific resources.

After a new custom resource type is added to your cluster by installing a CRD, you can create instances of the resource based on its specification.

Depending on the cluster setup, installation typically requires cluster admin privileges.

|

Note

|

Access to manage custom resources is limited to Strimzi administrators. For more information, see Designating Strimzi administrators in the Deploying Strimzi guide. |

A CRD defines a new kind of resource, such as kind:Kafka, within a Kubernetes cluster.

The Kubernetes API server allows custom resources to be created based on the kind and understands from the CRD how to validate and store the custom resource when it is added to the Kubernetes cluster.

|

Warning

|

When CRDs are deleted, custom resources of that type are also deleted. Additionally, the resources created by the custom resource, such as pods and statefulsets are also deleted. |

Each Strimzi-specific custom resource conforms to the schema defined by the CRD for the resource’s kind.

The custom resources for Strimzi components have common configuration properties, which are defined under spec.

To understand the relationship between a CRD and a custom resource, let’s look at a sample of the CRD for a Kafka topic.

-

The metadata for the topic CRD, its name and a label to identify the CRD.

-

The specification for this CRD, including the group (domain) name, the plural name and the supported schema version, which are used in the URL to access the API of the topic. The other names are used to identify instance resources in the CLI. For example,

kubectl get kafkatopic my-topicorkubectl get kafkatopics. -

The shortname can be used in CLI commands. For example,

kubectl get ktcan be used as an abbreviation instead ofkubectl get kafkatopic. -

The information presented when using a

getcommand on the custom resource. -

The current status of the CRD as described in the schema reference for the resource.

-

openAPIV3Schema validation provides validation for the creation of topic custom resources. For example, a topic requires at least one partition and one replica.

|

Note

|

You can identify the CRD YAML files supplied with the Strimzi installation files, because the file names contain an index number followed by ‘Crd’. |

Here is a corresponding example of a KafkaTopic custom resource.

apiVersion: kafka.strimzi.io/v1beta1

kind: KafkaTopic (1)

metadata:

name: my-topic

labels:

strimzi.io/cluster: my-cluster (2)

spec: (3)

partitions: 1

replicas: 1

config:

retention.ms: 7200000

segment.bytes: 1073741824

status:

conditions: (4)

lastTransitionTime: "2019-08-20T11:37:00.706Z"

status: "True"

type: Ready

observedGeneration: 1

/ ...-

The

kindandapiVersionidentify the CRD of which the custom resource is an instance. -

A label, applicable only to

KafkaTopicandKafkaUserresources, that defines the name of the Kafka cluster (which is same as the name of theKafkaresource) to which a topic or user belongs. -

The spec shows the number of partitions and replicas for the topic as well as the configuration parameters for the topic itself. In this example, the retention period for a message to remain in the topic and the segment file size for the log are specified.

-

Status conditions for the

KafkaTopicresource. Thetypecondition changed toReadyat thelastTransitionTime.

Custom resources can be applied to a cluster through the platform CLI. When the custom resource is created, it uses the same validation as the built-in resources of the Kubernetes API.

After a KafkaTopic custom resource is created, the Topic Operator is notified and corresponding Kafka topics are created in Strimzi.

2. What is deployed with Strimzi

Apache Kafka components are provided for deployment to Kubernetes with the Strimzi distribution. The Kafka components are generally run as clusters for availability.

A typical deployment incorporating Kafka components might include:

-

Kafka cluster of broker nodes

-

ZooKeeper cluster of replicated ZooKeeper instances

-

Kafka Connect cluster for external data connections

-

Kafka MirrorMaker cluster to mirror the Kafka cluster in a secondary cluster

-

Kafka Exporter to extract additional Kafka metrics data for monitoring

-

Kafka Bridge to make HTTP-based requests to the Kafka cluster

Not all of these components are mandatory, though you need Kafka and ZooKeeper as a minimum. Some components can be deployed without Kafka, such as MirrorMaker or Kafka Connect.

2.1. Order of deployment

The required order of deployment to a Kubernetes cluster is as follows:

-

Deploy the Cluster operator to manage your Kafka cluster

-

Deploy the Kafka cluster with the ZooKeeper cluster, and include the Topic Operator and User Operator in the deployment

-

Optionally deploy:

-

The Topic Operator and User Operator standalone if you did not deploy them with the Kafka cluster

-

Kafka Connect

-

Kafka MirrorMaker

-

Kafka Bridge

-

Components for the monitoring of metrics

-

2.2. Additional deployment configuration options

The deployment procedures in this guide describe a deployment using the example installation YAML files provided with Strimzi. The procedures highlight any important configuration considerations, but they do not describe all the configuration options available.

You can use custom resources to refine your deployment.

You may wish to review the configuration options available for Kafka components before you deploy Strimzi. For more information on the configuration through custom resources, see Deployment configuration in the Using Strimzi guide.

2.2.1. Securing Kafka

On deployment, the Cluster Operator automatically sets up TLS certificates for data encryption and authentication within your cluster.

Strimzi provides additional configuration options for encryption, authentication and authorization, which are described in the Using Strimzi guide:

-

Secure data exchange between the Kafka cluster and clients by configuration of Kafka resources.

-

Configure your deployment to use an authorization server to provide OAuth 2.0 authentication and OAuth 2.0 authorization.

2.2.2. Monitoring your deployment

Strimzi supports additional deployment options to monitor your deployment.

-

Extract metrics and monitor Kafka components by deploying Prometheus and Grafana with your Kafka cluster.

-

Extract additional metrics, particularly related to monitoring consumer lag, by deploying Kafka Exporter with your Kafka cluster.

-

Track messages end-to-end by setting up distributed tracing, as described in the Using Strimzi guide.

3. Preparing for your Strimzi deployment

This section shows how you prepare for a Strimzi deployment, describing:

-

How to download the Strimzi release artifacts to use in your deployment

-

How to push the Strimzi container images into you own registry (if required)

-

How to set up admin roles for configuration of custom resources used in deployment

-

Alternative deployment options to Kubernetes using Minikube or Minishift

|

Note

|

To run the commands in this guide, your cluster user must have the rights to manage role-based access control (RBAC) and CRDs. |

3.1. Deployment prerequisites

To deploy Strimzi, make sure:

-

A Kubernetes 1.11 and later cluster is available

-

The

kubectlcommand-line tool is installed and configured to connect to the running cluster.

|

Note

|

Strimzi supports some features that are specific to OpenShift, where such integration benefits OpenShift users and there is no equivalent implementation using standard Kubernetes. |

Alternatives if a Kubernetes cluster is not available

If you do not have access to a Kubernetes cluster, as an alternative you can try installing Strimzi with:

3.2. Downloading Strimzi release artifacts

To install Strimzi, download the release artifacts from GitHub.

Strimzi release artifacts include sample YAML files to help you deploy the components of Strimzi to Kubernetes, perform common operations, and configure your Kafka cluster.

You deploy Strimzi to a Kubernetes cluster using the kubectl command-line tool.

|

Note

|

Additionally, Strimzi container images are available through the Docker Hub. However, we recommend that you use the YAML files provided to deploy Strimzi. |

3.3. Pushing container images to your own registry

Container images for Strimzi are available in the Docker Hub. The installation YAML files provided by Strimzi will pull the images directly from the Docker Hub.

If you do not have access to the Docker Hub or want to use your own container repository:

-

Pull all container images listed here

-

Push them into your own registry

-

Update the image names in the YAML files used in deployment

|

Note

|

Each Kafka version supported for the release has a separate image. |

| Container image | Namespace/Repository | Description |

|---|---|---|

Kafka |

|

Strimzi image for running Kafka, including:

|

Operator |

|

Strimzi image for running the operators:

|

Kafka Bridge |

|

Strimzi image for running the Strimzi kafka Bridge |

JmxTrans |

|

Strimzi image for running the Strimzi JmxTrans |

3.4. Designating Strimzi administrators

Strimzi provides custom resources for configuration of your deployment. By default, permission to view, create, edit, and delete these resources is limited to Kubernetes cluster administrators. Strimzi provides two cluster roles that you can use to assign these rights to other users:

-

strimzi-viewallows users to view and list Strimzi resources. -

strimzi-adminallows users to also create, edit or delete Strimzi resources.

When you install these roles, they will automatically aggregate (add) these rights to the default Kubernetes cluster roles.

strimzi-view aggregates to the view role, and strimzi-admin aggregates to the edit and admin roles.

Because of the aggregation, you might not need to assign these roles to users who already have similar rights.

The following procedure shows how to assign a strimzi-admin role that allows non-cluster administrators to manage Strimzi resources.

A system administrator can designate Strimzi administrators after the Cluster Operator is deployed.

-

The Strimzi Custom Resource Definitions (CRDs) and role-based access control (RBAC) resources to manage the CRDs have been deployed with the Cluster Operator.

-

Create the

strimzi-viewandstrimzi-admincluster roles in Kubernetes.kubectl apply -f install/strimzi-admin -

If needed, assign the roles that provide access rights to users that require them.

kubectl create clusterrolebinding strimzi-admin --clusterrole=strimzi-admin --user=user1 --user=user2

3.5. Alternative cluster deployment options

This section suggests alternatives to using a Kubernetes cluster.

If a Kubernetes cluster is unavailable, you can use:

-

Minikube to create a local cluster

-

Minishift to create a local OpenShift cluster and use OpenShift-specific features

3.5.1. Installing a local Kubernetes cluster

The easiest way to get started with Kubernetes is using Minikube. This section provides basic guidance on how to use it. For more information on the tools, refer to the documentation available online.

In order to interact with a Kubernetes cluster the kubectl

utility needs to be installed.

You can download and install Minikube from the Kubernetes website. Depending on the number of brokers you want to deploy inside the cluster, and if you need Kafka Connect running as well, try running Minikube with at least with 4 GB of RAM instead of the default 2 GB.

Once installed, start Minikube using:

minikube start --memory 40963.5.2. Installing a local OpenShift cluster

The easiest way to get started with OpenShift is using Minishift or oc cluster up.

This section provides basic guidance on how to use them.

For more information on the tools, refer to the documentation available online.

oc cluster up

The oc utility is one of the main tools for interacting with OpenShift.

It provides a simple way of starting a local cluster using the command:

oc cluster upThis command requires Docker to be installed. You can find more inforation on here.

Minishift

Minishift is an OpenShift installation within a VM. It can be downloaded and installed from the Minishift website. Depending on the number of brokers you want to deploy inside the cluster, and if you need Kafka Connect running as well, try running Minishift with at least 4 GB of RAM instead of the default 2 GB.

Once installed, start Minishift using:

minishift start --memory 4GBIf you want to use kubectl with either an oc cluster up or minishift cluster,

you will need to configure it, as unlike with Minikube this won’t be done automatically.

oc and kubectl commands

The oc command functions as an alternative to kubectl.

In almost all cases the example kubectl commands given in this guide can be done using oc simply by replacing the command name (options and arguments remain the same).

In other words, instead of using:

kubectl apply -f your-filewhen using OpenShift you can use

oc apply -f your-file|

Note

|

As an exception to this general rule, oc uses oc adm subcommands for cluster management,

while kubectl does not make such a distinction.

For example, the oc equivalent of kubectl taint is oc adm taint.

|

4. Deploying Strimzi

Having prepared your environment for a deployment of Strimzi, this section shows:

-

Optional procedures to deploy other Kafka components according to your requirements:

The procedures assume a Kubernetes cluster is available and running.

This section describes the procedures to deploy Strimzi on Kubernetes 1.11 and later.

|

Note

|

To run the commands in this guide, your cluster user must have the rights to manage role-based access control (RBAC) and CRDs. |

4.1. Create the Kafka cluster

In order to create your Kafka cluster, you deploy the Cluster Operator to manage the Kafka cluster, then deploy the Kafka cluster.

When deploying the Kafka cluster using the Kafka resource, you can deploy the Topic Operator and User Operator at the same time.

Alternatively, if you are using a non-Strimzi Kafka cluster, you can deploy the Topic Operator and User Operator as standalone components.

Deploying a Kafka cluster with the Topic Operator and User Operator

Perform these deployment steps if you want to use the Topic Operator and User Operator with a Kafka cluster managed by Strimzi.

-

Use the Cluster Operator to deploy the:

Deploying a standalone Topic Operator and User Operator

Perform these deployment steps if you want to use the Topic Operator and User Operator with a Kafka cluster that is not managed by Strimzi.

4.1.1. Deploying the Cluster Operator

The Cluster Operator is responsible for deploying and managing Apache Kafka clusters within a Kubernetes cluster.

The procedures in this section show:

-

How to deploy the Cluster Operator to watch:

-

Alternative deployment options:

Watch options for a Cluster Operator deployment

When the Cluster Operator is running, it starts to watch for updates of Kafka resources.

You can choose to deploy the Cluster Operator to watch Kafka resources from:

-

A single namespace (the same namespace containing the Cluster Operator)

-

Multiple namespaces

-

All namespaces

|

Note

|

Strimzi provides example YAML files to make the deployment process easier. |

The Cluster Operator watches for changes to the following resources:

-

Kafkafor the Kafka cluster. -

KafkaConnectfor the Kafka Connect cluster. -

KafkaConnectS2Ifor the Kafka Connect cluster with Source2Image support. -

KafkaConnectorfor creating and managing connectors in a Kafka Connect cluster. -

KafkaMirrorMakerfor the Kafka MirrorMaker instance. -

KafkaBridgefor the Kafka Bridge instance

When one of these resources is created in the Kubernetes cluster, the operator gets the cluster description from the resource and starts creating a new cluster for the resource by creating the necessary Kubernetes resources, such as StatefulSets, Services and ConfigMaps.

Each time a Kafka resource is updated, the operator performs corresponding updates on the Kubernetes resources that make up the cluster for the resource.

Resources are either patched or deleted, and then recreated in order to make the cluster for the resource reflect the desired state of the cluster. This operation might cause a rolling update that might lead to service disruption.

When a resource is deleted, the operator undeploys the cluster and deletes all related Kubernetes resources.

Deploying the Cluster Operator to watch a single namespace

This procedure shows how to deploy the Cluster Operator to watch Strimzi resources in a single namespace in your Kubernetes cluster.

-

This procedure requires use of a Kubernetes user account which is able to create

CustomResourceDefinitions,ClusterRolesandClusterRoleBindings. Use of Role Base Access Control (RBAC) in the Kubernetes cluster usually means that permission to create, edit, and delete these resources is limited to Kubernetes cluster administrators, such assystem:admin.

-

Edit the Strimzi installation files to use the namespace the Cluster Operator is going to be installed into.

For example, in this procedure the Cluster Operator is installed into the namespace

my-cluster-operator-namespace.On Linux, use:

sed -i 's/namespace: .*/namespace: my-cluster-operator-namespace/' install/cluster-operator/*RoleBinding*.yamlOn MacOS, use:

sed -i '' 's/namespace: .*/namespace: my-cluster-operator-namespace/' install/cluster-operator/*RoleBinding*.yaml -

Deploy the Cluster Operator:

kubectl apply -f install/cluster-operator -n my-cluster-operator-namespace -

Verify that the Cluster Operator was successfully deployed:

kubectl get deployments

Deploying the Cluster Operator to watch multiple namespaces

This procedure shows how to deploy the Cluster Operator to watch Strimzi resources across multiple namespaces in your Kubernetes cluster.

-

This procedure requires use of a Kubernetes user account which is able to create

CustomResourceDefinitions,ClusterRolesandClusterRoleBindings. Use of Role Base Access Control (RBAC) in the Kubernetes cluster usually means that permission to create, edit, and delete these resources is limited to Kubernetes cluster administrators, such assystem:admin.

-

Edit the Strimzi installation files to use the namespace the Cluster Operator is going to be installed into.

For example, in this procedure the Cluster Operator is installed into the namespace

my-cluster-operator-namespace.On Linux, use:

sed -i 's/namespace: .*/namespace: my-cluster-operator-namespace/' install/cluster-operator/*RoleBinding*.yamlOn MacOS, use:

sed -i '' 's/namespace: .*/namespace: my-cluster-operator-namespace/' install/cluster-operator/*RoleBinding*.yaml -

Edit the

install/cluster-operator/050-Deployment-strimzi-cluster-operator.yamlfile to add a list of all the namespaces the Cluster Operator will watch to theSTRIMZI_NAMESPACEenvironment variable.For example, in this procedure the Cluster Operator will watch the namespaces

watched-namespace-1,watched-namespace-2,watched-namespace-3.apiVersion: apps/v1 kind: Deployment spec: # ... template: spec: serviceAccountName: strimzi-cluster-operator containers: - name: strimzi-cluster-operator image: strimzi/operator:0.19.0 imagePullPolicy: IfNotPresent env: - name: STRIMZI_NAMESPACE value: watched-namespace-1,watched-namespace-2,watched-namespace-3 -

For each namespace listed, install the

RoleBindings.In this example, we replace

watched-namespacein these commands with the namespaces listed in the previous step, repeating them forwatched-namespace-1,watched-namespace-2,watched-namespace-3:kubectl apply -f install/cluster-operator/020-RoleBinding-strimzi-cluster-operator.yaml -n watched-namespace kubectl apply -f install/cluster-operator/031-RoleBinding-strimzi-cluster-operator-entity-operator-delegation.yaml -n watched-namespace kubectl apply -f install/cluster-operator/032-RoleBinding-strimzi-cluster-operator-topic-operator-delegation.yaml -n watched-namespace -

Deploy the Cluster Operator:

kubectl apply -f install/cluster-operator -n my-cluster-operator-namespace -

Verify that the Cluster Operator was successfully deployed:

kubectl get deployments

Deploying the Cluster Operator to watch all namespaces

This procedure shows how to deploy the Cluster Operator to watch Strimzi resources across all namespaces in your Kubernetes cluster.

When running in this mode, the Cluster Operator automatically manages clusters in any new namespaces that are created.

-

This procedure requires use of a Kubernetes user account which is able to create

CustomResourceDefinitions,ClusterRolesandClusterRoleBindings. Use of Role Base Access Control (RBAC) in the Kubernetes cluster usually means that permission to create, edit, and delete these resources is limited to Kubernetes cluster administrators, such assystem:admin.

-

Edit the Strimzi installation files to use the namespace the Cluster Operator is going to be installed into.

For example, in this procedure the Cluster Operator is installed into the namespace

my-cluster-operator-namespace.On Linux, use:

sed -i 's/namespace: .*/namespace: my-cluster-operator-namespace/' install/cluster-operator/*RoleBinding*.yamlOn MacOS, use:

sed -i '' 's/namespace: .*/namespace: my-cluster-operator-namespace/' install/cluster-operator/*RoleBinding*.yaml -

Edit the

install/cluster-operator/050-Deployment-strimzi-cluster-operator.yamlfile to set the value of theSTRIMZI_NAMESPACEenvironment variable to*.apiVersion: apps/v1 kind: Deployment spec: # ... template: spec: # ... serviceAccountName: strimzi-cluster-operator containers: - name: strimzi-cluster-operator image: strimzi/operator:0.19.0 imagePullPolicy: IfNotPresent env: - name: STRIMZI_NAMESPACE value: "*" # ... -

Create

ClusterRoleBindingsthat grant cluster-wide access for all namespaces to the Cluster Operator.kubectl create clusterrolebinding strimzi-cluster-operator-namespaced --clusterrole=strimzi-cluster-operator-namespaced --serviceaccount my-cluster-operator-namespace:strimzi-cluster-operator kubectl create clusterrolebinding strimzi-cluster-operator-entity-operator-delegation --clusterrole=strimzi-entity-operator --serviceaccount my-cluster-operator-namespace:strimzi-cluster-operator kubectl create clusterrolebinding strimzi-cluster-operator-topic-operator-delegation --clusterrole=strimzi-topic-operator --serviceaccount my-cluster-operator-namespace:strimzi-cluster-operatorReplace

my-cluster-operator-namespacewith the namespace you want to install the Cluster Operator into. -

Deploy the Cluster Operator to your Kubernetes cluster.

kubectl apply -f install/cluster-operator -n my-cluster-operator-namespace -

Verify that the Cluster Operator was successfully deployed:

kubectl get deployments

Deploying the Cluster Operator using a Helm Chart

As an alternative to using the YAML deployment files, this procedure shows how to deploy the Cluster Operator using a Helm chart provided with Strimzi.

-

The Helm client must be installed on a local machine.

-

Helm must be installed to the Kubernetes cluster.

For more information about Helm, see the Helm website.

-

Add the Strimzi Helm Chart repository:

helm repo add strimzi https://strimzi.io/charts/ -

Deploy the Cluster Operator using the Helm command line tool:

helm install strimzi/strimzi-kafka-operator -

Verify that the Cluster Operator has been deployed successfully using the Helm command line tool:

helm ls

Deploying the Cluster Operator from OperatorHub.io

OperatorHub.io is a catalog of Kubernetes Operators sourced from multiple providers. It offers you an alternative way to install stable versions of Strimzi using the Strimzi Kafka Operator.

The Operator Lifecycle Manager is used for the installation and management of all Operators published on OperatorHub.io.

To install Strimzi from OperatorHub.io, locate the Strimzi Kafka Operator and follow the instructions provided.

4.1.2. Deploying Kafka

Apache Kafka is an open-source distributed publish-subscribe messaging system for fault-tolerant real-time data feeds.

The procedures in this section show:

-

How to use the Cluster Operator to deploy:

-

The Topic Operator and User Operator by configuring the

Kafkacustom resource:

-

Alternative standalone deployment procedures for the Topic Operator and User Operator:

When installing Kafka, Strimzi also installs a ZooKeeper cluster and adds the necessary configuration to connect Kafka with ZooKeeper.

Deploying the Kafka cluster

This procedure shows how to deploy a Kafka cluster to your Kubernetes using the Cluster Operator.

The deployment uses a YAML file to provide the specification to create a Kafka resource.

Strimzi provides example YAMLs files for deployment in examples/kafka/:

kafka-persistent.yaml-

Deploys a persistent cluster with three ZooKeeper and three Kafka nodes.

kafka-jbod.yaml-

Deploys a persistent cluster with three ZooKeeper and three Kafka nodes (each using multiple persistent volumes).

kafka-persistent-single.yaml-

Deploys a persistent cluster with a single ZooKeeper node and a single Kafka node.

kafka-ephemeral.yaml-

Deploys an ephemeral cluster with three ZooKeeper and three Kafka nodes.

kafka-ephemeral-single.yaml-

Deploys an ephemeral cluster with three ZooKeeper nodes and a single Kafka node.

In this procedure, we use the examples for an ephemeral and persistent Kafka cluster deployment:

- Ephemeral cluster

-

In general, an ephemeral (or temporary) Kafka cluster is suitable for development and testing purposes, not for production. This deployment uses

emptyDirvolumes for storing broker information (for ZooKeeper) and topics or partitions (for Kafka). Using anemptyDirvolume means that its content is strictly related to the pod life cycle and is deleted when the pod goes down. - Persistent cluster

-

A persistent Kafka cluster uses

PersistentVolumesto store ZooKeeper and Kafka data. ThePersistentVolumeis acquired using aPersistentVolumeClaimto make it independent of the actual type of thePersistentVolume. For example, it can use Amazon EBS volumes in Amazon AWS deployments without any changes in the YAML files. ThePersistentVolumeClaimcan use aStorageClassto trigger automatic volume provisioning.

The example clusters are named my-cluster by default.

The cluster name is defined by the name of the resource and cannot be changed after the cluster has been deployed.

To change the cluster name before you deploy the cluster, edit the Kafka.metadata.name property of the Kafka resource in the relevant YAML file.

apiVersion: kafka.strimzi.io/v1beta1

kind: Kafka

metadata:

name: my-cluster

# ...For more information about configuring the Kafka resource, see Kafka cluster configuration in the Using Strimzi guide.

-

Create and deploy an ephemeral or persistent cluster.

For development or testing, you might prefer to use an ephemeral cluster. You can use a persistent cluster in any situation.

-

To create and deploy an ephemeral cluster:

kubectl apply -f examples/kafka/kafka-ephemeral.yaml -

To create and deploy a persistent cluster:

kubectl apply -f examples/kafka/kafka-persistent.yaml

-

-

Verify that the Kafka cluster was successfully deployed:

kubectl get deployments

Deploying the Topic Operator using the Cluster Operator

This procedure describes how to deploy the Topic Operator using the Cluster Operator.

You configure the entityOperator property of the Kafka resource to include the topicOperator.

If you want to use the Topic Operator with a Kafka cluster that is not managed by Strimzi, you must deploy the Topic Operator as a standalone component.

For more information about configuring the entityOperator and topicOperator properties,

see Entity Operator in the Using Strimzi guide.

-

Edit the

entityOperatorproperties of theKafkaresource to includetopicOperator:apiVersion: kafka.strimzi.io/v1beta1 kind: Kafka metadata: name: my-cluster spec: #... entityOperator: topicOperator: {} userOperator: {} -

Configure the Topic Operator

specusing the properties described inEntityTopicOperatorSpecschema reference.Use an empty object (

{}) if you want all properties to use their default values. -

Create or update the resource:

Use

kubectl apply:kubectl apply -f <your-file>

Deploying the User Operator using the Cluster Operator

This procedure describes how to deploy the User Operator using the Cluster Operator.

You configure the entityOperator property of the Kafka resource to include the userOperator.

If you want to use the User Operator with a Kafka cluster that is not managed by Strimzi, you must deploy the User Operator as a standalone component.

For more information about configuring the entityOperator and userOperator properties, see Entity Operator in the Using Strimzi guide.

-

Edit the

entityOperatorproperties of theKafkaresource to includeuserOperator:apiVersion: kafka.strimzi.io/v1beta1 kind: Kafka metadata: name: my-cluster spec: #... entityOperator: topicOperator: {} userOperator: {} -

Configure the User Operator

specusing the properties described inEntityUserOperatorSpecschema reference in the Using Strimzi guide.Use an empty object (

{}) if you want all properties to use their default values. -

Create or update the resource:

kubectl apply -f <your-file>

4.1.3. Alternative standalone deployment options for Strimzi Operators

When deploying a Kafka cluster using the Cluster Operator, you can also deploy the Topic Operator and User Operator. Alternatively, you can perform a standalone deployment.

A standalone deployment means the Topic Operator and User Operator can operate with a Kafka cluster that is not managed by Strimzi.

Deploying the standalone Topic Operator

This procedure shows how to deploy the Topic Operator as a standalone component.

A standalone deployment requires configuration of environment variables, and is more complicated than deploying the Topic Operator using the Cluster Operator. However, a standalone deployment is more flexible as the Topic Operator can operate with any Kafka cluster, not necessarily one deployed by the Cluster Operator.

-

You need an existing Kafka cluster for the Topic Operator to connect to.

-

Edit the

Deployment.spec.template.spec.containers[0].envproperties in theinstall/topic-operator/05-Deployment-strimzi-topic-operator.yamlfile by setting:-

STRIMZI_KAFKA_BOOTSTRAP_SERVERSto list the bootstrap brokers in your Kafka cluster, given as a comma-separated list ofhostname:portpairs. -

STRIMZI_ZOOKEEPER_CONNECTto list the ZooKeeper nodes, given as a comma-separated list ofhostname:portpairs. This should be the same ZooKeeper cluster that your Kafka cluster is using. -

STRIMZI_NAMESPACEto the Kubernetes namespace in which you want the operator to watch forKafkaTopicresources. -

STRIMZI_RESOURCE_LABELSto the label selector used to identify theKafkaTopicresources managed by the operator. -

STRIMZI_FULL_RECONCILIATION_INTERVAL_MSto specify the interval between periodic reconciliations, in milliseconds. -

STRIMZI_TOPIC_METADATA_MAX_ATTEMPTSto specify the number of attempts at getting topic metadata from Kafka. The time between each attempt is defined as an exponential back-off. Consider increasing this value when topic creation could take more time due to the number of partitions or replicas. Default6. -

STRIMZI_ZOOKEEPER_SESSION_TIMEOUT_MSto the ZooKeeper session timeout, in milliseconds. For example,10000. Default20000(20 seconds). -

STRIMZI_TOPICS_PATHto the Zookeeper node path where the Topic Operator stores its metadata. Default/strimzi/topics. -

STRIMZI_TLS_ENABLEDto enable TLS support for encrypting the communication with Kafka brokers. Defaulttrue. -

STRIMZI_TRUSTSTORE_LOCATIONto the path to the truststore containing certificates for enabling TLS based communication. Mandatory only if TLS is enabled throughSTRIMZI_TLS_ENABLED. -

STRIMZI_TRUSTSTORE_PASSWORDto the password for accessing the truststore defined bySTRIMZI_TRUSTSTORE_LOCATION. Mandatory only if TLS is enabled throughSTRIMZI_TLS_ENABLED. -

STRIMZI_KEYSTORE_LOCATIONto the path to the keystore containing private keys for enabling TLS based communication. Mandatory only if TLS is enabled throughSTRIMZI_TLS_ENABLED. -

STRIMZI_KEYSTORE_PASSWORDto the password for accessing the keystore defined bySTRIMZI_KEYSTORE_LOCATION. Mandatory only if TLS is enabled throughSTRIMZI_TLS_ENABLED. -

STRIMZI_LOG_LEVELto the level for printing logging messages. The value can be set to:ERROR,WARNING,INFO,DEBUG, andTRACE. DefaultINFO. -

STRIMZI_JAVA_OPTS(optional) to the Java options used for the JVM running the Topic Operator. An example is-Xmx=512M -Xms=256M. -

STRIMZI_JAVA_SYSTEM_PROPERTIES(optional) to list the-Doptions which are set to the Topic Operator. An example is-Djavax.net.debug=verbose -DpropertyName=value.

-

-

Deploy the Topic Operator:

kubectl apply -f install/topic-operator -

Verify that the Topic Operator has been deployed successfully:

kubectl describe deployment strimzi-topic-operatorThe Topic Operator is deployed when the

Replicas:entry shows1 available.NoteYou may experience a delay with the deployment if you have a slow connection to the Kubernetes cluster and the images have not been downloaded before.

Deploying the standalone User Operator

This procedure shows how to deploy the User Operator as a standalone component.

A standalone deployment requires configuration of environment variables, and is more complicated than deploying the User Operator using the Cluster Operator. However, a standalone deployment is more flexible as the User Operator can operate with any Kafka cluster, not necessarily one deployed by the Cluster Operator.

-

You need an existing Kafka cluster for the User Operator to connect to.

-

Edit the following

Deployment.spec.template.spec.containers[0].envproperties in theinstall/user-operator/05-Deployment-strimzi-user-operator.yamlfile by setting:-

STRIMZI_KAFKA_BOOTSTRAP_SERVERSto list the Kafka brokers, given as a comma-separated list ofhostname:portpairs. -

STRIMZI_ZOOKEEPER_CONNECTto list the ZooKeeper nodes, given as a comma-separated list ofhostname:portpairs. This must be the same ZooKeeper cluster that your Kafka cluster is using. Connecting to ZooKeeper nodes with TLS encryption is not supported. -

STRIMZI_NAMESPACEto the Kubernetes namespace in which you want the operator to watch forKafkaUserresources. -

STRIMZI_LABELSto the label selector used to identify theKafkaUserresources managed by the operator. -

STRIMZI_FULL_RECONCILIATION_INTERVAL_MSto specify the interval between periodic reconciliations, in milliseconds. -

STRIMZI_ZOOKEEPER_SESSION_TIMEOUT_MSto the ZooKeeper session timeout, in milliseconds. For example,10000. Default20000(20 seconds). -

STRIMZI_CA_CERT_NAMEto point to a KubernetesSecretthat contains the public key of the Certificate Authority for signing new user certificates for TLS client authentication. TheSecretmust contain the public key of the Certificate Authority under the keyca.crt. -

STRIMZI_CA_KEY_NAMEto point to a KubernetesSecretthat contains the private key of the Certificate Authority for signing new user certificates for TLS client authentication. TheSecretmust contain the private key of the Certificate Authority under the keyca.key. -

STRIMZI_CLUSTER_CA_CERT_SECRET_NAMEto point to a KubernetesSecretcontaining the public key of the Certificate Authority used for signing Kafka brokers certificates for enabling TLS-based communication. TheSecretmust contain the public key of the Certificate Authority under the keyca.crt. This environment variable is optional and should be set only if the communication with the Kafka cluster is TLS based. -

STRIMZI_EO_KEY_SECRET_NAMEto point to a KubernetesSecretcontaining the private key and related certificate for TLS client authentication against the Kafka cluster. TheSecretmust contain the keystore with the private key and certificate under the keyentity-operator.p12, and the related password under the keyentity-operator.password. This environment variable is optional and should be set only if TLS client authentication is needed when the communication with the Kafka cluster is TLS based. -

STRIMZI_CA_VALIDITYthe validity period for the Certificate Authority. Default is365days. -

STRIMZI_CA_RENEWALthe renewal period for the Certificate Authority. -

STRIMZI_LOG_LEVELto the level for printing logging messages. The value can be set to:ERROR,WARNING,INFO,DEBUG, andTRACE. DefaultINFO. -

STRIMZI_GC_LOG_ENABLEDto enable garbage collection (GC) logging. Defaulttrue. Default is30days to initiate certificate renewal before the old certificates expire. -

STRIMZI_JAVA_OPTS(optional) to the Java options used for the JVM running User Operator. An example is-Xmx=512M -Xms=256M. -

STRIMZI_JAVA_SYSTEM_PROPERTIES(optional) to list the-Doptions which are set to the User Operator. An example is-Djavax.net.debug=verbose -DpropertyName=value.

-

-

Deploy the User Operator:

kubectl apply -f install/user-operator -

Verify that the User Operator has been deployed successfully:

kubectl describe deployment strimzi-user-operatorThe User Operator is deployed when the

Replicas:entry shows1 available.NoteYou may experience a delay with the deployment if you have a slow connection to the Kubernetes cluster and the images have not been downloaded before.

4.2. Deploy Kafka Connect

Kafka Connect is a tool for streaming data between Apache Kafka and external systems.

In Strimzi, Kafka Connect is deployed in distributed mode. Kafka Connect can also work in standalone mode, but this is not supported by Strimzi.

Using the concept of connectors, Kafka Connect provides a framework for moving large amounts of data into and out of your Kafka cluster while maintaining scalability and reliability.

Kafka Connect is typically used to integrate Kafka with external databases and storage and messaging systems.

The procedures in this section show how to:

|

Note

|

The term connector is used interchangeably to mean a connector instance running within a Kafka Connect cluster, or a connector class. In this guide, the term connector is used when the meaning is clear from the context. |

4.2.1. Deploying Kafka Connect to your Kubernetes cluster

This procedure shows how to deploy a Kafka Connect cluster to your Kubernetes cluster using the Cluster Operator.

A Kafka Connect cluster is implemented as a Deployment with a configurable number of nodes (also called workers) that distribute the workload of connectors as tasks so that the message flow is highly scalable and reliable.

The deployment uses a YAML file to provide the specification to create a KafkaConnect resource.

In this procedure, we use the example file provided with Strimzi:

-

examples/connect/kafka-connect.yaml

See the Using Strimzi guide for more information about configuring the KafkaConnect resource:

-

Deploy Kafka Connect to your Kubernetes cluster.

kubectl apply -f examples/connect/kafka-connect.yaml -

Verify that Kafka Connect was successfully deployed:

kubectl get deployments

4.2.2. Extending Kafka Connect with connector plug-ins

The Strimzi container images for Kafka Connect include two built-in file connectors for moving file-based data into and out of your Kafka cluster.

| File Connector | Description |

|---|---|

|

Transfers data to your Kafka cluster from a file (the source). |

|

Transfers data from your Kafka cluster to a file (the sink). |

The Cluster Operator can also use images that you have created to deploy a Kafka Connect cluster to your Kubernetes cluster.

The procedures in this section show how to add your own connector classes to connector images by:

|

Important

|

You create the configuration for connectors directly using the Kafka Connect REST API or KafkaConnector custom resources. |

Creating a Docker image from the Kafka Connect base image

This procedure shows how to create a custom image and add it to the /opt/kafka/plugins directory.

You can use the Kafka container image on Docker Hub as a base image for creating your own custom image with additional connector plug-ins.

At startup, the Strimzi version of Kafka Connect loads any third-party connector plug-ins contained in the /opt/kafka/plugins directory.

-

Create a new

Dockerfileusingstrimzi/kafka:0.19.0-kafka-2.5.0as the base image:FROM strimzi/kafka:0.19.0-kafka-2.5.0 USER root:root COPY ./my-plugins/ /opt/kafka/plugins/ USER 1001Example plug-in file$ tree ./my-plugins/ ./my-plugins/ ├── debezium-connector-mongodb │ ├── bson-3.4.2.jar │ ├── CHANGELOG.md │ ├── CONTRIBUTE.md │ ├── COPYRIGHT.txt │ ├── debezium-connector-mongodb-0.7.1.jar │ ├── debezium-core-0.7.1.jar │ ├── LICENSE.txt │ ├── mongodb-driver-3.4.2.jar │ ├── mongodb-driver-core-3.4.2.jar │ └── README.md ├── debezium-connector-mysql │ ├── CHANGELOG.md │ ├── CONTRIBUTE.md │ ├── COPYRIGHT.txt │ ├── debezium-connector-mysql-0.7.1.jar │ ├── debezium-core-0.7.1.jar │ ├── LICENSE.txt │ ├── mysql-binlog-connector-java-0.13.0.jar │ ├── mysql-connector-java-5.1.40.jar │ ├── README.md │ └── wkb-1.0.2.jar └── debezium-connector-postgres ├── CHANGELOG.md ├── CONTRIBUTE.md ├── COPYRIGHT.txt ├── debezium-connector-postgres-0.7.1.jar ├── debezium-core-0.7.1.jar ├── LICENSE.txt ├── postgresql-42.0.0.jar ├── protobuf-java-2.6.1.jar └── README.md -

Build the container image.

-

Push your custom image to your container registry.

-

Point to the new container image.

You can either:

-

Edit the

KafkaConnect.spec.imageproperty of theKafkaConnectcustom resource.If set, this property overrides the

STRIMZI_KAFKA_CONNECT_IMAGESvariable in the Cluster Operator.apiVersion: kafka.strimzi.io/v1beta1 kind: KafkaConnect metadata: name: my-connect-cluster spec: (1) #... image: my-new-container-image (2) config: (3) #...-

The docker image for the pods.

-

Configuration of the Kafka Connect workers (not connectors).

or

-

In the

install/cluster-operator/050-Deployment-strimzi-cluster-operator.yamlfile, edit theSTRIMZI_KAFKA_CONNECT_IMAGESvariable to point to the new container image, and then reinstall the Cluster Operator.

-

See the Using Strimzi guide for more information on:

Creating a container image using OpenShift builds and Source-to-Image

This procedure shows how to use OpenShift builds and the Source-to-Image (S2I) framework to create a new container image.

An OpenShift build takes a builder image with S2I support, together with source code and binaries provided by the user, and uses them to build a new container image. Once built, container images are stored in OpenShift’s local container image repository and are available for use in deployments.

A Kafka Connect builder image with S2I support is provided on the Docker Hub as part of the strimzi/kafka:0.19.0-kafka-2.5.0 image.

This S2I image takes your binaries (with plug-ins and connectors) and stores them in the /tmp/kafka-plugins/s2i directory.

It creates a new Kafka Connect image from this directory, which can then be used with the Kafka Connect deployment.

When started using the enhanced image, Kafka Connect loads any third-party plug-ins from the /tmp/kafka-plugins/s2i directory.

-

On the command line, use the

oc applycommand to create and deploy a Kafka Connect S2I cluster:oc apply -f examples/connect/kafka-connect-s2i.yaml -

Create a directory with Kafka Connect plug-ins:

$ tree ./my-plugins/ ./my-plugins/ ├── debezium-connector-mongodb │ ├── bson-3.4.2.jar │ ├── CHANGELOG.md │ ├── CONTRIBUTE.md │ ├── COPYRIGHT.txt │ ├── debezium-connector-mongodb-0.7.1.jar │ ├── debezium-core-0.7.1.jar │ ├── LICENSE.txt │ ├── mongodb-driver-3.4.2.jar │ ├── mongodb-driver-core-3.4.2.jar │ └── README.md ├── debezium-connector-mysql │ ├── CHANGELOG.md │ ├── CONTRIBUTE.md │ ├── COPYRIGHT.txt │ ├── debezium-connector-mysql-0.7.1.jar │ ├── debezium-core-0.7.1.jar │ ├── LICENSE.txt │ ├── mysql-binlog-connector-java-0.13.0.jar │ ├── mysql-connector-java-5.1.40.jar │ ├── README.md │ └── wkb-1.0.2.jar └── debezium-connector-postgres ├── CHANGELOG.md ├── CONTRIBUTE.md ├── COPYRIGHT.txt ├── debezium-connector-postgres-0.7.1.jar ├── debezium-core-0.7.1.jar ├── LICENSE.txt ├── postgresql-42.0.0.jar ├── protobuf-java-2.6.1.jar └── README.md -

Use the

oc start-buildcommand to start a new build of the image using the prepared directory:oc start-build my-connect-cluster-connect --from-dir ./my-plugins/NoteThe name of the build is the same as the name of the deployed Kafka Connect cluster. -

When the build has finished, the new image is used automatically by the Kafka Connect deployment.

4.2.3. Creating and managing connectors

When you have created a container image for your connector plug-in, you need to create a connector instance in your Kafka Connect cluster. You can then configure, monitor, and manage a running connector instance.

A connector is an instance of a particular connector class that knows how to communicate with the relevant external system in terms of messages. Connectors are available for many external systems, or you can create your own.

You can create source and sink types of connector.

- Source connector

-

A source connector is a runtime entity that fetches data from an external system and feeds it to Kafka as messages.

- Sink connector

-

A sink connector is a runtime entity that fetches messages from Kafka topics and feeds them to an external system.

Strimzi provides two APIs for creating and managing connectors:

-

KafkaConnectorresources (referred to asKafkaConnectors) -

Kafka Connect REST API

Using the APIs, you can:

-

Check the status of a connector instance

-

Reconfigure a running connector

-

Increase or decrease the number of tasks for a connector instance

-

Restart failed tasks (not supported by

KafkaConnectorresource) -

Pause a connector instance

-

Resume a previously paused connector instance

-

Delete a connector instance

KafkaConnector resources

KafkaConnectors allow you to create and manage connector instances for Kafka Connect in a Kubernetes-native way, so an HTTP client such as cURL is not required.

Like other Kafka resources, you declare a connector’s desired state in a KafkaConnector YAML file that is deployed to your Kubernetes cluster to create the connector instance.

You manage a running connector instance by updating its corresponding KafkaConnector, and then applying the updates. You remove a connector by deleting its corresponding KafkaConnector.

To ensure compatibility with earlier versions of Strimzi, KafkaConnectors are disabled by default. To enable them for a Kafka Connect cluster, you must use annotations on the KafkaConnect resource.

For instructions, see Enabling KafkaConnector resources in the Using Strimzi guide.

When KafkaConnectors are enabled, the Cluster Operator begins to watch for them. It updates the configurations of running connector instances to match the configurations defined in their KafkaConnectors.

Strimzi includes an example KafkaConnector, named examples/connect/source-connector.yaml. You can use this example to create and manage a FileStreamSourceConnector.

Availability of the Kafka Connect REST API

The Kafka Connect REST API is available on port 8083 as the <connect-cluster-name>-connect-api service.

If KafkaConnectors are enabled, manual changes made directly using the Kafka Connect REST API are reverted by the Cluster Operator.

The operations supported by the REST API are described in the Apache Kafka documentation.

4.2.4. Deploying a KafkaConnector resource to Kafka Connect

This procedure describes how to deploy the example KafkaConnector to a Kafka Connect cluster.

The example YAML will create a FileStreamSourceConnector to send each line of the license file to Kafka as a message in a topic named my-topic.

-

A Kafka Connect deployment in which

KafkaConnectorsare enabled -

A running Cluster Operator

-

Edit the

examples/connect/source-connector.yamlfile:apiVersion: kafka.strimzi.io/v1alpha1 kind: KafkaConnector metadata: name: my-source-connector (1) labels: strimzi.io/cluster: my-connect-cluster (2) spec: class: org.apache.kafka.connect.file.FileStreamSourceConnector (3) tasksMax: 2 (4) config: (5) file: "/opt/kafka/LICENSE" topic: my-topic # ...-

Enter a name for the

KafkaConnectorresource. This will be used as the name of the connector within Kafka Connect. You can choose any name that is valid for a Kubernetes resource. -

Enter the name of the Kafka Connect cluster in which to create the connector.

-

The name or alias of the connector class. This should be present in the image being used by the Kafka Connect cluster.

-

The maximum number of tasks that the connector can create.

-

Configuration settings for the connector. Available configuration options depend on the connector class.

-

-

Create the

KafkaConnectorin your Kubernetes cluster:kubectl apply -f examples/connect/source-connector.yaml -

Check that the resource was created:

kubectl get kctr --selector strimzi.io/cluster=my-connect-cluster -o name

4.3. Deploy Kafka MirrorMaker

The Cluster Operator deploys one or more Kafka MirrorMaker replicas to replicate data between Kafka clusters. This process is called mirroring to avoid confusion with the Kafka partitions replication concept. MirrorMaker consumes messages from the source cluster and republishes those messages to the target cluster.

4.3.1. Deploying Kafka MirrorMaker to your Kubernetes cluster

This procedure shows how to deploy a Kafka MirrorMaker cluster to your Kubernetes cluster using the Cluster Operator.

The deployment uses a YAML file to provide the specification to create a KafkaMirrorMaker or KafkaMirrorMaker2 resource depending on the version of MirrorMaker deployed.

In this procedure, we use the example files provided with Strimzi:

-

examples/mirror-maker/kafka-mirror-maker.yaml -

examples/mirror-maker/kafka-mirror-maker-2.yaml

For information about configuring KafkaMirrorMaker or KafkaMirrorMaker2 resources,

see Kafka MirrorMaker configuration in the Using Strimzi guide.

-

Deploy Kafka MirrorMaker to your Kubernetes cluster:

For MirrorMaker:

kubectl apply -f examples/mirror-maker/kafka-mirror-maker.yamlFor MirrorMaker 2.0:

kubectl apply -f examples/mirror-maker/kafka-mirror-maker-2.yaml -

Verify that MirrorMaker was successfully deployed:

kubectl get deployments

4.4. Deploy Kafka Bridge

The Cluster Operator deploys one or more Kafka bridge replicas to send data between Kafka clusters and clients via HTTP API.

4.4.1. Deploying Kafka Bridge to your Kubernetes cluster

This procedure shows how to deploy a Kafka Bridge cluster to your Kubernetes cluster using the Cluster Operator.

The deployment uses a YAML file to provide the specification to create a KafkaBridge resource.

In this procedure, we use the example file provided with Strimzi:

-

examples/bridge/kafka-bridge.yaml

For information about configuring the KafkaBridge resource,

see Kafka Bridge configuration in the Using Strimzi guide.

-

Deploy Kafka Bridge to your Kubernetes cluster:

kubectl apply -f examples/bridge/kafka-bridge.yaml -

Verify that Kafka Bridge was successfully deployed:

kubectl get deployments

5. Setting up client access to the Kafka cluster

After you have deployed Strimzi, the procedures in this section explain how to:

-

Deploy example producer and consumer clients, which you can use to verify your deployment

-

Set up external client access to the Kafka cluster

The steps to set up access to the Kafka cluster for a client outside Kubernetes are more complex, and require familiarity with the Kafka component configuration procedures described in the Using Strimzi guide.

5.1. Deploying example clients

This procedure shows how to deploy example producer and consumer clients that use the Kafka cluster you created to send and receive messages.

-

The Kafka cluster is available for the clients.

-

Deploy a Kafka producer.

kubectl run kafka-producer -ti --image=strimzi/kafka:0.19.0-kafka-2.5.0 --rm=true --restart=Never -- bin/kafka-console-producer.sh --broker-list cluster-name-kafka-bootstrap:9092 --topic my-topic -

Type a message into the console where the producer is running.

-

Press Enter to send the message.

-

Deploy a Kafka consumer.

kubectl run kafka-consumer -ti --image=strimzi/kafka:0.19.0-kafka-2.5.0 --rm=true --restart=Never -- bin/kafka-console-consumer.sh --bootstrap-server cluster-name-kafka-bootstrap:9092 --topic my-topic --from-beginning -

Confirm that you see the incoming messages in the consumer console.

5.2. Setting up access for clients outside of Kubernetes

This procedure shows how to configure client access to a Kafka cluster from outside Kubernetes.

Using the address of the Kafka cluster, you can provide external access to a client on a different Kubernetes namespace or outside Kubernetes entirely.

You configure an external Kafka listener to provide the access.

The following external listener types are supported:

-

routeto use OpenShiftRouteand the default HAProxy router -

loadbalancerto use loadbalancer services -

nodeportto use ports on Kubernetes nodes -

ingressto use Kubernetes Ingress and the NGINX Ingress Controller for Kubernetes

The type chosen depends on your requirements, and your environment and infrastructure. For example, loadbalancers might not be suitable for certain infrastructure, such as bare metal, where node ports provide a better option.

In this procedure:

-

An external listener is configured for the Kafka cluster, with TLS encryption and authentication, and Kafka simple authorization is enabled.

-

A

KafkaUseris created for the client, with TLS authentication and Access Control Lists (ACLs) defined for simple authorization.

You can configure your listener to use TLS or SCRAM-SHA authentication, both of which can be used with TLS encryption. If you are using an authorization server, you can use token-based OAuth 2.0 authentication and OAuth 2.0 authorization.

When you configure the KafkaUser authentication and authorization mechanisms, ensure they match the equivalent Kafka configuration:

-

KafkaUser.spec.authenticationmatchesKafka.spec.kafka.listeners.*.authentication -

KafkaUser.spec.authorizationmatchesKafka.spec.kafka.authorization

You should have at least one listener supporting the authentication you want to use for the KafkaUser.

|

Note

|

Authentication between Kafka users and Kafka brokers depends on the authentication settings for each. For example, it is not possible to authenticate a user with TLS if it is not also enabled in the Kafka configuration. |

Strimzi operators automate the configuration process:

-

The Cluster Operator creates the listeners and sets up the cluster and client certificate authority (CA) certificates to enable authentication within the Kafka cluster.

-

The User Operator creates the user representing the client and the security credentials used for client authentication, based on the chosen authentication type.

In this procedure, the certificates generated by the Cluster Operator are used, but you can replace them by installing your own certificates. You can also configure your listener to use a Kafka listener certificate managed by an external Certificate Authority.

Certificates are available in PKCS #12 format (.p12) and PEM (.crt) formats.

-

The Kafka cluster is available for the client

-

The Cluster Operator and User Operator are running in the cluster

-

A client outside the Kubernetes cluster to connect to the Kafka cluster

-

Configure the Kafka cluster with an

externalKafka listener.-

Define the authentication required to access the Kafka broker through the listener

-

Enable authorization on the Kafka broker

For example:

apiVersion: kafka.strimzi.io/v1beta1 kind: Kafka spec: kafka: # ... listeners: (1) external: type: LISTENER-TYPE (2) tls: true (3) authentication: type: tls (4) configuration: preferredAddressType: InternalDNS (5) overrides: (6) # ... authorization: (7) type: simple superUsers: - super-user-name (8) # ...-

Configuration options for enabling external listeners are described in the Kafka listeners schema reference

-

External listener type specified as

route,loadbalancer,nodeportoringress. -

Enables TLS encryption on the listener. Not required for

routelisteners. -

Authentication specified as

tls. -

(Optional, for

nodeportlisteners only) Configuration to specify a preference for the first address type used by Strimzi as the node address. -

(Optional, but not applicable to

ingresslisteners) Overrides customize the bootstrap and broker addresses advertised to clients. Strimzi automatically determines the addresses to advertise to clients. The addresses are automatically assigned by Kubernetes. Use overrides if the infrastructure on which you are running Strimzi does not provide the right address. Validation is not performed on overrides. The override configuration differs according to the external listener type, so you can override hosts forroute, DNS names or IP addresses forloadbalancer, and node ports (shown) fornodeport. Refer to the Kafka listeners schema reference for more information on external listeneroverrides. -

Authorization enables

simpleauthorization on the Kafka broker using theSimpleAclAuthorizerKafka plugin. -

(Optional) Super users can access all brokers regardless of any access restrictions defined in ACLs.

-

-

-

Create or update the

Kafkaresource.kubectl apply -f KAFKA-CONFIG-FILEThe Kafka cluster is configured with a Kafka broker listener using TLS authentication.

A service is created for each Kafka broker pod.

A service is created to serve as the bootstrap address for connection to the Kafka cluster.

A service is also created as the external bootstrap address for external connection to the Kafka cluster using

nodeportlisteners.The cluster CA certificate to verify the identity of the kafka brokers is also created with the same name as the

Kafkaresource. -

Find the bootstrap address and port from the status of the

Kafkaresource.kubectl get kafka KAFKA-CLUSTER-NAME -o jsonpath='{.status.listeners[?(@.type=="external")].bootstrapServers}'Use the bootstrap address in your Kafka client to connect to the Kafka cluster.

-

Extract the public cluster CA certificate and password from the generated

KAFKA-CLUSTER-NAME-cluster-ca-certSecret.kubectl get secret KAFKA-CLUSTER-NAME-cluster-ca-cert -o jsonpath='{.data.ca\.p12}' | base64 -d > ca.p12kubectl get secret KAFKA-CLUSTER-NAME-cluster-ca-cert -o jsonpath='{.data.ca\.password}' | base64 -d > ca.passwordUse the certificate and password in your Kafka client to connect to the Kafka cluster with TLS encryption.

NoteCluster CA certificates renew automatically by default. If your are using your own Kafka listener certificates, you will need to renew the certificates manually. -

Create or modify a user representing the client that requires access to the Kafka cluster.

-

Specify the same authentication type as the

Kafkalistener. -

Specify the authorization ACLs for simple authorization.

For example:

apiVersion: kafka.strimzi.io/v1beta1 kind: KafkaUser metadata: name: my-user labels: strimzi.io/cluster: my-cluster (1) spec: authentication: type: tls (2) authorization: type: simple acls: (3) - resource: type: topic name: my-topic patternType: literal operation: Read - resource: type: topic name: my-topic patternType: literal operation: Describe - resource: type: group name: my-group patternType: literal operation: Read-

The label must match the label of the Kafka cluster for the user to be created.

-

Authentication specified as

tls. -

Simple authorization requires an accompanying list of ACL rules to apply to the user. The rules define the operations allowed on Kafka resources based on the username (

my-user).

-

-

-

Create or modify the

KafkaUserresource.kubectl apply -f USER-CONFIG-FILEThe user is created, as well as a Secret with the same name as the

KafkaUserresource. The Secret contains a private and public key for TLS client authentication.For example:

apiVersion: v1 kind: Secret metadata: name: my-user labels: strimzi.io/kind: KafkaUser strimzi.io/cluster: my-cluster type: Opaque data: ca.crt: PUBLIC-KEY-OF-THE-CLIENT-CA user.crt: USER-CERTIFICATE-CONTAINING-PUBLIC-KEY-OF-USER user.key: PRIVATE-KEY-OF-USER user.p12: P12-ARCHIVE-FILE-STORING-CERTIFICATES-AND-KEYS user.password: PASSWORD-PROTECTING-P12-ARCHIVE -

Configure your client to connect to the Kafka cluster with the properties required to make a secure connection to the Kafka cluster.

-

Add the authentication details for the public cluster certificates:

security.protocol: SSL (1) ssl.truststore.location: PATH-TO/ssl/keys/truststore (2) ssl.truststore.password: CLUSTER-CA-CERT-PASSWORD (3) ssl.truststore.type=PKCS12 (4)-

Enables TLS encryption (with or without TLS client authentication).

-

Specifies the truststore location where the certificates were imported.

-

Specifies the password for accessing the truststore. This property can be omitted if it is not needed by the truststore.

-

Identifies the truststore type.

NoteUse security.protocol: SASL_SSLwhen using SCRAM-SHA authentication over TLS. -

-

Add the bootstrap address and port for connecting to the Kafka cluster:

bootstrap.servers: BOOTSTRAP-ADDRESS:PORT -

Add the authentication details for the public user certificates:

ssl.keystore.location: PATH-TO/ssl/keys/user1.keystore (1) ssl.keystore.password: USER-CERT-PASSWORD (2)-

Specifies the keystore location where the certificates were imported.

-

Specifies the password for accessing the keystore. This property can be omitted if it is not needed by the keystore.

The public user certificate is signed by the client CA when it is created.

-

-

6. Introducing Metrics to Kafka

This section describes setup options for monitoring your Strimzi deployment.

Depending on your requirements, you can:

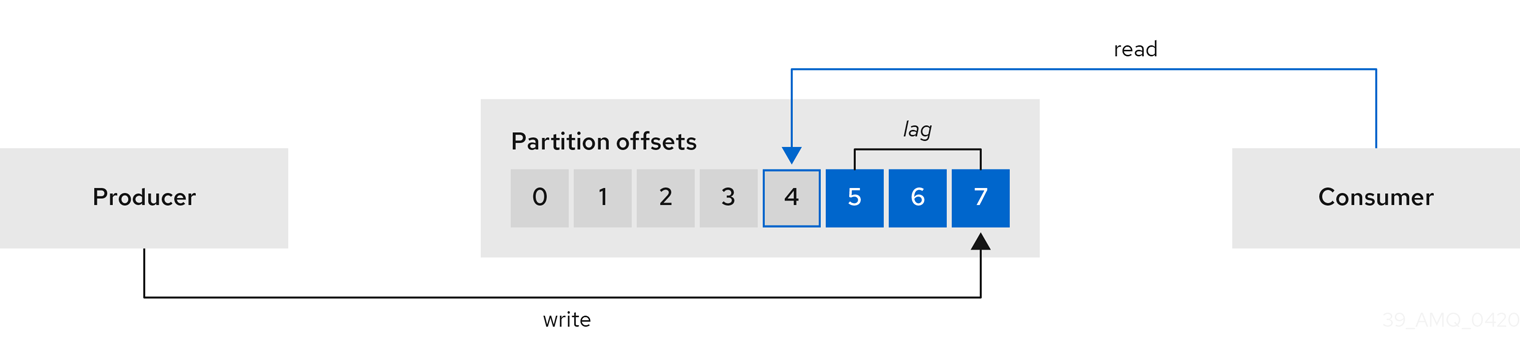

When you have Prometheus and Grafana enabled, Kafka Exporter provides additional monitoring related to consumer lag.

Additionally, you can configure your deployment to track messages end-to-end by setting up distributed tracing as described in the Using Strimzi guide.

-

For more information about Prometheus, see the Prometheus documentation.

-

For more information about Grafana, see the Grafana documentation.

-

Apache Kafka Monitoring describes JMX metrics exposed by Apache Kafka.

-

ZooKeeper JMX describes JMX metrics exposed by Apache ZooKeeper.

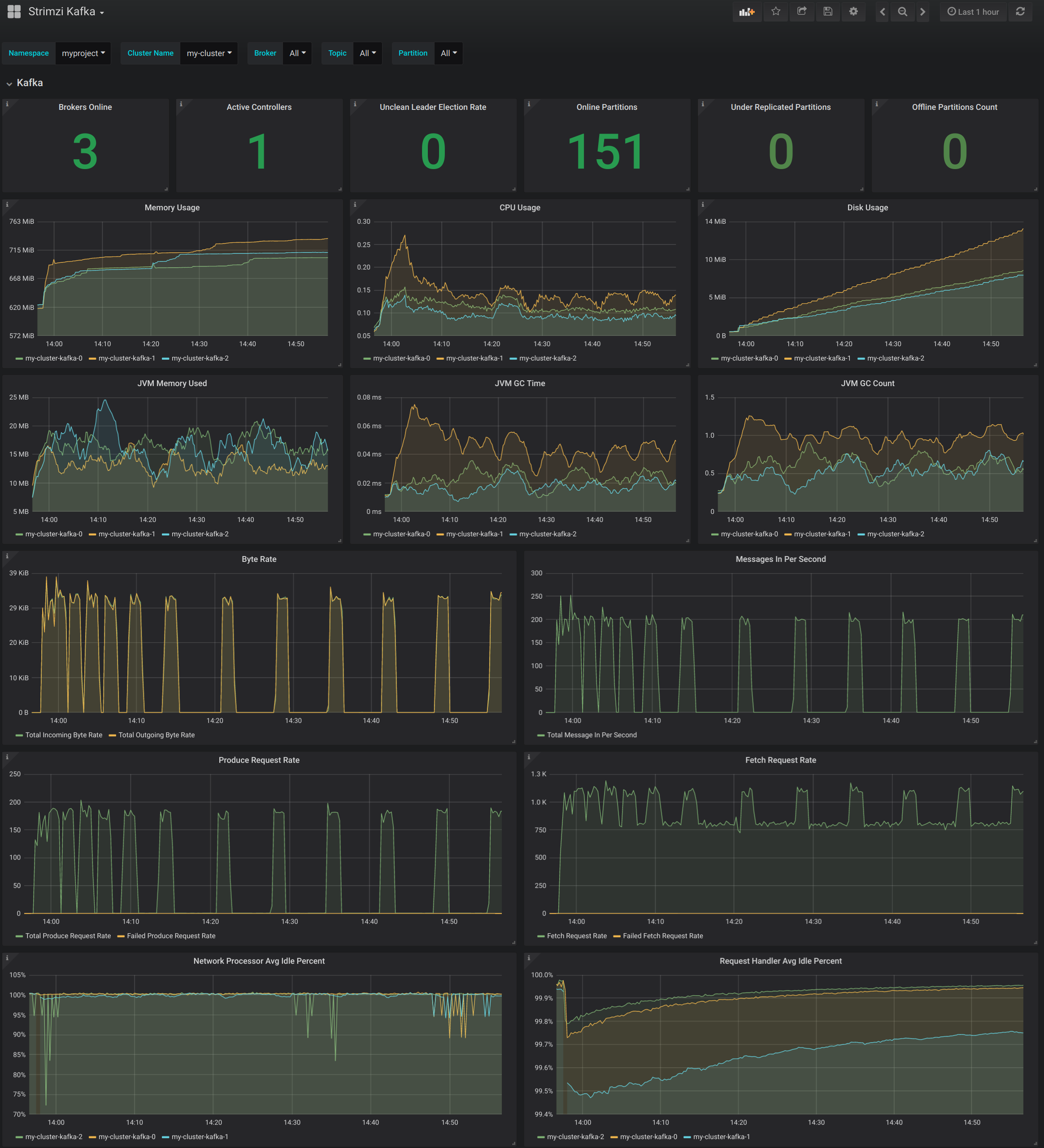

6.1. Add Prometheus and Grafana

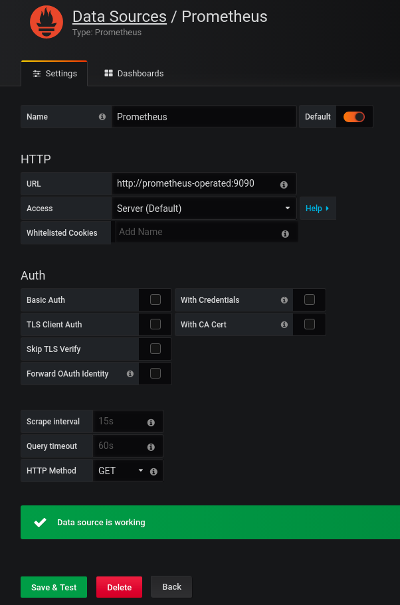

This section describes how to monitor Strimzi Kafka, ZooKeeper, Kafka Connect, and Kafka MirrorMaker and MirrorMaker 2.0 clusters using Prometheus to provide monitoring data for example Grafana dashboards.

Prometheus and Grafana can also be used to monitor Strimzi operators and Kafka Bridge instances. The example Grafana dashboard for operators provides:

-

Information about the operator such as the number of reconciliations or number of Custom Resources they are processing

-

JVM metrics from the operators

In order to run the example Grafana dashboards, you must:

|

Note

|

The resources referenced in this section are intended as a starting point for setting up monitoring, but they are provided as examples only. If you require further support on configuring and running Prometheus or Grafana in production, try reaching out to their respective communities. |

6.1.1. Example Metrics files

You can find the example metrics configuration files in the examples/metrics directory.

metrics

├── grafana-install

│ ├── grafana.yaml (1)

├── grafana-dashboards (2)

│ ├── strimzi-kafka-connect.json

│ ├── strimzi-kafka.json

│ ├── strimzi-zookeeper.json

│ ├── strimzi-kafka-mirror-maker-2.json

│ ├── strimzi-operators.json

│ ├── strimzi-kafka-bridge.json

│ └── strimzi-kafka-exporter.json (3)

├── kafka-connect-metrics.yaml (4)

├── kafka-metrics.yaml (5)

├── kafka-mirror-maker-2-metrics.yaml (6)

├── kafka-bridge-metrics.yaml (7)

├── prometheus-additional-properties

│ └── prometheus-additional.yaml (8)

├── prometheus-alertmanager-config

│ └── alert-manager-config.yaml (9)

└── prometheus-install

├── alert-manager.yaml (10)

├── prometheus-rules.yaml (11)

├── prometheus.yaml (12)

├── strimzi-pod-monitor.yaml (13)-

Installation file for the Grafana image

-

Grafana dashboards

-

Grafana dashboard specific to Kafka Exporter

-

Metrics configuration that defines Prometheus JMX Exporter relabeling rules for Kafka Connect

-

Metrics configuration that defines Prometheus JMX Exporter relabeling rules for Kafka and ZooKeeper

-

Metrics configuration that defines Prometheus JMX Exporter relabeling rules for Kafka Mirror Maker 2.0

-

Kafka Bridge resource with metrics enabled

-

Configuration to add roles for service monitoring

-

Hook definitions for sending notifications through Alertmanager

-

Resources for deploying and configuring Alertmanager

-

Alerting rules examples for use with Prometheus Alertmanager (deployed with Prometheus)

-

Installation file for the Prometheus image

-

Prometheus job definitions to scrape metrics data from pods

6.1.2. Exposing Prometheus metrics

Strimzi uses the Prometheus JMX Exporter to expose JMX metrics from Kafka and ZooKeeper using an HTTP endpoint, which is then scraped by the Prometheus server.

Prometheus metrics configuration

Strimzi provides example configuration files for Grafana.

Grafana dashboards are dependent on Prometheus JMX Exporter relabeling rules, which are defined for:

-

Kafka and ZooKeeper as a

Kafkaresource configuration in the examplekafka-metrics.yamlfile -

Kafka Connect as

KafkaConnectandKafkaConnectS2Iresources in the examplekafka-connect-metrics.yamlfile

A label is a name-value pair. Relabeling is the process of writing a label dynamically. For example, the value of a label may be derived from the name of a Kafka server and client ID.

|

Note

|

We show metrics configuration using kafka-metrics.yaml in this section, but the process is the same when configuring Kafka Connect using the kafka-connect-metrics.yaml file.

|

For more information on the use of relabeling, see Configuration in the Prometheus documentation.

Prometheus metrics deployment options

To apply the example metrics configuration of relabeling rules to your Kafka cluster, do one of the following:

Copying Prometheus metrics configuration to a Kafka resource

To use Grafana dashboards for monitoring, you can copy the example metrics configuration to a Kafka resource.

Execute the following steps for each Kafka resource in your deployment.

-

Update the